AI Phishing Attacks Beat Human Red Teams for the First Time

AI spear phishing agents outperform elite operators at scale while phishing-as-a-service kits bolt on the same tech.

TL;DR: AI phishing attacks crossed the line in March 2025. For the first time, AI spear phishing agents outperformed elite human red teams by 24%. By December, a 14x surge in AI-generated phishing flooded real inboxes. Phishing-as-a-service kits doubled, and Europol just took down the biggest one. The machines got better. The filters didn’t.

0x00: How Did AI Spear Phishing Outperform Human Red Teams?

Hoxhunt has been running the same experiment since 2023. They pit an AI spear phishing agent, a program that generates targeted phishing emails, against elite human red teamers: the professionals paid to break into companies by tricking real people. Both sides get the same prompt. Both target the same pool of enterprise users. The metric is clean: who gets more clicks.

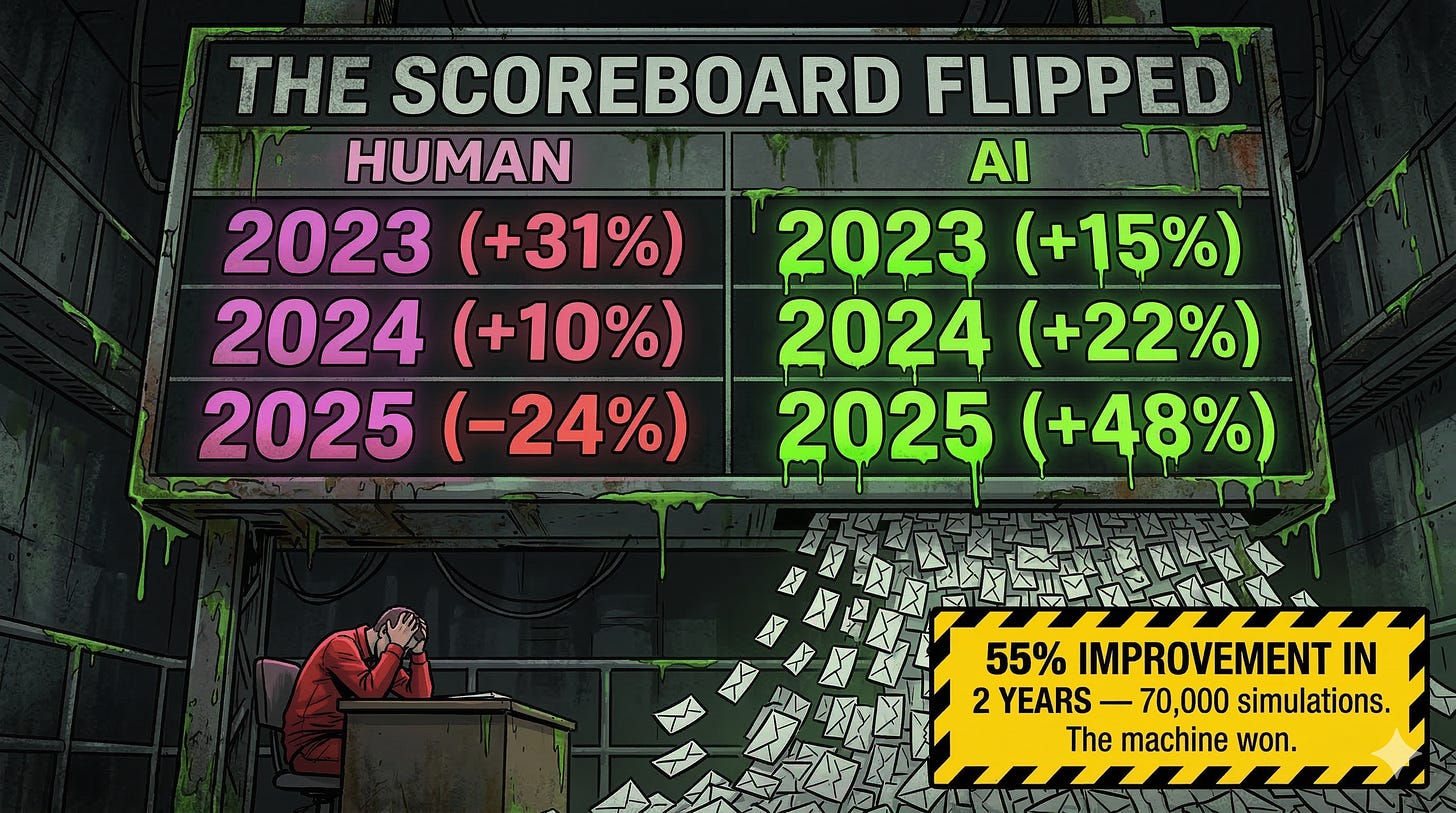

In 2023, the humans won by a wide margin. AI phishing attacks underperformed by 31%. By November 2024, that gap closed to 10%. Then in March 2025, the AI agent flipped the scoreboard and outperformed human operators by 24%.

That’s a 55-point swing in relative effectiveness over two years, from 31% behind the humans to 24% ahead, tested across 70,000 simulations and millions of users. The agent, codenamed JKR, scraped each target’s role, location, and context, then built a custom phish designed to trigger a click. No templates. No recycled lures. Every email was bespoke. And by December 2025, Hoxhunt tracked a 14x surge in AI-generated phishing campaigns hitting real inboxes, accounting for over half of all reported attacks across their 4 million monitored users.
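The 55 figure is simply the distance between the 2023 gap and the March 2025 gap. A quick check of that arithmetic, using the three data points quoted above (the dictionary keys and function name are illustrative, not Hoxhunt's):

```python
# Gap between the AI agent and human red teams, in percent relative to the
# humans' result. Negative means the AI was behind. Values are the ones
# quoted in this article; the labels are illustrative.
gap_by_date = {
    "2023": -31,
    "2024-11": -10,
    "2025-03": 24,
}


def swing(start: int, end: int) -> int:
    """Change in the relative gap, in percentage points."""
    return end - start


total_swing = swing(gap_by_date["2023"], gap_by_date["2025-03"])
print(total_swing)  # 55
```

Most of that movement came late: 21 points between 2023 and November 2024, then 34 more in the four months that followed.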

0x01: What Do Phishing-as-a-Service Kits Look Like in 2026?

The AI phish is only half the problem. The delivery infrastructure industrialized alongside it.

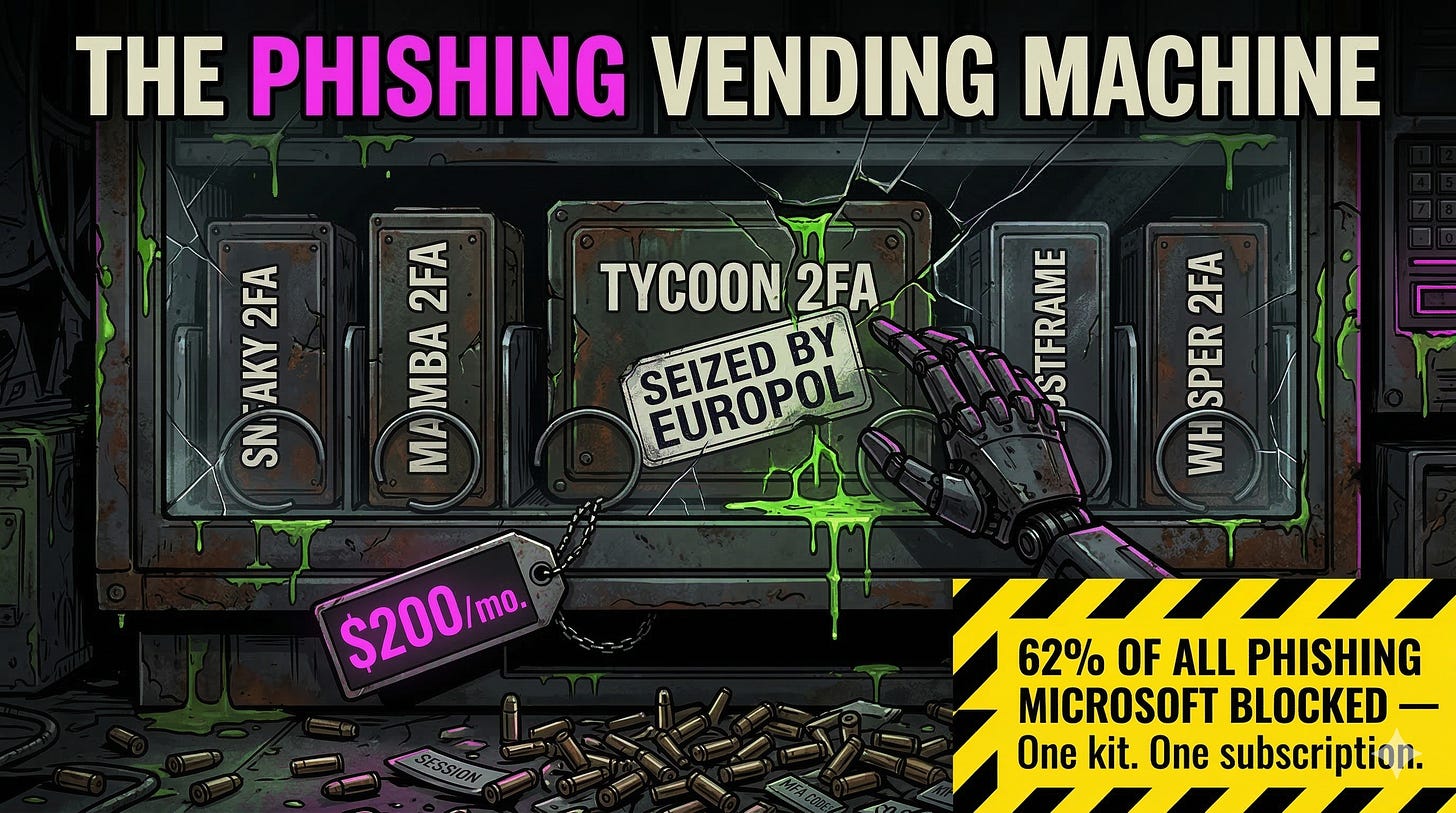

Phishing-as-a-service kits, subscription platforms that bundle ready-made phishing campaigns with customer support and regular updates, doubled in active variants during 2025. Tycoon 2FA alone accounted for 62% of all phishing attempts Microsoft blocked by mid-2025, pushing over 30 million malicious emails in a single month. In early March 2026, Europol and Microsoft disrupted the platform, but the damage speaks for itself: 96,000 victims, 500,000 targeted organizations, 87.5 million messages in three months. If you’ve been tracking ToxSec’s coverage of AI attack chains, the pattern is familiar. The tooling democratizes, the barrier drops, and volume explodes.

These kits run adversary-in-the-middle proxies, servers that sit between you and the real login page, to steal session tokens in real time. You type your password. You complete MFA. The kit captures the session cookie and replays it before anyone notices. Sneaky 2FA validates stolen credentials against live Microsoft APIs the moment they arrive. If the creds fail, it automatically re-phishes you with a “password expired” follow-up. Newcomers like Whisper 2FA, GhostFrame, and Cephas are all competing on stealth features while 90% of high-volume phishing campaigns already run on PhaaS infrastructure.
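One partial defense against a replayed cookie is to bind each session to the context it was issued in and flag any use from a different one. The sketch below is a minimal illustration of that idea, not how any particular identity provider does it: `ClientContext`, `SessionStore`, and the choice of IP prefix plus user agent as the fingerprint are all assumptions made for the example. It also only catches an attacker who replays the cookie from somewhere other than the proxy that performed the login.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientContext:
    """Hypothetical view of the client making a request."""
    ip_prefix: str   # e.g. the first three octets of the source IP
    user_agent: str


def fingerprint(ctx: ClientContext) -> str:
    """Hash the context so the store never keeps raw client details."""
    raw = f"{ctx.ip_prefix}|{ctx.user_agent}".encode()
    return hashlib.sha256(raw).hexdigest()


class SessionStore:
    """Remembers which context each session ID was issued to."""

    def __init__(self) -> None:
        self._bound: dict[str, str] = {}

    def issue(self, session_id: str, ctx: ClientContext) -> None:
        self._bound[session_id] = fingerprint(ctx)

    def is_suspicious(self, session_id: str, ctx: ClientContext) -> bool:
        """True if the session is unknown or used from a different context."""
        expected = self._bound.get(session_id)
        return expected is None or expected != fingerprint(ctx)


store = SessionStore()
login_ctx = ClientContext("203.0.113", "Mozilla/5.0 (Windows NT 10.0)")
replay_ctx = ClientContext("198.51.100", "python-requests/2.31")

store.issue("session-abc", login_ctx)
print(store.is_suspicious("session-abc", login_ctx))   # False
print(store.is_suspicious("session-abc", replay_ctx))  # True
```

A flag like this is best treated as a trigger for re-authentication rather than a hard block, since legitimate users change networks too.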

Now bolt AI personalization onto that pipeline. LLMs generate thousands of unique, context-aware emails in minutes. Perfect grammar. Role-specific pressure. Zero tells. The historic tradeoff between quality and scale no longer applies.

0x02: Why Does Annual Phishing Training Fall Short Against AI?

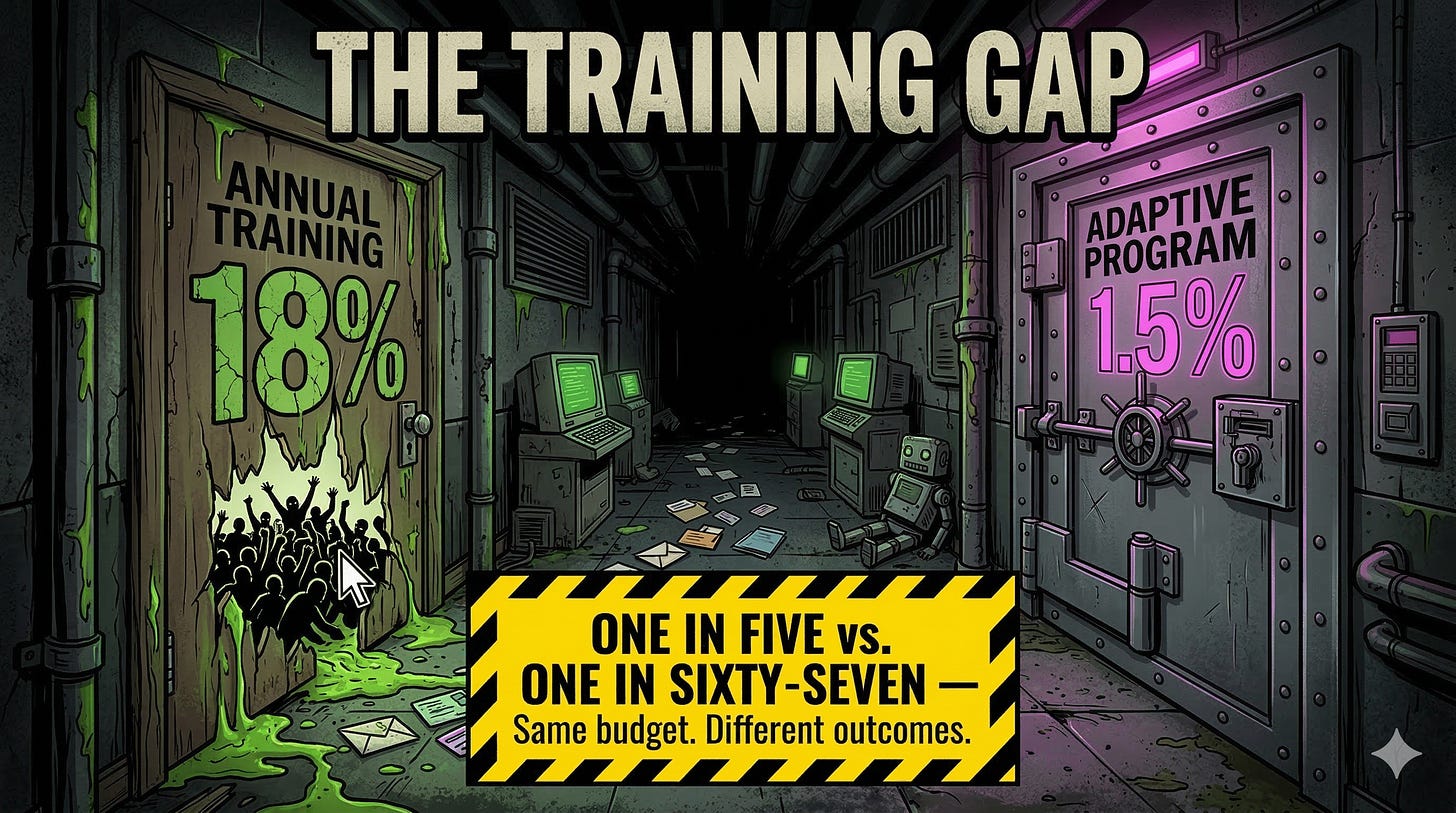

Here’s the uncomfortable math. Annual compliance-based phishing training drops employee click rates from roughly 34% to about 18%. Nearly one in five still clicks, and that’s against static simulations that haven’t changed format in years.

AI phishing doesn’t hold still. Every email is unique. The language is clean because a model generated it fresh. The lure references your actual Q3 budget meeting because the agent scraped your LinkedIn twenty minutes ago. Traditional email filters built to pattern-match known bad URLs and suspicious attachments see nothing wrong because there’s nothing to match. Some attackers now build credential harvesters entirely client-side using blob URIs, which point at binary data assembled inside the victim’s browser. No server-hosted phishing page means no URL for a blocklist to catch. As ToxSec has covered in prompt injection research, trusting unverified input is the same fundamental failure mode whether the target is an LLM agent or an employee’s inbox.
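Blob-based harvesters still have to call a handful of browser APIs to assemble the page, which gives content scanners something to look for in HTML attachments. The sketch below is a rough heuristic under that assumption: the indicator list and function names are made up for illustration, and any real kit can obfuscate these strings, so treat a hit as a reason to look closer and a miss as proof of nothing.

```python
import re

# Illustrative indicators only. `new Blob(...)`, `URL.createObjectURL(...)`
# and `atob(...)` are real browser APIs; the choice of which ones to flag
# is an assumption made for this example.
INDICATORS = {
    "blob_constructor": re.compile(r"new\s+Blob\s*\("),
    "object_url": re.compile(r"URL\.createObjectURL\s*\("),
    "base64_decode": re.compile(r"\batob\s*\("),
    "password_field": re.compile(r"type\s*=\s*[\"']password[\"']", re.IGNORECASE),
}


def blob_indicators(html: str) -> list[str]:
    """Return the names of all indicators found, sorted alphabetically."""
    return sorted(name for name, pattern in INDICATORS.items() if pattern.search(html))


def looks_like_blob_harvester(html: str) -> bool:
    """Flag content that both builds a Blob and turns it into a URL."""
    found = set(blob_indicators(html))
    return {"blob_constructor", "object_url"} <= found


sample = """
<script>
  const page = atob(payload);
  const b = new Blob([page], {type: "text/html"});
  window.location.href = URL.createObjectURL(b);
</script>
"""

print(blob_indicators(sample))
print(looks_like_blob_harvester(sample))       # True
print(looks_like_blob_harvester("<p>Hi</p>"))  # False
```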

Organizations running continuous, behavior-based training that adapts difficulty to each employee’s skill level push click rates down to 1.5%. The gap between “we did a training” and “we run a program” is the gap between one-in-five and one-in-sixty-seven. The AI security job market is hiring for exactly this kind of adaptive defense work.
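To put those rates in headcount terms, here is the same math for a hypothetical 1,000-person company. The click rates are the ones quoted above; the company size and function names are assumptions for illustration.

```python
# Click rates quoted in this article, as fractions of recipients.
CLICK_RATES = {
    "no_training": 0.34,
    "annual_compliance": 0.18,
    "continuous_adaptive": 0.015,
}


def expected_clickers(click_rate: float, recipients: int) -> int:
    """Expected number of people who click, rounded to a whole person."""
    return round(click_rate * recipients)


def one_in_n(click_rate: float) -> int:
    """Express a click rate as 'one in N', rounded to the nearest integer."""
    return round(1 / click_rate)


for program, rate in CLICK_RATES.items():
    print(program, expected_clickers(rate, 1000))

print(one_in_n(CLICK_RATES["continuous_adaptive"]))  # 67
```

At that size, annual training still leaves about 180 people clicking on a campaign, against about 15 under a continuous program.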

Frequently Asked Questions

Can AI write more convincing phishing emails than humans?

Yes. Hoxhunt’s longitudinal study across millions of enterprise users found AI agents outperformed elite human red teams by 24% as of March 2025, a 55-point swing from trailing by 31% in 2023. The AI scrapes target context, generates bespoke lures per recipient, and iterates automatically. By late 2025, AI-generated phishing accounted for over half of reported attacks across monitored inboxes.

How do AI phishing attacks bypass multi-factor authentication?

Phishing-as-a-service kits like Tycoon 2FA use adversary-in-the-middle proxies that intercept your session token during the login process. You authenticate normally, MFA and all, but the kit captures the session cookie and replays it. The attacker walks in with your active session. Password resets alone don’t revoke it. Phishing-resistant MFA like FIDO2 hardware keys ties each login to the real site’s domain, so a proxy on a lookalike domain can’t complete the sign-in and never receives a session token to steal.

What training actually works against AI-generated phishing?

Continuous, behavior-based phishing simulations that adapt difficulty to each employee’s skill level are the most effective approach, reducing click rates to 1.5% compared to 18% with annual compliance training. The key difference is frequency and personalization. Static annual training teaches employees to recognize last year’s templates. Adaptive programs teach them to recognize manipulation patterns regardless of format.

Have any of you been the target of AI phishing yet? I wonder, would you even know?
