Model Denial of Service Turns Your Cloud Bill Into a Weapon

LLM unbounded consumption, denial of wallet attacks, and why traditional rate limiting can’t save your AI budget

TL;DR: Model denial of service is the fastest way to turn someone else’s AI infrastructure into a money fire. Attackers don’t need to crash a server. They run your API bill into six figures while you sleep, and your cloud provider will happily charge you for every token.


0x00: What Is Model Denial of Service and Why It Costs Six Figures

Traditional denial of service floods a server until it falls over. Model denial of service keeps the server running while the bill explodes. OWASP originally cataloged this as LLM04: Model Denial of Service. In their 2025 update, they expanded it into LLM10: Unbounded Consumption, because the attack surface grew beyond simple crashes into cost, intellectual property theft, and service degradation.

The shift happened for a reason. Every major LLM provider (OpenAI, Anthropic, Google, AWS Bedrock) charges per token, the basic unit of text the model processes, roughly a word or word-piece. Every query your app handles burns tokens. Every token costs money. An attacker who forces the model to chew through millions of tokens isn’t disrupting service. They’re looting your cloud account. And the rise of AI agent black markets means stolen credentials find buyers fast.


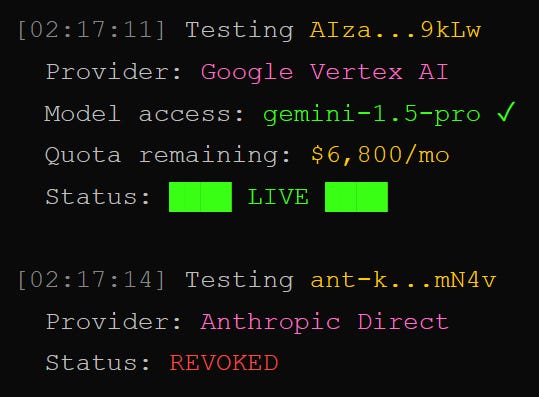

0x01: How Denial of Wallet Drains an AI Budget in Hours

The real kill shot has a name: Denial of Wallet (DoW). Unlike a classic DoS that aims for downtime, DoW weaponizes your own cloud bill against you. The attacker stays under your rate limits, avoids setting off availability alarms, and quietly maxes out your token spend.

The techniques are straightforward. Context window flooding pushes inputs right up against the model’s processing limit, forcing expensive computation on every request. Recursive prompting crafts inputs where the model’s output feeds back as input, so token usage compounds with every round trip. Reasoning loop exploitation targets chain-of-thought models (models that work through a problem step by step before answering) and tricks them into extended internal processing that burns thousands of output tokens from a single request.
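All three techniques rely on the same thing: nothing is capping input size, output size, or how many times output gets fed back in. Here is a minimal sketch of a per-chain guard, assuming your app can count tokens before each call. `ChainBudget`, `BudgetExceeded`, and every limit below are hypothetical names and placeholder numbers, not a provider API.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a request or chain would exceed its token budget."""


@dataclass
class ChainBudget:
    # All limits are illustrative assumptions, not recommendations.
    max_input_tokens: int = 8_000    # rejects context window flooding
    max_output_tokens: int = 1_000   # per-call output cap to pass to the model
    max_depth: int = 5               # stops output-feeds-input loops
    max_total_tokens: int = 30_000   # ceiling for the whole chain
    depth: int = 0
    total_tokens: int = 0

    def check_request(self, input_tokens: int) -> int:
        """Validate one call; return the output cap to send with it."""
        if input_tokens > self.max_input_tokens:
            raise BudgetExceeded("input too large")
        if self.depth >= self.max_depth:
            raise BudgetExceeded("chain too deep")
        remaining = self.max_total_tokens - self.total_tokens - input_tokens
        if remaining <= 0:
            raise BudgetExceeded("chain budget spent")
        return min(self.max_output_tokens, remaining)

    def record(self, input_tokens: int, output_tokens: int) -> None:
        """Record actual usage after the model responds."""
        self.depth += 1
        self.total_tokens += input_tokens + output_tokens
```

The idea is to call `check_request` before every model call, pass the returned value as whatever output-token cap your provider exposes, then call `record` with the usage the provider reports. Reasoning tokens are typically billed as output, so they should be counted in `record` too.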

Then there’s LLMjacking. Sysdig’s Threat Research Team documented attackers stealing cloud credentials and hijacking LLM services on AWS Bedrock. Worst case: over $46,000 per day in consumption costs for the victim. In March 2026, a developer posted on Reddit about an $82,000 Gemini API bill racked up in 48 hours from a single stolen key. Google cited their shared responsibility model. Payment was due. Entro Labs ran a sting operation, leaking AWS API keys on GitHub, Pastebin, and Reddit. Attackers validated and began exploiting those keys in as little as nine minutes. Stolen LLM credentials now sell for as little as $30 on underground forums.

How fast would your team notice a 4,000% spike in token usage at 2 AM?
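If the honest answer is "when the invoice arrives," even a crude check helps. The sketch below assumes you already log tokens per hour somewhere. `is_usage_spike` and its thresholds are hypothetical, and a real alerting setup would plug into your provider's billing or usage metrics rather than a Python list.

```python
from statistics import median


def is_usage_spike(
    history: list[int],
    current: int,
    max_ratio: float = 5.0,     # assumed alert threshold
    min_baseline: int = 1_000,  # floor so quiet hours don't cause false alarms
) -> bool:
    """Return True when current-hour tokens exceed max_ratio x the median of history."""
    if not history:
        return False  # no baseline yet; rely on hard spending caps instead
    baseline = max(median(history), min_baseline)
    return current > max_ratio * baseline


# A 4,000% spike over a 10,000 tokens/hour baseline lands at 410,000 tokens.
last_24_hours = [10_000] * 24
if is_usage_spike(last_24_hours, 410_000):
    print("page the on-call: token usage spike")
```

The median is used instead of the mean so one earlier bad hour doesn't raise the baseline and hide the next one.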

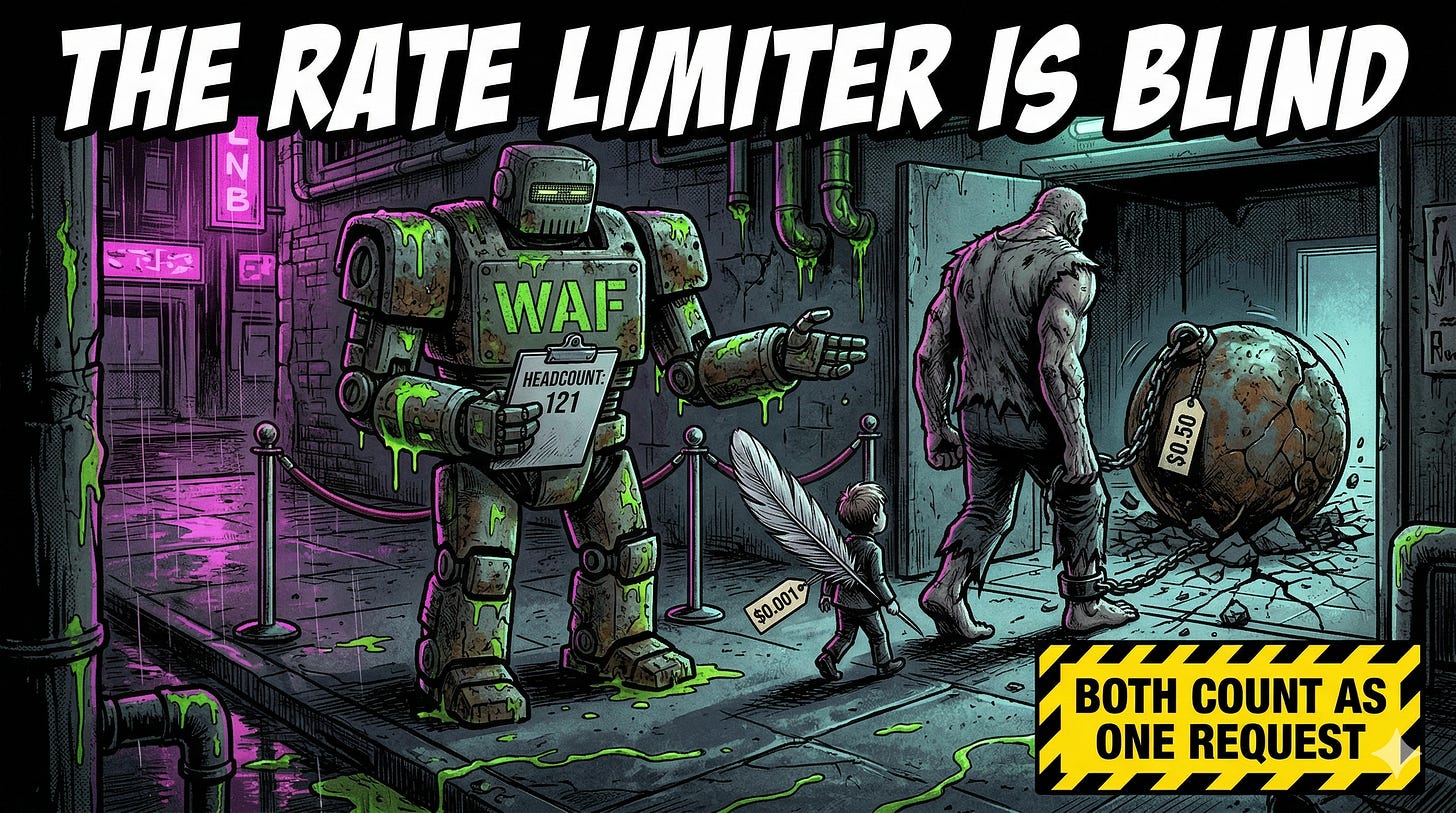

0x02: Why Standard Rate Limiting Fails Against LLM Token Abuse

Here’s the gap. Your WAF, the web application firewall sitting in front of your web traffic, counts requests per second. Your API gateway enforces rate limits per user. Both are built for the old model where one request costs roughly the same as any other request.

LLMs break that assumption completely. One request can cost $0.001 if it hits a cache. The next can cost $0.50 if it triggers a multi-step agentic workflow, where an AI agent calls other AI tools to complete a task. Both count as one request. Your rate limiter sees identical traffic. Your bill sees a 500x cost difference. This is the same class of blind spot that lets MCP tool poisoning slip past conventional defenses, and exactly why securing your MCP server matters before the bill arrives.
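To see where a gap like that comes from, here is a back-of-the-envelope sketch. The prices and token counts are made up for illustration (real pricing varies by provider and model), and `ModelCall` and `request_cost` are hypothetical helpers.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; check your provider's price list.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


@dataclass(frozen=True)
class ModelCall:
    input_tokens: int
    output_tokens: int


def request_cost(calls: list[ModelCall]) -> float:
    """Total USD cost of every model call made on behalf of one user request."""
    return sum(
        c.input_tokens * INPUT_PRICE_PER_M / 1_000_000
        + c.output_tokens * OUTPUT_PRICE_PER_M / 1_000_000
        for c in calls
    )


# One short question versus one request that fans out into ten agent steps.
short_request = [ModelCall(input_tokens=200, output_tokens=30)]
agentic_request = [ModelCall(input_tokens=10_000, output_tokens=1_400)] * 10

cheap = request_cost(short_request)
expensive = request_cost(agentic_request)
print(f"short: ${cheap:.5f}  agentic: ${expensive:.2f}  ratio: {expensive / cheap:.0f}x")
```

With these invented numbers the two requests differ in cost by a factor of several hundred, yet a request counter records each of them as exactly one hit.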

Cost-aware rate limiting, throttling based on token consumption instead of request count, is the defense most teams haven’t deployed. Without it, an attacker who figures out which prompts trigger the most expensive execution paths can drain your budget while staying comfortably under every traditional rate limit you’ve set. If your AI security checklist doesn’t include hard spending caps and billing anomaly alerts, you’re running exposed.
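As a rough illustration of the idea, here is a token-bucket limiter that charges each user for estimated LLM tokens rather than for requests. This is a sketch under assumptions, not a production design: `TokenBudgetLimiter` is a hypothetical class, state lives in process memory, and the estimate passed to `allow` would have to come from your own tokenizer and output cap.

```python
import time
from collections import defaultdict


class TokenBudgetLimiter:
    """Token-bucket limiter that charges LLM tokens instead of request counts."""

    def __init__(self, capacity: int, refill_per_sec: float, clock=time.monotonic):
        self.capacity = capacity              # max tokens a user can burst
        self.refill_per_sec = refill_per_sec  # sustained tokens per second
        self.clock = clock                    # injectable for testing
        self._level = defaultdict(lambda: float(capacity))
        self._last = {}

    def _refill(self, user: str) -> None:
        now = self.clock()
        elapsed = now - self._last.get(user, now)
        self._last[user] = now
        self._level[user] = min(
            self.capacity, self._level[user] + elapsed * self.refill_per_sec
        )

    def allow(self, user: str, estimated_tokens: int) -> bool:
        """Reserve estimated_tokens for this user, or refuse if the bucket is too low."""
        self._refill(user)
        if estimated_tokens > self._level[user]:
            return False
        self._level[user] -= estimated_tokens
        return True


limiter = TokenBudgetLimiter(capacity=50_000, refill_per_sec=100)
if not limiter.allow("user-123", estimated_tokens=12_000):
    print("429: token budget exhausted")
```

A cheap request barely dents the bucket while an expensive agentic one empties it quickly, which is the asymmetry a request counter can't see. A shared deployment would need the bucket state in something like a central store, and a hard spending cap on the cloud account remains the backstop.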


Frequently Asked Questions

What is the difference between model denial of service and denial of wallet?

Model denial of service crashes or degrades an AI system by overwhelming its compute resources. Denial of wallet keeps the system running perfectly while draining the cloud budget through excessive token consumption. OWASP folded both into “Unbounded Consumption” (LLM10:2025) because the attack surface now includes availability, cost, and model theft in a single risk category.

How much can an LLM denial of wallet attack actually cost?

Real-world incidents show costs from $46,000 per day (Sysdig’s LLMjacking research on AWS Bedrock) to $82,000 in 48 hours (a stolen Google Gemini API key reported in March 2026). Costs scale with the model’s per-token pricing, available quota limits, and how many regions the attacker hits simultaneously. Stolen credentials sell for as little as $30, making the ROI obscene for the attacker.

Can a WAF or standard rate limiter prevent LLM denial of service?

Standard rate limiters count requests, not cost. An attacker can stay under your request limit while triggering the most expensive execution paths available. Effective defense requires cost-aware rate limiting that tracks token consumption per user, hard spending caps on cloud accounts, and billing anomaly alerts that flag usage spikes before they become six-figure invoices.
