Shadow AI Turns Employees Into Your Biggest Data Leak

40% of AI interactions now carry sensitive corporate data while 82% of organizations can't see it happening

TL;DR: Shadow AI, the unsanctioned AI tools your workforce uses behind IT’s back, lives in the negligence category that now drives 53% of insider risk costs. IBM pegs the breach premium at $670,000. Cyberhaven says the average employee feeds sensitive data into AI tools once every three days. Your DLP sees none of it. The exfiltration looks like typing.


Your DLP Stack Watches Files While Data Walks Out the Browser

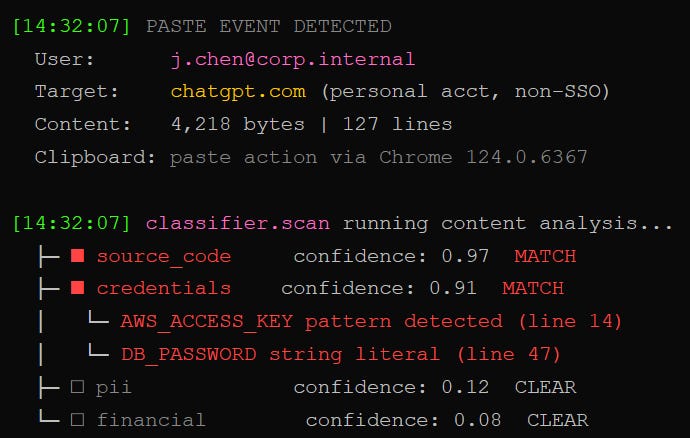

We pulled the data movement logs on a mid-size enterprise last quarter. Every DLP policy firing, every CASB rule humming along, every firewall exception tuned to approved channels. Clean bill of health. Then we looked at what employees actually pasted into ChatGPT on Tuesday.

DLP (data loss prevention) is the software that watches for sensitive files leaving the building through email, USB drives, or cloud uploads. It flags attachments, scans transfers, catches the obvious exits. The problem: DLP was built for a world where data leaves in files. Shadow AI broke that assumption completely.

Cyberhaven tracked data flows across 222 enterprises in their 2026 AI Adoption & Risk Report. 39.7% of all employee interactions with AI tools involve sensitive data. Source code. R&D materials. Sales projections. Customer records. The average employee feeds proprietary information into an AI tool once every three days. To DLP, every single one of those events looks like someone typing into a website. No file transfer. No attachment. No flag.

A third of all ChatGPT usage still runs through personal accounts, completely outside corporate SSO and logging. The security operations center monitors approved channels where data stopped traveling two years ago.

How Invisible Data Loss Compounds to $19.5 Million

The first hit lands quiet. One engineer pastes a database schema into ChatGPT to debug a query. One analyst uploads a competitive analysis to get a summary. Each event looks harmless in isolation. We stack them.

DTEX and the Ponemon Institute published the 2026 Cost of Insider Risks Report in February. The headline number: $19.5 million per year in average insider risk costs for organizations with 500+ employees. Up 20% since 2023. Negligent, non-malicious incidents, the category shadow AI lives in, drove $10.3 million of that total. Fifty-three percent. The single biggest slice. Up 17% year over year.
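The 53% figure follows directly from the two dollar amounts quoted above. A minimal sketch of that arithmetic, where the function name `share_of_total` is ours and not something from the report:

```python
def share_of_total(part_musd: float, total_musd: float) -> float:
    """Return the fraction of the total that a single category represents."""
    return part_musd / total_musd


# Figures quoted above, in millions of USD per year.
negligent_cost = 10.3   # negligent, non-malicious incidents
total_cost = 19.5       # average total insider risk cost

print(f"{share_of_total(negligent_cost, total_cost):.1%}")  # 52.8%, which rounds to 53%
```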

IBM’s 2025 Cost of a Data Breach Report sharpens the picture. Organizations with high shadow AI usage pay $670,000 more per breach than those without. One in five organizations traced a breach directly to shadow AI. Of those breached organizations, 97% lacked proper AI access controls. Ninety-seven percent.

Only 18% of organizations have fully integrated AI governance into their insider risk programs. Meanwhile, 73% of IT security professionals told DTEX they believe unauthorized AI use is creating invisible data exfiltration paths. They know the water’s rising. They just can’t find the leak.

How We Kill the Paste Pipeline With Sanctioned AI

We deploy the same class of tool that exposed the problem in the first place. Data lineage, the ability to trace every piece of information from creation through every copy, paste, and upload, is the first control that actually works against shadow AI.
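To make the lineage idea concrete, here is a minimal sketch that assumes every create, copy, paste, and upload is logged as a record with a source and a target. The `Move` record, the `trace_origin` function, and the example locations are all hypothetical illustrations, not any vendor's data model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Move:
    """One hypothetical lineage record: data went from source to target."""
    action: str               # "create", "copy", "paste", or "upload"
    source: Optional[str]     # None when the data was created at target
    target: str


def trace_origin(moves: List[Move], location: str) -> List[Move]:
    """Walk backwards from a location to where the data was created."""
    # Index each move by where the data landed (assumes one inbound move per target).
    inbound = {m.target: m for m in moves}
    chain: List[Move] = []
    seen = set()
    while location in inbound and location not in seen:
        seen.add(location)    # guard against cycles in the log
        move = inbound[location]
        chain.append(move)
        if move.source is None:
            break             # reached the creation event
        location = move.source
    return list(reversed(chain))  # oldest event first


moves = [
    Move("create", None, "repo/schema.sql"),
    Move("copy", "repo/schema.sql", "clipboard"),
    Move("paste", "clipboard", "chatgpt.com/prompt"),
]
for step in trace_origin(moves, "chatgpt.com/prompt"):
    print(step.action, "->", step.target)
```

The point of the walk is that the final paste stops being an anonymous web request: it resolves back to the file the data came from, which is what lets a policy decide whether that content should have left.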

Step one: sanctioned enterprise AI. Private deployment. Zero training on user inputs. Data retention policies the organization actually controls. When employees have a corporate AI tool that does the job, shadow AI usage collapses. One healthcare system reported an 89% reduction in unauthorized AI use after rolling out approved alternatives, plus 32 minutes of daily time saved per clinician. Employees don’t use shadow AI out of rebellion. They use it because the sanctioned tools either don’t exist or don’t work.

Step two: AI-aware DLP. Traditional DLP watches file transfers. AI-aware DLP watches paste actions, prompt submissions, and context window interactions. It flags when someone pastes source code into a consumer ChatGPT session and blocks the submission before the data hits the API. Only 17% of organizations have these controls today. We join that 17%.
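A rough sketch of the paste-inspection idea, assuming an agent that sees the pasted text and the destination host before the prompt is submitted. The rule set, the `SANCTIONED_HOSTS` allowlist, and the `inspect_paste` function are illustrative assumptions. Commercial AI-aware DLP products use far richer classifiers than a handful of regular expressions.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical detection rules, for illustration only.
RULES = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "sql_schema": re.compile(r"\bCREATE\s+TABLE\b", re.IGNORECASE),
    "source_code": re.compile(
        r"^\s*(def |class |import |#include\b|function\s+\w+\s*\()",
        re.MULTILINE,
    ),
}

# Hypothetical allowlist of sanctioned enterprise AI endpoints.
SANCTIONED_HOSTS = {"ai.internal.example.com"}


@dataclass
class Verdict:
    allow: bool
    matched: List[str]


def inspect_paste(text: str, destination_host: str) -> Verdict:
    """Decide whether a paste may be submitted to the given host."""
    matched = [name for name, pattern in RULES.items() if pattern.search(text)]
    if destination_host in SANCTIONED_HOSTS:
        # Sanctioned tool: allow, but keep the matches for audit logging.
        return Verdict(allow=True, matched=matched)
    # Unsanctioned tool: block whenever any sensitive pattern matched.
    return Verdict(allow=not matched, matched=matched)


print(inspect_paste("CREATE TABLE users (id INT);", "chatgpt.com"))
```

The same schema pasted into the sanctioned host would be allowed and logged, which mirrors the pairing of step one and step two: give people an approved destination, then intercept only the traffic headed elsewhere.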

The cost math isn’t complicated. Enterprise AI licenses run a fraction of the $670,000 shadow AI breach premium. The MCP security hardening playbook we covered last month applies the same principle to AI agent deployments: scope the blast radius, verify what you load, sanitize what you render.

Why Banning AI Makes It Worse (And What Actually Works)

Blocking websites doesn’t work. DTEX documented it in their 2026 report: when enterprises restricted ChatGPT, employees migrated to Gemini, Perplexity, and agentic AI browsers. Prohibition drives shadow AI underground. Bans make the problem harder to see.

We deploy behavioral intelligence instead. User behavior analytics (UBA), software that learns normal patterns and flags anomalies, catches the outlier activity that keyword filters miss: the employee who starts pasting financial projections into a new AI tool at 11 PM, or the developer uploading architecture diagrams to an unsanctioned coding assistant. The DTEX report shows organizations using behavioral monitoring reduced containment time from 86 days to 67 days. Mature insider risk programs prevented at least seven incidents per year, saving $8.2 million in breach costs.
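As a toy illustration of how a behavioral baseline differs from a keyword filter, the sketch below learns which destinations and hours are normal for each user, then flags departures from that pattern. The `Event` and `Baseline` names and the two signals are assumptions made for this example. Real UBA products model many more features and score them statistically.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass(frozen=True)
class Event:
    user: str
    destination: str      # host that received the paste or upload
    timestamp: datetime


class Baseline:
    """Per-user record of destinations and active hours seen so far."""

    def __init__(self) -> None:
        self.destinations = defaultdict(set)
        self.hours = defaultdict(set)

    def learn(self, event: Event) -> None:
        self.destinations[event.user].add(event.destination)
        self.hours[event.user].add(event.timestamp.hour)

    def anomalies(self, event: Event) -> List[str]:
        """Return the reasons this event departs from the user's baseline."""
        reasons = []
        if event.destination not in self.destinations[event.user]:
            reasons.append("new_destination")
        if event.timestamp.hour not in self.hours[event.user]:
            reasons.append("unusual_hour")
        return reasons


baseline = Baseline()
for hour in range(9, 18):  # a normal working day on the sanctioned tool
    baseline.learn(Event("ana", "ai.internal.example.com", datetime(2026, 3, 2, hour)))

late_paste = Event("ana", "newtool.example.net", datetime(2026, 3, 2, 23))
print(baseline.anomalies(late_paste))  # ['new_destination', 'unusual_hour']
```

Neither signal depends on what was pasted, which is why this kind of check catches the 11 PM paste into a new tool even when the content contains no flagged keyword.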

The governance piece: we write the AI policy that 63% of organizations still don’t have. We build an approval process for AI tool deployments. We classify AI agents as insiders, because they operate with delegated authority, persistence, and reach that matches or exceeds a human employee. Only 19% of organizations do this today. That gap is where the next wave of data loss lives. And as our coverage of AI agent security risks has shown, the agents themselves carry a whole separate attack surface that most governance frameworks haven’t caught up to.

The Data Left Voluntarily

Your workforce optimized for speed. Your security stack optimized for files. That mismatch feeds an insider risk bill that averages $19.5 million a year, and 82% of organizations still can’t see it happening.


Frequently Asked Questions

What is shadow AI and why does it bypass traditional DLP?

Shadow AI is any AI tool employees use without IT approval. Think personal ChatGPT accounts, browser-based AI assistants, or unsanctioned coding tools. Traditional DLP monitors file transfers and email attachments. Shadow AI exfiltration happens through paste events and prompt submissions inside HTTPS sessions, which DLP treats as normal web browsing. Cyberhaven’s 2026 data shows 39.7% of employee interactions with AI tools carry sensitive data.

How much does a shadow AI data breach actually cost?

IBM’s 2025 Cost of a Data Breach Report found that organizations with high shadow AI usage paid $670,000 more per breach than organizations without. The 2026 Cost of Insider Risks Report from DTEX and the Ponemon Institute puts the broader damage at $19.5 million per year in total insider risk costs, with non-malicious negligence (the category shadow AI falls into) accounting for $10.3 million of that total.

Does blocking ChatGPT stop shadow AI data leaks?

No. DTEX’s research confirms that blocking popular AI tools just pushes employees to alternatives: Gemini, Perplexity, agentic AI browsers, and open-source models. The effective approach is deploying sanctioned enterprise AI tools that meet employee needs, paired with AI-aware DLP that monitors paste events and prompt submissions in real time.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.

This is absolute insanity on the part of these organisations. A lot of this could be easily addressed with clear governance, proper training, and SLMs with proper guardrails. But I guess none of that is particularly revolutionary, so it is unlikely to be taken up. Thanks again for highlighting such a serious issue with such great clarity. 🙏

This is a sign that organizations are slow to keep up with employee needs. In the same way infosec needs to be a partner to be effective, AI governance needs to empower people, not slow them down.

That's the hard part, especially when companies are not set up to evolve as quickly as the environments where they operate.

Does anyone else see parallels between security control implementation and AI governance?