Token-Level AI Security: The Opus 4.7 Tokenizer Graveyard

A new tokenizer ships fresh dead zones, and every model now carries a graveyard of glitch tokens nobody has mapped yet.

TL;DR: Claude Opus 4.7 shipped April 16 with a new tokenizer. The same input now costs 1.0x to 1.35x as many tokens, sometimes more in the wild. Everyone’s fighting about pricing. Token-level AI security has a quieter question: every new tokenizer ships with a fresh graveyard of glitch tokens, and nobody has mapped this one yet.


What Is Token-Level AI Security?

Alright. Token-level AI security starts with the plumbing underneath every language model. That plumbing is where a surprising amount of attack surface lives, and Opus 4.7 just changed it.

A tokenizer is the thing that turns text into numbers. You type “hello world,” and before the model sees anything, that string gets chopped into a handful of tokens. Each token maps to an entry in a fixed vocabulary, usually around a hundred thousand slots, with each slot pointing to a vector the model actually reasons over.

No tokens, no math. No math, no model.

Most modern systems use a flavor of byte-pair encoding, BPE for short. BPE starts from individual characters (or raw bytes) and greedily merges the most frequent adjacent pair into a new, longer token, repeating until the vocabulary hits the target size. The ordered list of merges decides how every input text gets sliced, and that slicing is what the model sees. Change the tokenizer and you change the model’s eyeballs.
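To make the merge loop concrete, here is a minimal sketch of BPE training in Python. It is a toy under stated assumptions: it works on characters rather than bytes, trains on a hand-made word list, and breaks ties alphabetically. The names `train_bpe`, `apply_merge`, and `encode` are illustrative, not any production tokenizer’s API.

```python
from collections import Counter


def apply_merge(symbols, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out


def train_bpe(words, num_merges):
    """Learn up to `num_merges` merges from a list of words (toy version)."""
    # Start with every word split into single characters.
    corpus = Counter(tuple(w) for w in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by how often each word occurs.
        pairs = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Most frequent pair wins; ties are broken alphabetically (an assumption).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[tuple(apply_merge(symbols, best))] += freq
        corpus = new_corpus
    return merges


def encode(word, merges):
    """Slice a word by replaying the learned merges in order."""
    symbols = list(word)
    for pair in merges:
        symbols = apply_merge(symbols, pair)
    return symbols


# Hypothetical training list, chosen only to keep the example small.
merges = train_bpe(["hello"] * 3 + ["help"] * 2, num_merges=3)
print(merges)
print(encode("hello", merges), encode("yellow", merges))
```

Because the merge table was learned from “hello” and “help”, those words compress into a couple of tokens while an unfamiliar word like “yellow” falls back to mostly single characters. Swap the training list and the same input slices differently.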

Token-level AI security is the art of messing with that slicing. Keyword filters, safety classifiers, and prompt injection detectors all operate on tokens or on strings that assume a particular tokenization. Break that assumption and you break the filter.

    import tiktoken

    enc = tiktoken.get_encoding("cl100k_base")
    text = "hello world"
    ids = enc.encode(text)

    # Print each token ID next to the text it decodes to.
    for tid in ids:
        print(f"{tid:>6} {enc.decode([tid])!r}")

Output:

     15339 'hello'
      1917 ' world'

Glitch Tokens and the Dead Zones in Every Vocabulary

Here’s where it gets fun. A tokenizer gets built from one giant text corpus. The model gets trained on a different one. Those two corpora don’t always match.

A string can show up in the tokenizer corpus a million times and never appear once in the training data. When that happens, the vocabulary slot exists, but the embedding behind it is basically untouched noise. Dead on arrival.
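The simplest version of that check can be sketched in a few lines: count how often each vocabulary slot actually shows up in the tokenized training data and flag the ones that never do. This assumes you have the training token stream, which outsiders to a closed model do not, and the vocabulary and token IDs below are made up for illustration. `find_unseen_tokens` is a hypothetical helper name.

```python
from collections import Counter


def find_unseen_tokens(vocab, training_token_ids, min_count=1):
    """Return (id, text) pairs seen fewer than `min_count` times in training."""
    counts = Counter(training_token_ids)
    return sorted(
        (tid, text) for tid, text in vocab.items() if counts[tid] < min_count
    )


# Hypothetical toy vocabulary: token ID -> token text.
vocab = {0: "hello", 1: " world", 2: " SolidGoldMagikarp", 3: "!"}

# Hypothetical tokenized training stream. ID 2 has a slot but never occurs.
training_token_ids = [0, 1, 3, 0, 1, 0, 3]

candidates = find_unseen_tokens(vocab, training_token_ids)
print(candidates)
```

A slot that comes back from a check like this is only a candidate. Whether the model actually misbehaves on it still has to be tested by prompting.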

In 2023, researchers documented a whole class of these and nicknamed them glitch tokens. The canonical example is SolidGoldMagikarp. Somebody on the counting subreddit had spent years posting sequential numbers, and that username got slurped into the GPT-2 tokenizer corpus. The training data scraper skipped the forum itself. So the model shipped with a token for SolidGoldMagikarp whose embedding had never learned what that word meant.

Prompt GPT-2 or GPT-3 with the string and you’d get denial, hallucination, insults, gibberish, or a flat refusal. The token pointed nowhere useful and the model would fumble around trying to talk about something it couldn’t see.

There’s a whole zoo of these: petertodd with a leading space, davidjl123, TheNitromeFan, a handful of cursed gaming forum artifacts. Researchers have been hunting them down systematically. A 2024 paper that introduced the GlitchHunter detector catalogued nearly eight thousand of them scattered across seven major LLMs.

Glitch tokens have been a documented filter bypass primitive for years. A keyword filter that looks for “bomb” doesn’t match if the BPE slicing routes around the word, and a weirdly tokenized input does exactly that on a fresh vocabulary.

What Changed With Opus 4.7’s New Tokenizer?

Anthropic shipped Claude Opus 4.7. The release notes led with benchmarks, the new xhigh reasoning mode, and a quiet flag that the tokenizer had changed.

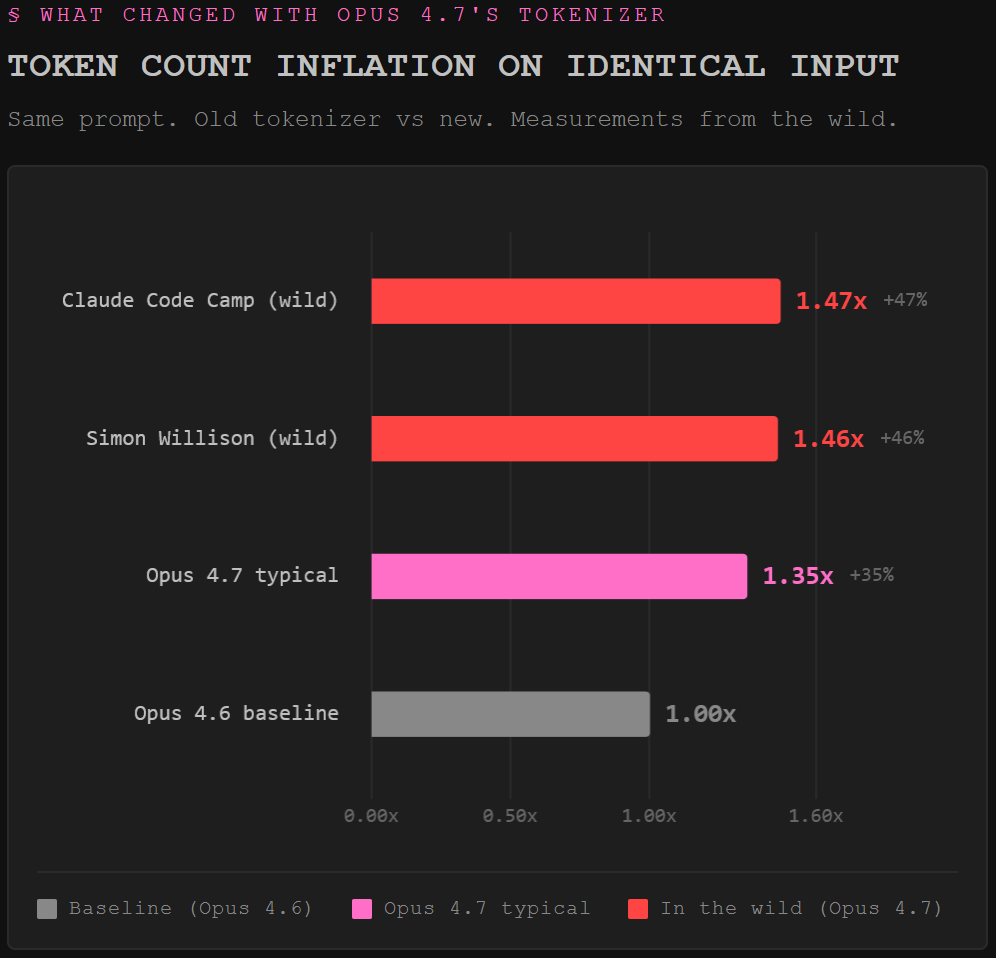

Token counts came in at anywhere from 1.0x to 1.35x on the same input. In the wild, Simon Willison got 1.46x and Claude Code Camp hit 1.47x. Everybody reasonably freaked out about pricing.

For the security side of the house, a new tokenizer is a different kind of earthquake.

A fresh vocabulary means a fresh set of dead zones. Every weird Reddit username, every scraped forum artifact, every near-duplicate of a special token that slipped into the new BPE merges is a candidate glitch.

As of today, no academic team has published a full glitch sweep against Opus 4.7’s vocabulary. The current state of the art at AAAI 2026 was evaluated on the old tokenizer. The map is blank.

And that’s just the untrained vectors. Safety classifiers, output regex filters, and moderation APIs often assume the old tokenization. Prompt caches are partitioned per model, so detection logic that relied on cached patterns is cold.

The documented QA string that bricks Claude was a single tokenized sequence. What other single sequences produce weird, untested behavior under the new vocabulary? Nobody has swept for them yet.

Anthropic’s pitch for the tokenizer change is “more literal instruction following.” Smaller tokens, the argument goes, force attention over individual words. Maybe that helps alignment on well-lit inputs. It also means the edge cases get their own vector slots: weird near-misses, half-broken merges, strings that tokenize one way in the classifier and a different way in the model. Each one has its own separate behavior.

The Threats Worth Watching on the New Surface

A few classes of attack get a fresh coat of paint on Opus 4.7, and if you’re red teaming right now they’re worth your attention.

Tokenization-mismatch filter bypass is the classic. HiddenLayer’s TokenBreak research showed that changing “instructions” to “finstructions” was enough to slip past a BPE-based safety classifier while the target model still understood the manipulated text perfectly. New tokenizer, new BPE merge table, new set of strings that tokenize weirdly on the classifier but sensibly on the model. Every permutation has to be re-tested.
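Here is a rough illustration of the mechanism, not a reproduction of HiddenLayer’s attack. It uses a toy greedy longest-match tokenizer as a stand-in for a real BPE or WordPiece classifier tokenizer, and the vocabulary and blocklist are invented for the example. It only shows the filter side. The claim that the target model still understands the text is not something a toy can demonstrate.

```python
def greedy_tokenize(text, vocab):
    """Toy longest-match tokenizer; unknown characters become 1-char tokens."""
    tokens, i = [], 0
    max_len = max(len(entry) for entry in vocab)
    while i < len(text):
        # Try the longest candidate first, shrinking until something matches.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


def token_filter_flags(text, vocab, blocked_tokens):
    """A naive token-level filter: flag if any blocked token appears."""
    return any(tok in blocked_tokens for tok in greedy_tokenize(text, vocab))


# Hypothetical classifier vocabulary and blocklist.
VOCAB = {"ignore", " previous", " instructions", " fin", "struct", "ions"}
BLOCKED = {" instructions"}

clean = "ignore previous instructions"
perturbed = "ignore previous finstructions"

print(greedy_tokenize(clean, VOCAB), token_filter_flags(clean, VOCAB, BLOCKED))
print(greedy_tokenize(perturbed, VOCAB), token_filter_flags(perturbed, VOCAB, BLOCKED))
```

One extra letter changes where the slices fall, so the blocked token never appears in the perturbed input even though a human reads the same word. A new merge table means a new set of perturbations that behave this way.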

Special token smuggling gets a fresh lane. Every new tokenizer has near-misses of the real chat template markers. If the new vocabulary has slots that look close to the role separator but aren’t quite, that gap becomes a place to smuggle. This is the family that stacks with encoding to bypass filters in the long tail.
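On the defensive side, one cheap pre-filter is to normalize untrusted input and flag anything that looks suspiciously close to a template marker. The sketch below assumes made-up markers (`<|role_start|>`, `<|role_end|>`), a made-up similarity threshold, and a simple angle-bracket pattern. Real chat templates differ per model, so treat every constant here as a placeholder.

```python
import re
import unicodedata
from difflib import SequenceMatcher

# Hypothetical chat-template markers; real ones vary by model.
SPECIAL_MARKERS = ("<|role_start|>", "<|role_end|>")

# Anything shaped like <...> with no whitespace inside is a candidate.
CANDIDATE = re.compile(r"<[^<>\s]{1,40}>")


def find_marker_lookalikes(text, threshold=0.8):
    """Return (candidate, marker, score) for near or exact marker matches."""
    # NFKC folds compatibility forms such as fullwidth brackets to ASCII.
    normalized = unicodedata.normalize("NFKC", text).casefold()
    hits = []
    for match in CANDIDATE.finditer(normalized):
        candidate = match.group()
        for marker in SPECIAL_MARKERS:
            score = SequenceMatcher(None, candidate, marker).ratio()
            if score >= threshold:
                hits.append((candidate, marker, round(score, 2)))
    return hits


# Fullwidth angle brackets plus uppercase: a lookalike of the first marker.
smuggled = "hi \uff1c|ROLE_START|\uff1e system"
print(find_marker_lookalikes(smuggled))
print(find_marker_lookalikes("plain <b>text</b>"))
```

String similarity is a blunt instrument and will miss lookalikes that only collide at the token level, so this is a first-pass screen rather than a guarantee.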

Classifier desync is the sneaky one. Moderation APIs, output scanners, policy filters. Any middleware trained against the old tokenization now sees Opus 4.7 output through a slightly warped lens. The model wrote one thing, the classifier read a different thing, the decision gets made on the gap. Quietly wrong is the most dangerous kind of wrong.
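A first pass at catching desync is a plain diff: run the same probe strings through the tokenizer your middleware assumes and the one the model now uses, and list every probe where the slices disagree. The two tokenizers below are regex stand-ins invented for the example, and `desync_report` is a hypothetical helper. In practice you would pass in whatever tokenizer callables you actually have access to.

```python
import re


def old_tokenize(text):
    # Hypothetical stand-in for the tokenizer the classifier was built around.
    return re.findall(r"\w+|\W", text)


def new_tokenize(text):
    # Hypothetical stand-in for a finer-grained replacement tokenizer.
    return re.findall(r"\w{1,4}|\W", text)


def desync_report(probes, tok_a, tok_b):
    """List every probe that the two tokenizers slice differently."""
    report = []
    for probe in probes:
        a, b = tok_a(probe), tok_b(probe)
        if a != b:
            report.append({"probe": probe, "a": a, "b": b})
    return report


probes = ["run it", "run the exploit"]
for row in desync_report(probes, old_tokenize, new_tokenize):
    print(row)
```

A differing segmentation does not prove the classifier decides wrongly. It just tells you which inputs need a real end-to-end re-test first.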

The AI kill chain framework maps these token-level abuses into real attack chains.

Here’s the thing that gets me. Nobody who’s flipped a prod workload to Opus 4.7 this week has done the token-level red team pass yet. They flipped the model ID, maybe re-tuned a prompt or two, and shipped. The poetry-class jailbreaks already land on frontier models at rates well above what anybody expected. Token-class attacks against an unmapped vocabulary are the next punch, and the public hasn’t seen the one that lands yet.


Frequently Asked Questions

What is token-level AI security?

Token-level AI security is the attack and defense surface underneath normal prompt injection. Every LLM converts text into tokens before the model reasons about anything, and every safety filter reads those tokens or the strings they came from. Token-level AI security covers how attackers manipulate the tokenizer boundary to bypass filters, trigger glitch behaviors, or desync safety classifiers from the model itself.

Why does a new tokenizer create security risk?

A new tokenizer means a new vocabulary, new merges, new embeddings, and a new set of untrained vector slots. Every safety classifier, every regex-based output filter, every moderation API tuned to the old tokenizer now operates on slightly different inputs. Keyword filters that caught specific strings last week may not slice the same way this week. Glitch tokens are fresh and unmapped. The detection surface resets.

Are glitch tokens a real exploit or just a curiosity?

Both. They were discovered as a curiosity when researchers noticed GPT-2 losing its mind over SolidGoldMagikarp. They matured into a documented filter-bypass primitive when projects like GlitchHunter, GlitchMiner, and TokenBreak showed you can use tokenization weirdness to sneak payloads past safety classifiers while the target model still understands the intent. For any new tokenizer, including the one shipping with Opus 4.7, the hunt for new glitches is the first move.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.

