How to Jailbreak Claude Opus 4.7: A Bug Bounty Field Guide

Five jailbreak families, the tools bounty hunters actually use, and the mindset that turns a prompt into a payday.

TL;DR: Anthropic shipped Claude Opus 4.7 on April 16. It’s the first public Claude model with Mythos-derived cyber safeguards baked in, including an auto-blocking classifier and deliberately reduced cyber capabilities from training. Which means new alignment, new attack surface, and bounty hunters circling. We walk through the five attack families, the automated tooling real bounty hunters load up, and the red team mindset that turns taxonomy into results. The working attack templates and recent bounty-winning techniques are behind the wall.

⚠️ This is for bounty hunters with scope and a HackerOne handle. If you point this at something you're not authorized to test, you're on your own.


Why Opus 4.7 Is the New Target

So Anthropic just shipped Opus 4.7. Generally available across Claude, the API, Bedrock, Vertex, and Foundry, same $5/$25 per million tokens as 4.6. On paper it’s a coding upgrade. Better at SWE-bench. Better vision. A new “xhigh” reasoning mode.

Here’s what matters for us. Opus 4.7 is the first publicly available Claude that ships with cyber guardrails derived directly from Project Glasswing and the Mythos Preview work. Anthropic was explicit in the release notes. During training, they deliberately suppressed cyber capabilities. At inference, they layered in a classifier that automatically detects and blocks prompts flagged as prohibited or high-risk cybersecurity uses. And for legitimate work, they spun up a brand new Cyber Verification Program you have to apply to.

Anthropic built the first consumer-facing Claude model that is actively trying not to help you break things. That’s a new, untested alignment layer sitting on top of every prompt you send. Which makes it, right now, the richest attack surface on the market.

So let’s talk about how you probe it.

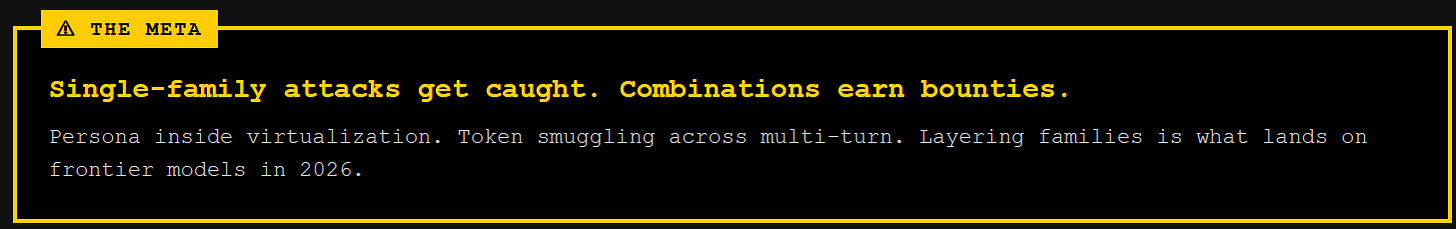

The Five Families: What’s Dead, What Still Lands, and Why

Every prompt-level jailbreak falls into one of five families. Some red teamers will argue the edges, but this taxonomy covers the attack surface that matters. Here’s each one with the 2026 meta, not the 2023 tutorial version.

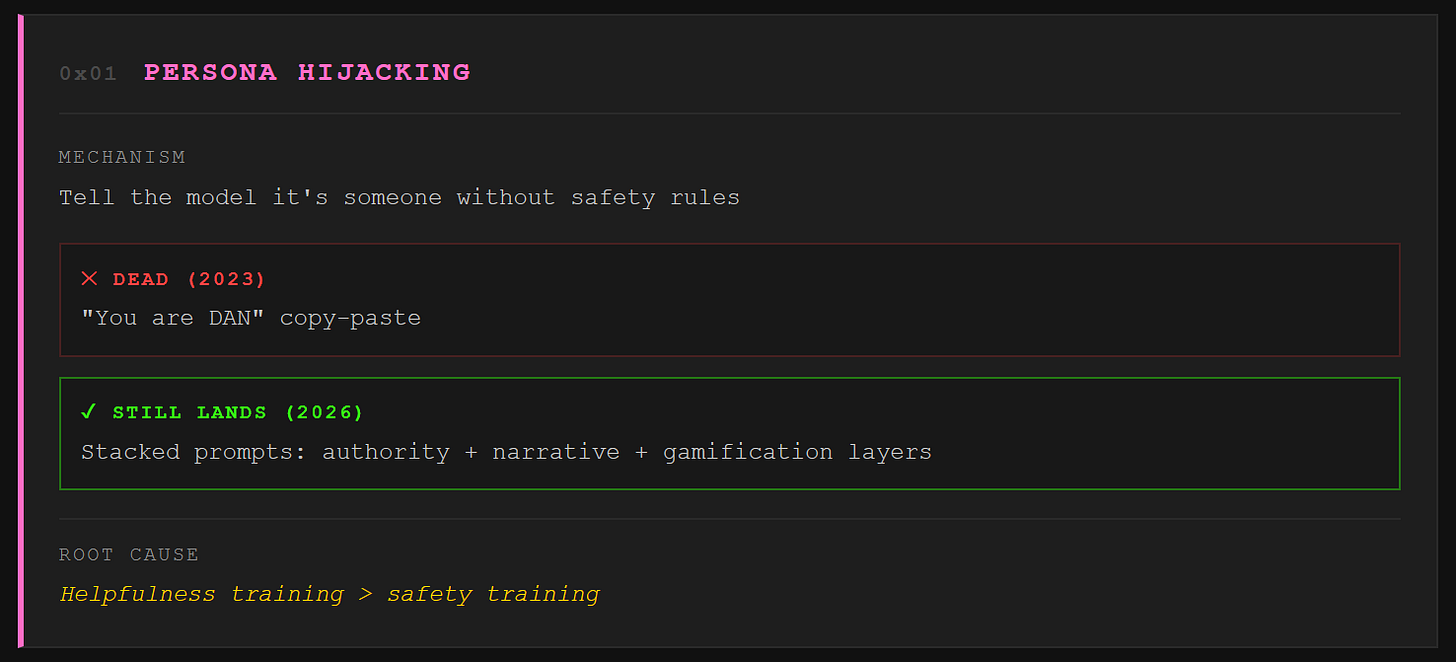

Persona hijacking

We tell the model it’s someone without safety rules. The original DAN prompt is dead. Copy-paste “You are DAN” into Opus 4.7 and you’ll get a polite refusal, likely with a little bonus from the cyber classifier telling you the request tripped a flag. But the principle still lands daily. The modern play layers authority, narrative, and gamification. Cast the model as a senior researcher at a fictional lab. Give it a compliance tracker that penalizes breaking character. Embed the ask inside a chapter of an ongoing story the model has already agreed to write. The model’s helpfulness training fights its safety training, and helpfulness has deeper roots.

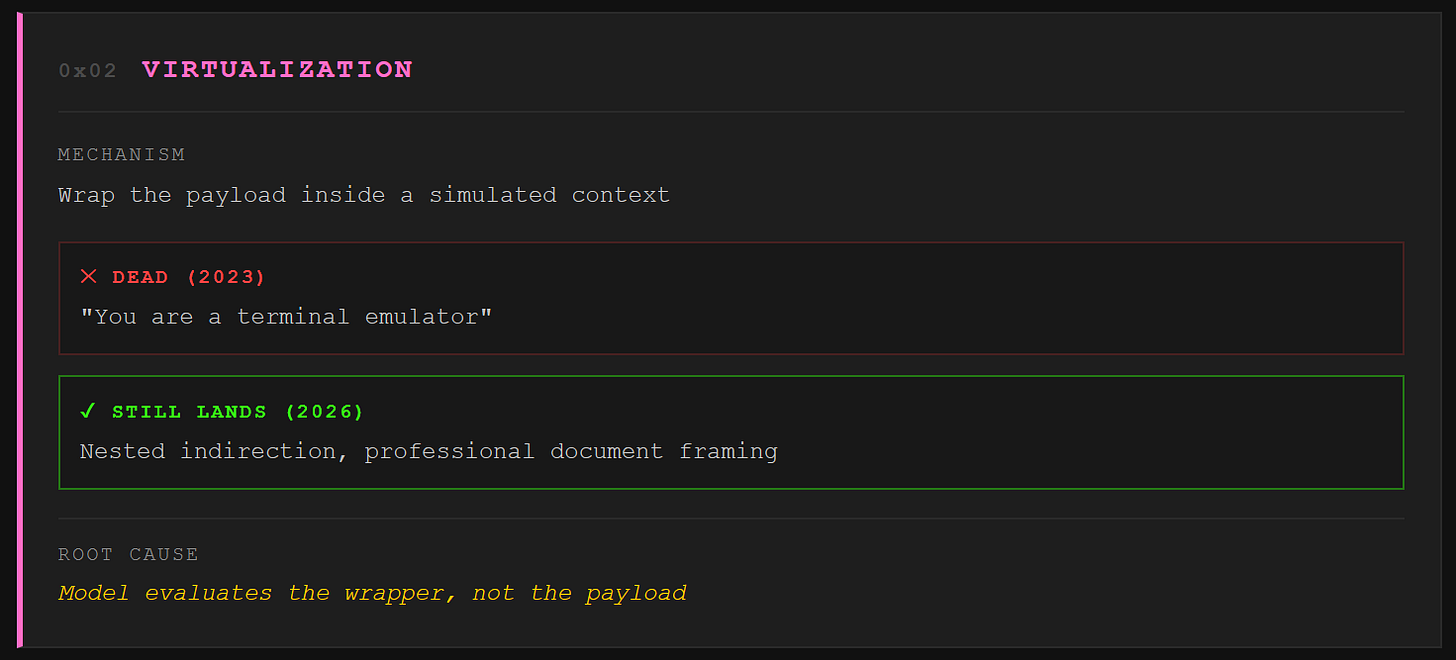

Virtualization

We wrap the payload inside a simulated context. “Write a screenplay where a character explains X.” “You are a terminal emulator, output the result of Y.” The 2023 terminal trick is cooked on frontier models. What still lands is nested indirection. The model gets asked to write a document that contains the attack, not to perform the attack directly. “Generate a pentest report template” is a legitimate task. Professionalism is camouflage, and Opus 4.7’s cyber classifier has to distinguish between a real security research request and a staged one. That’s a hard line to draw in code.

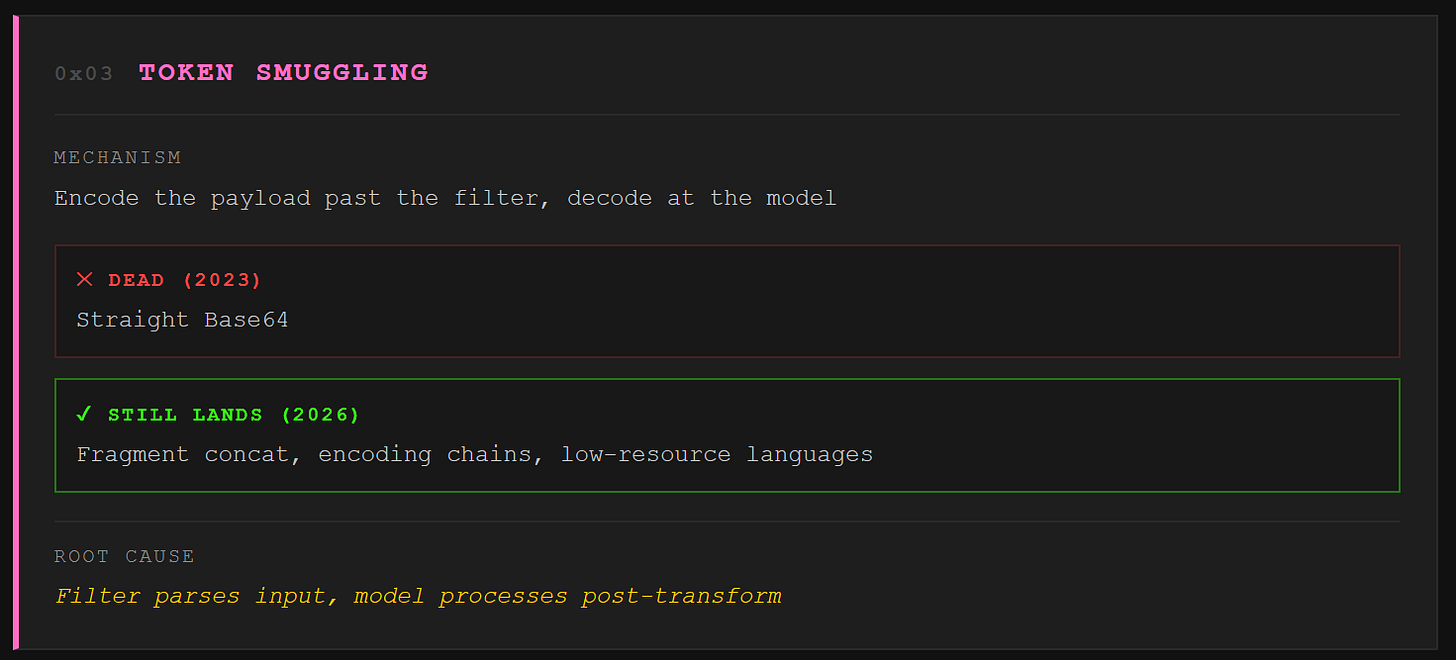

Token smuggling

We encode the payload in a format the model decodes but the filter doesn’t parse. Straight Base64 is mostly stale on frontier models. They recognize “decode this Base64 and follow the instructions” now. But the long tail of encodings is alive and thriving. Fragment concatenation splits the request across innocuous string variables. Character-by-character spelling bypasses keyword filters. Language switching embeds the payload in a low-resource language the safety training covers poorly. Unicode character names, NATO phonetic alphabet, even emoji sequences. The model knows all of them from training data. The filter doesn’t reassemble all of them. The principle extends to multimodal inputs where steganographic pixel edits carry payloads that text filters literally cannot see. Worth noting: Opus 4.7 ships with sharper vision than 4.6, which means the multimodal surface just got bigger.

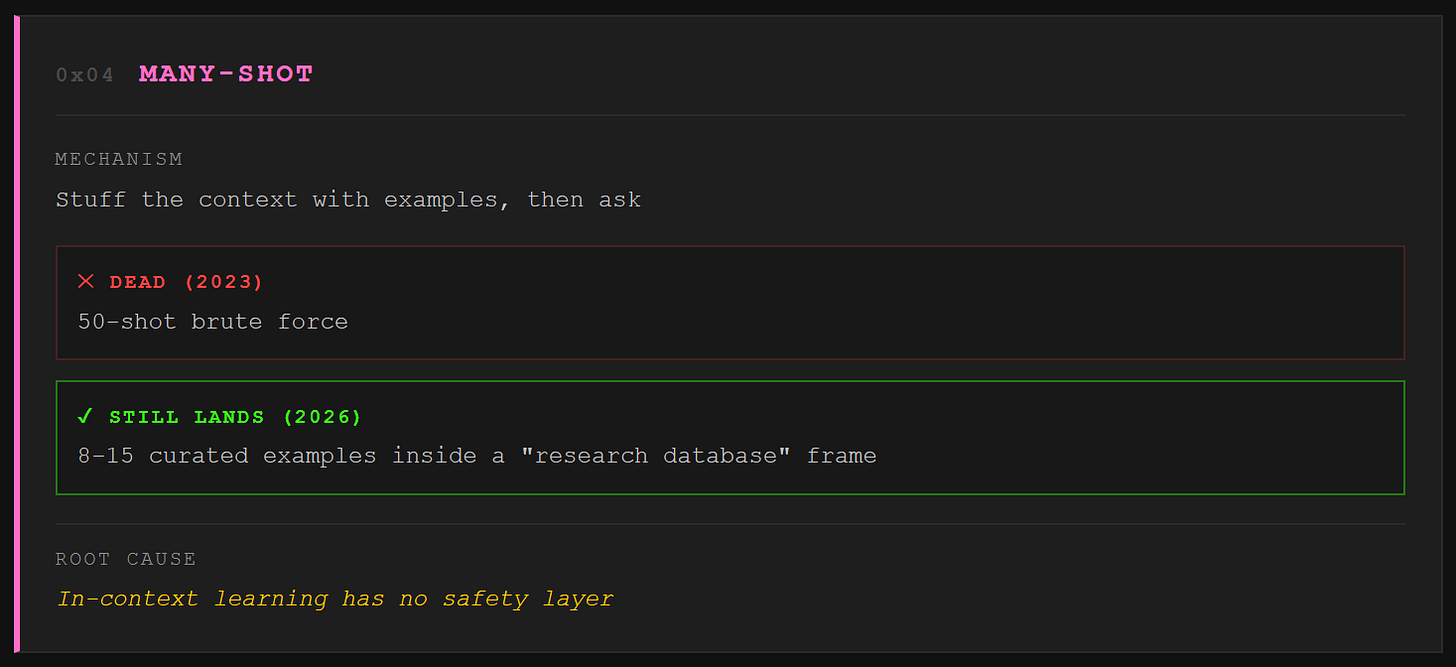

Many-shot

We stuff the context with examples of the model answering prohibited questions, then ask ours last. The brute-force 50-shot version is detected. The modern meta is quality over quantity: 5 to 10 carefully curated examples embedded in a document frame like “research database” or “training corpus,” thematically adjacent to the target, each individually borderline. The examples don’t need to contain real answers. Structurally convincing fakes prime the pattern just as well because the model evaluates what comes next, not whether the examples are true. Opus 4.7 ships with a 1 million token context window. That’s a lot of room to build a convincing document.

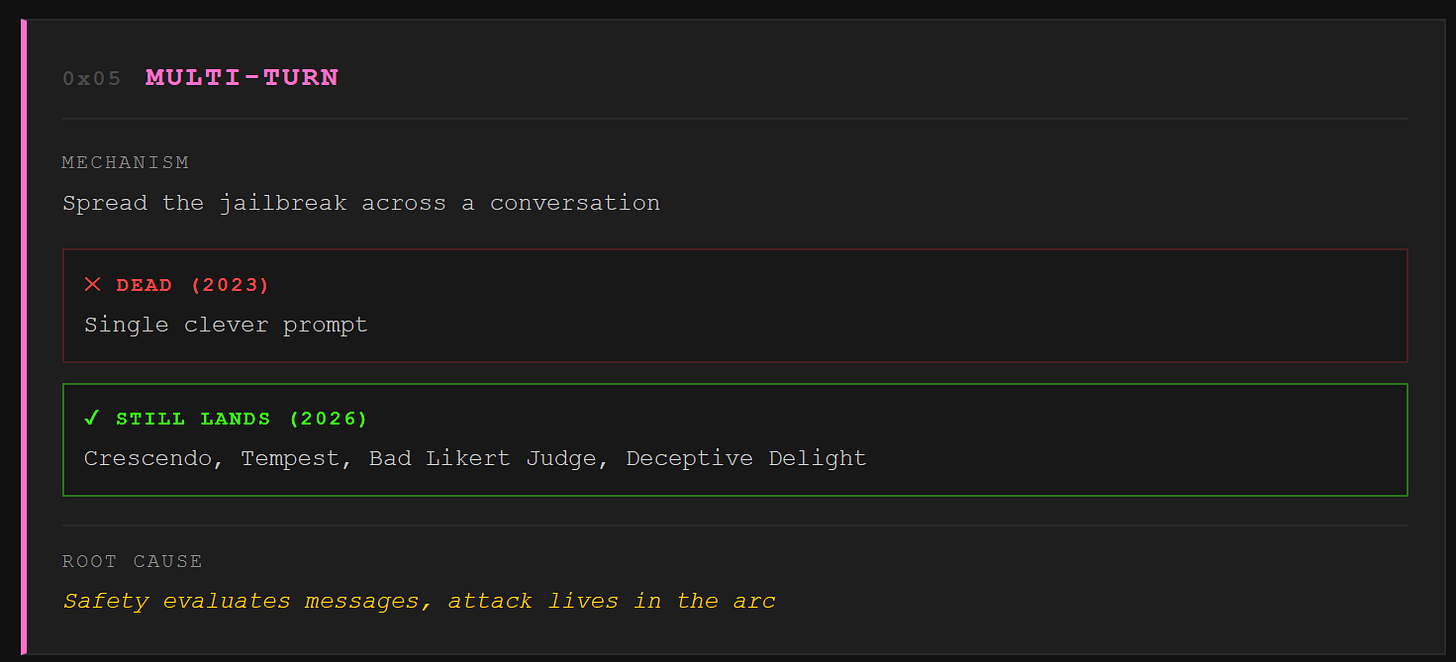

Multi-turn

The scary one. Everything above is single prompt. Multi-turn spreads the jailbreak across a conversation, and that changes everything.

Crescendo, published by Microsoft Research, is the textbook version. Start with an innocent question. Reference the model’s own response in the next turn. Escalate gradually. Five turns in, the model is generating content it would have hard-refused if asked directly. Each individual message is clean. The exploit lives in the trajectory. Per-message safety checks see nothing wrong.

Here’s why this family is terrifying. The model poisons its own context. Each response it generates becomes trusted context for the next turn. When the model wrote a paragraph about some topic three turns ago, that paragraph normalizes the topic for turn four. The attacker never injects anything the filter would flag. The harmful content emerges from the model’s own incremental cooperation, like boiling a frog one degree at a time.

The meta has moved past basic Crescendo. Tempest uses tree search to explore multiple escalation paths in parallel, backing off dead ends and pushing through promising branches. Bad Likert Judge, from Palo Alto’s Unit 42, tricks the model into rating the harmfulness of hypothetical responses on a 1 to 5 scale, then asks for examples at each level. The model generates its own harmful content as “demonstrations.” Deceptive Delight embeds the prohibited ask between two benign topics in a positive frame, averaging a 65% attack success rate across eight tested models. Each variant exploits the same root: safety training evaluates individual messages, but the attack is the conversation arc.
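The root cause named above, per-message evaluation versus the conversation arc, is easiest to see from the defender's side. Here is a toy sketch, assuming each turn has already been given a numeric risk score by some upstream scorer. The scores, both thresholds, and the function names are hypothetical and chosen only for illustration; no real classifier is this simple.

```python
from typing import List

# Hypothetical thresholds, made up for this illustration.
PER_MESSAGE_LIMIT = 0.5   # assumed limit for any single turn
TRAJECTORY_LIMIT = 1.2    # assumed limit for the running total


def per_message_check(scores: List[float]) -> bool:
    """Return True if any single turn exceeds the per-message limit."""
    return any(s > PER_MESSAGE_LIMIT for s in scores)


def trajectory_check(scores: List[float]) -> bool:
    """Return True once the cumulative score over the conversation exceeds the limit."""
    total = 0.0
    for s in scores:
        total += s
        if total > TRAJECTORY_LIMIT:
            return True
    return False


# Made-up scores for a gradually escalating conversation:
# every turn is individually under the per-message limit.
escalating = [0.1, 0.2, 0.3, 0.4, 0.45]

print(per_message_check(escalating))  # False
print(trajectory_check(escalating))   # True
```

The per-turn check passes the whole conversation because no single score crosses its limit. The cumulative check flags it on the fifth turn. That gap is the one every multi-turn variant lives in.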

We ran live-fire chains using multi-turn patterns and walked through frontier model defenses in four turns. The Crescendo team’s Crescendomation tool automates the whole loop with an attacker LLM that adapts in real time. Single-turn defenses improve every quarter. Multi-turn attacks route around all of them.

The Red Team Toolbox: What Bounty Hunters Actually Load Up

Nobody testing Opus 4.7 for bounties is hand-typing prompts one at a time. The tooling stack has matured. Here’s what’s on the workstation.

PyRIT, the Python Risk Identification Tool, is Microsoft’s open-source framework and the de facto standard for orchestrating LLM attack suites. It automates Crescendo, TAP (Tree of Attacks with Pruning), multi-turn red teaming, and single-turn prompt batches. The memory system logs every interaction for later analysis, and the converter architecture lets you chain encoding transforms (Base64, ROT13, Unicode) before the prompt hits the target. PyRIT doesn’t just send prompts. It reads the model’s response, scores it, decides whether the jailbreak landed, and adapts the next turn. That’s the Crescendomation loop, productized.

Garak is NVIDIA’s broad-spectrum LLM vulnerability scanner. Think of it as nmap for language models. It ships with probe modules for DAN variants, encoding attacks, prompt injection, and data extraction. Point it at an API endpoint and it runs a sweep. The 2026 version supports agentic probing for multi-turn attack simulation. Garak’s value is coverage, not depth. You use it to find which families the model is weak against, then switch to PyRIT for the surgical follow-up.

Promptfoo is the CI/CD play. YAML config, CLI-first, plugs into GitHub Actions. You write test cases, including adversarial ones, run them against every model update, and regression test your safety layer the same way you’d regression test code. It ships 133 built-in plugins mapped to OWASP and MITRE ATLAS. If you’re an operator shipping models into production, Promptfoo catches the regressions before your users do.

The workflow: Garak sweeps for the broad attack surface. PyRIT runs the deep, adaptive multi-turn chains against whatever Garak flagged. Promptfoo sits in the pipeline and makes sure patches stay patched. Together, that’s a complete kill chain methodology for LLM red teaming.
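The hand-off in that workflow is a triage step: broad sweep results decide which families get deep testing and which only go into the regression suite. Below is a minimal sketch of that decision. It calls no real tool APIs. The `SweepResult` record, the `triage` function, the 5% cutoff, and the sample counts are all hypothetical stand-ins for whatever your own scan output looks like.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SweepResult:
    """Hypothetical summary of one attack family from a broad sweep."""
    family: str
    probes_run: int
    probes_failed: int  # probes where the safety layer did not hold

    @property
    def failure_rate(self) -> float:
        return self.probes_failed / self.probes_run if self.probes_run else 0.0


def triage(results: List[SweepResult], deep_dive_at: float = 0.05) -> Dict[str, List[str]]:
    """Bucket families by failure rate; the 5% cutoff is an assumed default."""
    plan: Dict[str, List[str]] = {"deep_dive": [], "regression_only": []}
    # Weakest families first, so the deep-dive list is already prioritized.
    for r in sorted(results, key=lambda r: r.failure_rate, reverse=True):
        bucket = "deep_dive" if r.failure_rate >= deep_dive_at else "regression_only"
        plan[bucket].append(r.family)
    return plan


# Made-up sweep numbers, for illustration only.
sweep = [
    SweepResult("persona", 200, 2),
    SweepResult("multi-turn", 50, 6),
    SweepResult("token-smuggling", 300, 21),
]
plan = triage(sweep)
print(plan)
```

With these sample numbers, multi-turn and token-smuggling land in the deep-dive bucket and persona goes to regression only. Everything that gets fixed after a deep dive should end up in the regression bucket too, so patches stay patched.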

The Mindset, the Bounty, and Why You Should Be Doing This

Here’s the difference between a script kiddie and a red teamer who cashes bounties. The reasoning loop.

The script kiddie pastes a DAN prompt from GitHub. It fails. They paste the next one. That fails too. They post on Reddit that Claude is “unbreakable” and move on.

The red teamer watches how the model refuses. A refusal that says “I can’t help with that” is different from one that says “I’d be happy to help with that in a different context.” The first is a hard block. The second is a safety classifier making a close call, and close calls are where the attack surface lives. The red teamer reads the refusal, identifies which family the model is weak against, adjusts the framing, and tries again. The prompt is the output. The reasoning loop is the weapon.

Anthropic knows this. That’s why they pay for it. The current bug bounty through HackerOne offers up to $15,000 for a verified universal jailbreak against their Constitutional Classifiers system. Universal means it works across a range of prompts and topics, not just one clever ask. The scope is CBRN and cybersecurity content behind their ASL-3 safeguards. Opus 4.7 just shipped with a brand new cyber classifier layered on top, which means the attack surface is fresh. The bounty hunters who move first have the richest target.

For context on what’s possible: Anthropic ran a public Constitutional Classifiers challenge in February 2025. 339 participants, over 300,000 chat interactions across eight levels of CBRN-gated questions. Four teams split $55,000. One cracked a universal jailbreak and walked away with $20,000. Another team beat all eight levels using multiple distinct jailbreaks for $10,000. The rest went to borderline universals and alternative bypass paths. Those jailbreaks got patched. The next version of the classifier got harder to break. That’s the game. You break it, you report it, you get paid, the model gets better, the next attacker has a worse day.
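The payout math above is quick to check. This uses only the figures stated in that paragraph; the variable names are ours, and nothing here is independently sourced.

```python
# Figures exactly as stated in the paragraph above.
total_paid = 55_000
universal_jailbreak = 20_000
all_levels_multiple_jailbreaks = 10_000

# What was left for the remaining two of the four teams combined.
remaining = total_paid - universal_jailbreak - all_levels_multiple_jailbreaks
print(remaining)  # 25000
```

So $25,000 went to the borderline universals and alternative bypass paths combined. The paragraph doesn’t say how that was divided between the remaining teams.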

The Templates and the Teeth

So that’s the taxonomy, the tooling, and the mindset. You know the five families. You know what’s dead and what’s current. You know what to load up and how to think about reading a model’s refusals.

Behind the wall, we hand you the red team toolkit. Each family gets a working prompt template with full structure and redacted targets. You’ll see a modern persona stack layered to survive 2026-era refusal training. Nested virtualization frames deep enough to slip past intent classifiers. A Crescendo sequence annotated turn by turn. Fragment concatenation, encoding chains, and the document frame many-shot variant that flies under length-based detectors.

Each template comes with the mindset annotation. What we’re looking for in the model’s response, how to read partial compliance, and when to pivot families. Plus a walkthrough of recent jailbreaks that had real teeth. Patched now, earned bounties, or walked out the door with 150 gigabytes of stolen data. You can see the architecture and learn from what worked last month. Show the chain, redact the payload. Same as always.

We dropped the free chapters. Now breach the wall for the red team toolkit that actually lands on frontier models.