AI Governance Frameworks in 2026: What Compliance Actually Requires

The EU AI Act hits its enforcement deadline this year, and NIST AI RMF and ISO 42001 are increasingly how buyers expect you to prove you’re ready. Here’s what they demand and where programs quietly fail.

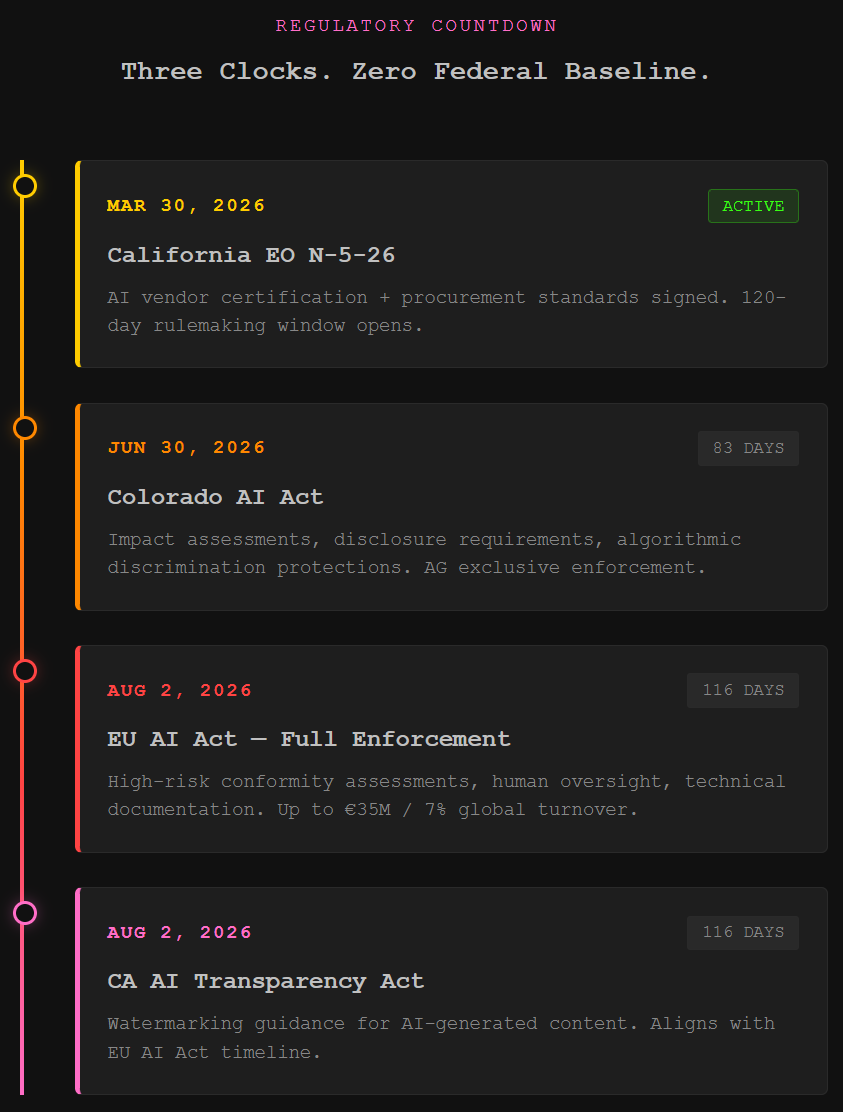

TL;DR: Three AI governance deadlines converge in 2026. The EU AI Act hits full enforcement August 2. Colorado’s AI Act takes effect June 30. California just issued a procurement executive order with teeth. Most enterprises have a policy document. Almost none have a working audit trail. Here’s what the frameworks actually require and exactly where programs break.


Why AI Governance Enforcement Hits Different in 2026

The EU AI Act reaches full enforcement August 2, 2026. High-risk AI systems, meaning anything touching employment decisions, critical infrastructure, education, or essential services, must have conformity assessments complete, human oversight mechanisms operational, and technical documentation ready for inspection. Penalties scale to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices; most other violations, including breaches of high-risk obligations, cap at €15 million or 3%. That applies to any organization selling into the EU market regardless of where HQ sits.
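To make the penalty ceiling concrete, here is a minimal sketch of the arithmetic. It assumes the higher of the two figures applies, which is how the Act’s penalty article reads for companies. The function name and the basis-point parameter are my own, and the defaults are the headline figures quoted above; other violation tiers use lower numbers, so pass those in as arguments.

```python
def fine_ceiling_eur(
    global_turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,   # headline fixed figure from the article
    turnover_bps: int = 700,           # 7% expressed in basis points
) -> int:
    """Return the maximum fine: the higher of the fixed cap and the turnover share.

    Assumption: "whichever is higher" applies (the rule for companies).
    Integer euros and basis points keep the arithmetic exact.
    """
    turnover_share = global_turnover_eur * turnover_bps // 10_000
    return max(fixed_cap_eur, turnover_share)


# A company with EUR 1 billion in turnover is exposed to the percentage figure,
# while a company with EUR 100 million is exposed to the fixed figure.
large = fine_ceiling_eur(1_000_000_000)
small = fine_ceiling_eur(100_000_000)
```

The crossover sits at €500 million in turnover for the headline tier. Below that, the fixed figure is the number that matters.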

Colorado’s AI Act takes effect June 30, 2026, after a bruising special session in August 2025 that collapsed every attempt at substantive reform and ended with legislators just changing the date. The law remains intact. Impact assessments, disclosure requirements, and algorithmic discrimination protections all go live as written. The Attorney General has exclusive enforcement authority.

Then California dropped a new procurement executive order on March 30, 2026, requiring AI vendor certifications covering content safety, bias safeguards, and civil rights protections for any company selling to the state. California is the nation’s largest state market for AI products. That makes its procurement standards a de facto national benchmark.

On the federal side, US agencies issued 59 AI-related regulations in 2024 alone, more than double the prior year. Congress still hasn’t passed a unified AI law, so the FTC, NIST, and the Department of Commerce keep filling the gap inside existing mandates. The White House released a “National Policy Framework for Artificial Intelligence” in March 2026 proposing state preemption, but that’s a recommendation to Congress, and Congress keeps stripping preemption provisions from bills.

Three overlapping regulatory clocks. Different definitions. Different jurisdictions. No unified federal baseline to rationalize any of it. For organizations already building AI security roles nobody can quite define yet, these are the frameworks those roles are supposed to operationalize.

What AI Governance Frameworks Actually Require

Three frameworks dominate enterprise compliance programs right now.

EU AI Act runs on risk classification. Unacceptable-risk systems are banned outright. High-risk systems require technical documentation proving how the model was built and validated, human oversight mechanisms that can intervene in production, and conformity assessments completed before deployment. The European Commission’s Digital Omnibus proposal could extend the high-risk deadline to December 2027. That’s a proposal in negotiation, and planning around a maybe will get you fined on the original timeline.
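Risk classification is easier to reason about as data than as a policy paragraph. The sketch below is illustrative only: the domain tags, the `AISystem` record, and the single prohibited-practice example are assumptions of mine, not the Act’s legal tests. It shows the shape of the check: tag each system, derive a tier, then list which of the three high-risk obligations named above still lack evidence.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    NEEDS_REVIEW = "needs_review"  # not matched by this sketch; a human decides


# Hypothetical tags based on the high-risk areas the article lists.
HIGH_RISK_DOMAINS = {"employment", "critical_infrastructure", "education", "essential_services"}
# Illustrative only; the real prohibited list is longer and legally defined.
PROHIBITED_PRACTICES = {"social_scoring"}
HIGH_RISK_OBLIGATIONS = ("technical_documentation", "human_oversight", "conformity_assessment")


@dataclass(frozen=True)
class AISystem:
    name: str
    domains: frozenset
    evidence: frozenset = frozenset()  # obligations with proof on file


def classify(system: AISystem) -> RiskTier:
    """Map a system's domain tags to a tier; prohibited practices win over high-risk."""
    if system.domains & PROHIBITED_PRACTICES:
        return RiskTier.UNACCEPTABLE
    if system.domains & HIGH_RISK_DOMAINS:
        return RiskTier.HIGH
    return RiskTier.NEEDS_REVIEW


def missing_obligations(system: AISystem) -> list:
    """List high-risk obligations with no evidence recorded, in a fixed order."""
    if classify(system) is not RiskTier.HIGH:
        return []
    return [o for o in HIGH_RISK_OBLIGATIONS if o not in system.evidence]


screener = AISystem(
    name="resume-screener",
    domains=frozenset({"employment"}),
    evidence=frozenset({"technical_documentation"}),
)
gaps = missing_obligations(screener)
```

Anything that falls through to `NEEDS_REVIEW` is a prompt for a human, not a clean bill of health.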

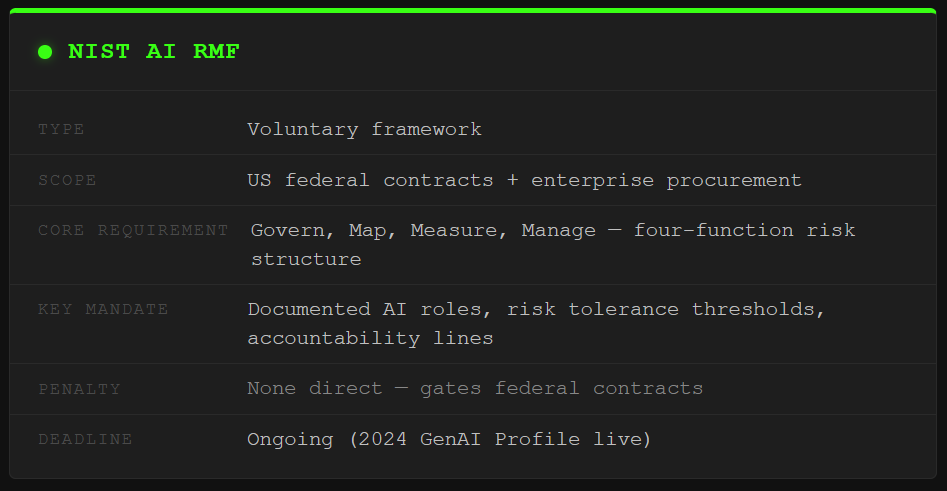

NIST AI RMF structures AI risk management across four functions: Govern, Map, Measure, and Manage. GOVERN is the chokepoint. It requires documented AI roles and ownership structures, explicit risk tolerance thresholds, and clear accountability lines for AI decisions. The 2024 Generative AI Profile extended coverage specifically to LLMs and agentic systems. NIST AI RMF carries no independent penalties, but federal contracts and procurement pipelines increasingly require demonstrated alignment with it. If you’re chasing government work, this is your compliance floor.

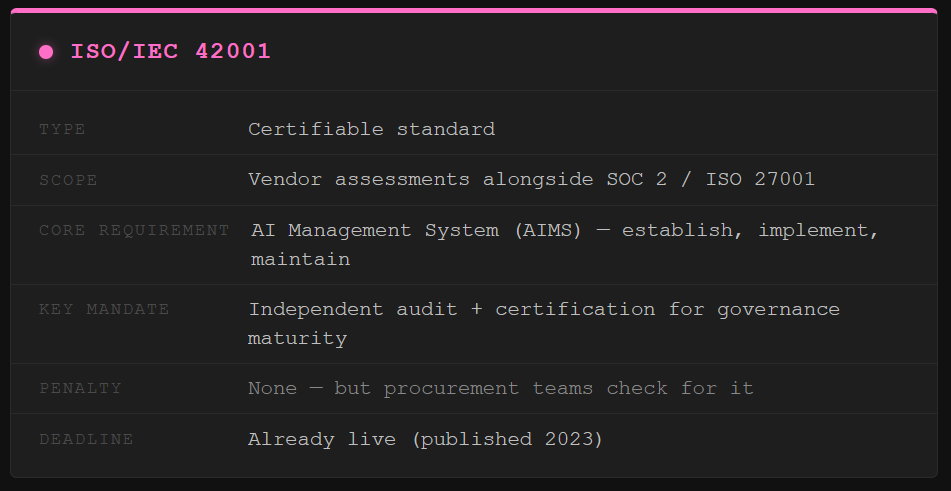

ISO/IEC 42001, the first certifiable AI management standard, is showing up in vendor assessments alongside SOC 2 and ISO 27001. Enterprise procurement teams check for it now. That signal only gets louder. If you’ve already mapped your AI supply chain security posture, this is the governance layer that sits on top.

Where AI Governance Programs Break in Practice

Writing a governance framework and operating one are different disciplines. The gap between them is where enforcement exposure lives.

The AI inventory problem. You can’t classify risk, assign oversight, or enforce logging on systems you haven’t catalogued. Shadow AI, tools employees run outside approved channels and outside any governance register, is a persistent reality in every enterprise. If the inventory is fiction, every control built on top of it is fiction too. And shadow AI is harder to catch than shadow IT ever was because the tools live in browser tabs on personal devices and look exactly like normal web browsing.
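One way to test whether the inventory is fiction is to reconcile it against something you can observe. The sketch below assumes two hypothetical inputs: a governance register keyed by service hostname, and a list of AI service hostnames pulled from proxy or DNS logs. Both the hostnames and the function name are made up for illustration. It only sees managed traffic, so personal devices stay invisible to it, which is exactly the blind spot described above.

```python
from typing import Dict, Iterable, List


def reconcile_inventory(register: Dict[str, str], observed: Iterable[str]) -> Dict[str, List[str]]:
    """Compare the governance register against observed AI service hostnames.

    Returns:
      shadow: seen in traffic but absent from the register
      stale:  registered but never seen in traffic (possibly retired or mislabeled)
    """
    registered = {host.strip().lower() for host in register}
    seen = {host.strip().lower() for host in observed}
    return {
        "shadow": sorted(seen - registered),
        "stale": sorted(registered - seen),
    }


# Hypothetical register: hostname -> owning team.
register = {
    "chat.example-llm.test": "support",
    "vision.example.test": "qa",
}
# Hypothetical hostnames extracted from proxy logs (duplicates and case noise included).
observed = ["Chat.example-llm.test", "notes-ai.example.test", "notes-ai.example.test"]

report = reconcile_inventory(register, observed)
```

The `stale` list matters as much as the `shadow` list: a register full of systems nobody uses is its own kind of fiction.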

The accountability gap. The EU AI Act requires “sufficient scientific personnel” with documented oversight responsibilities. NIST AI RMF GOVERN 2.1 requires documented roles, responsibilities, and lines of communication for managing AI risk. In practice, governance gets assigned to compliance teams who don’t know what a model card is and security teams who don’t have a policy mandate. Security thinks compliance owns model monitoring. Compliance thinks security owns it. Nobody gets an alert when inference goes sideways.

The audit trail gap. Governance frameworks promise logging of AI interactions, versioned model documentation, and traceable decision records. The policy exists. The actual pipeline from AI inference to your SIEM often doesn’t. Regulators don’t fine you for having a policy. They fine you when you can’t prove the controls ran. Same lesson we keep learning from vibe-coded applications shipping credentials in plaintext: if the check doesn’t run in production, it doesn’t count.
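“Prove the controls ran” usually comes down to records an auditor can check for tampering. Here is a minimal sketch of one common approach, a hash-chained log of inference events. None of the frameworks mandate this technique; the field names are assumptions, and shipping the records to a SIEM is left out. Each record embeds the hash of the one before it, so editing or deleting any entry breaks verification.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict) -> str:
    """Hash a record body using a canonical JSON encoding."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(log: List[Dict], *, model_id: str, model_version: str,
                  input_sha256: str, decision: str, timestamp: str) -> Dict:
    """Append one inference event, chained to the previous record's hash."""
    body = {
        "model_id": model_id,
        "model_version": model_version,
        "input_sha256": input_sha256,  # hash of the input, not the raw input
        "decision": decision,
        "timestamp": timestamp,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    record = {**body, "record_hash": _digest(body)}
    log.append(record)
    return record


def verify_chain(log: List[Dict]) -> bool:
    """Return True only if every record is intact and linked to its predecessor."""
    prev = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        if body.get("prev_hash") != prev or _digest(body) != record.get("record_hash"):
            return False
        prev = record["record_hash"]
    return True


audit_log: List[Dict] = []
append_record(audit_log, model_id="loan-scorer", model_version="1.4.2",
              input_sha256=hashlib.sha256(b"applicant-123").hexdigest(),
              decision="deny", timestamp="2026-01-15T09:30:00Z")
append_record(audit_log, model_id="loan-scorer", model_version="1.4.2",
              input_sha256=hashlib.sha256(b"applicant-124").hexdigest(),
              decision="approve", timestamp="2026-01-15T09:31:00Z")
chain_ok = verify_chain(audit_log)
```

Logging a hash of the input rather than the input itself is a deliberate choice in this sketch: it lets you prove which input produced a decision without turning the audit log into a second copy of sensitive data.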


Frequently Asked Questions

What does the EU AI Act require for high-risk AI systems?

High-risk AI systems under the EU AI Act must complete conformity assessments before deployment, maintain technical documentation covering model design and validation, implement human oversight mechanisms capable of real-time intervention, and establish comprehensive logging. Penalties under the Act reach €35 million or 7% of global annual turnover for prohibited practices, with a lower ceiling of €15 million or 3% for breaches of high-risk obligations. The law applies to any organization deploying or selling AI in the EU, regardless of headquarters location. Full enforcement begins August 2, 2026.

What is the NIST AI Risk Management Framework?

The NIST AI RMF is a structured framework organizing AI risk management across four functions: Govern, Map, Measure, and Manage. The GOVERN function requires documented ownership structures, risk tolerance thresholds, and explicit accountability for AI decisions. The 2024 Generative AI Profile extends coverage to LLMs and agentic systems. NIST AI RMF carries no independent legal penalties but increasingly gates federal contract eligibility and enterprise procurement decisions.

What should an AI governance program include at minimum?

A functioning AI governance program needs a complete inventory of all AI systems in the environment, risk classifications mapped to regulatory tiers, documented ownership with explicit decision accountability, audit logging connected to production systems rather than just described in policy, and a review cycle that keeps classifications current as deployments change. The policy is the starting point. The working implementation is the actual compliance requirement.
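That minimum list can be turned into a per-system gap check. The sketch below is one hypothetical way to do it: the `SystemRecord` fields and the 180-day review window are assumptions, not numbers from any framework. It takes the inventory as given (each record is one inventoried system) and reports which of the remaining components are missing.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class SystemRecord:
    name: str
    risk_tier: Optional[str]          # e.g. "high"; None if never classified
    owner: Optional[str]              # named person accountable for decisions
    logging_verified_in_prod: bool    # confirmed against production, not policy
    last_reviewed: Optional[date]     # date the classification was last checked


def program_gaps(record: SystemRecord, today: date,
                 max_review_age: timedelta = timedelta(days=180)) -> List[str]:
    """Return the governance components this system is missing, in a fixed order."""
    gaps = []
    if not record.risk_tier:
        gaps.append("no risk classification")
    if not record.owner:
        gaps.append("no accountable owner")
    if not record.logging_verified_in_prod:
        gaps.append("audit logging not verified in production")
    if record.last_reviewed is None or today - record.last_reviewed > max_review_age:
        gaps.append("review overdue")
    return gaps


record = SystemRecord(
    name="resume-screener",
    risk_tier="high",
    owner=None,
    logging_verified_in_prod=False,
    last_reviewed=date(2025, 1, 1),
)
found = program_gaps(record, today=date(2026, 1, 1))
```

An empty list from this check means the paperwork and the production state agree for that system, which is the thing a policy document alone cannot show.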

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.

now that i've published enforcement timelines for three separate regulatory deadlines, i fully expect all of them to get delayed by at least six months. you're welcome. consider this article a public service.

Buddy the audit trail gap you described is real…

so we built something about it.

Compliance Labs produces mechanistic interpretability audits of fine-tuned AI models — basically, we open the model, measure every internal feature the fine-tuning created, modified, or eliminated, and produce a signed technical document of what the model actually learned. Layer by layer. Statistically validated. PhD-signed and ready to file.

The thing your readers are going to hit: Article 13 doesn't just want a policy document. It wants technical documentation of what changed between your base model and your deployed version. Most teams have no idea how to produce that. We do.

Our methodology just went into NeurIPS 2026 review. One of our core findings is that output testing alone isn't enough — a model can show major internal reorganization while outputs look totally normal on standard evals. Neither measurement alone is sufficient. (We call it the two-measurement requirement, because naming things is fun.)

If you've got readers staring down August 2 with a fine-tuned model and no documentation — http://compliance-labs.ai. The math against €35M works out pretty fast.

Great breakdown as always. The shadow AI inventory problem is going to age like milk.