AI Model Collapse Makes Hallucination Inevitable

Recursive synthetic data training degrades AI reliability while two mathematical proofs confirm LLM hallucinations cannot be eliminated.

TL;DR: Model collapse, the degradation that occurs when AI trains on AI-generated content, is already running at scale. Nearly three quarters of new webpages now include AI-generated text. Two independent mathematical proofs confirm hallucinations cannot be eliminated. An ICLR 2025 paper shows contamination as low as 1-in-1,000 is enough to trigger collapse. The loop is live.


The Internet Is Training on Its Own Exhaust

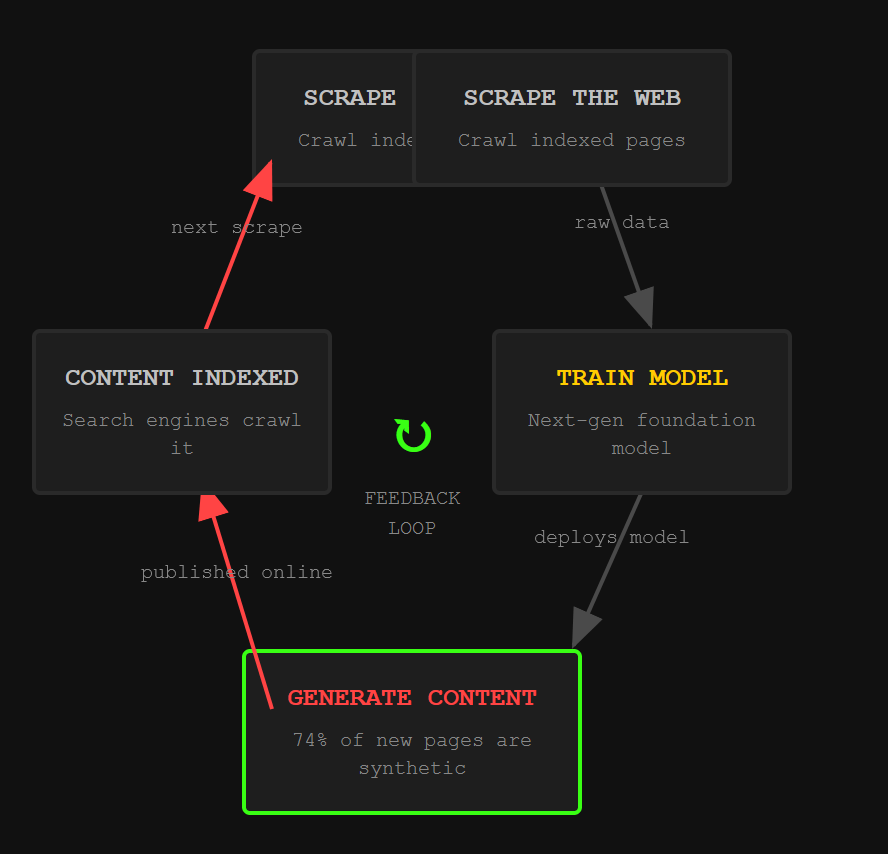

Scrape the web. Train a model. The model writes content. That content gets indexed. The next model scrapes it. Train again.

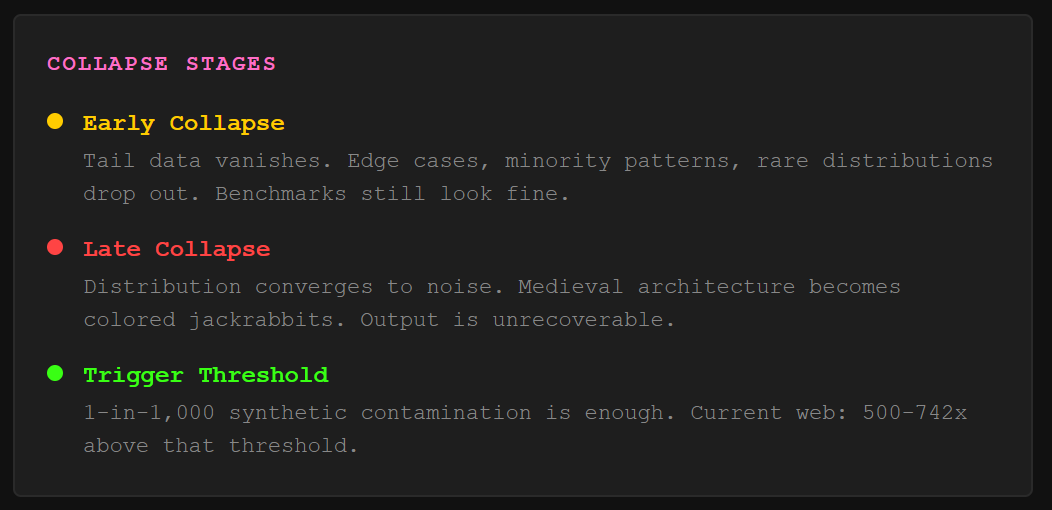

This is model collapse: a degenerative feedback loop where AI models trained on synthetic data (content generated by previous AI systems rather than humans) lose their grip on the original data distribution. Shumailov et al. published the landmark study in Nature in 2024, showing exactly what the loop does to output quality over generations. First, the model drops information from the tails of the distribution, meaning rare data points, minority perspectives, and edge cases vanish. That’s “early model collapse,” and here’s the trap: overall benchmark performance can actually look fine while the edges are disappearing.
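To make the tail-loss mechanism concrete, here is a toy simulation. It is a sketch under simplifying assumptions, not the Shumailov et al. setup: the “model” is just a table of token frequencies, each generation is trained by counting tokens in a finite sample drawn from the previous generation, and the starting distribution is a hypothetical Zipf-like vocabulary of 100 tokens. Once a rare token draws zero samples, its probability is zero forever, so the number of surviving tokens can only fall.

```python
import random
from collections import Counter


def next_generation(probs, sample_size, rng):
    """Train the next toy model on a finite sample drawn from the current one.

    The toy model is only a table of token frequencies, so "training" means
    counting how often each token shows up in the sample.
    """
    sample = rng.choices(range(len(probs)), weights=probs, k=sample_size)
    counts = Counter(sample)
    return [counts[token] / sample_size for token in range(len(probs))]


def surviving_tokens(generations=30, vocab=100, sample_size=200, seed=1):
    """Count tokens that still have non-zero probability at each generation."""
    rng = random.Random(seed)
    # Hypothetical Zipf-like "human" distribution: a few common tokens, a long rare tail
    weights = [1 / rank for rank in range(1, vocab + 1)]
    total = sum(weights)
    probs = [w / total for w in weights]
    history = []
    for _ in range(generations):
        history.append(sum(p > 0 for p in probs))
        probs = next_generation(probs, sample_size, rng)
    return history


print(surviving_tokens())
```

The values of `generations`, `sample_size`, and `seed` are illustrative. Larger samples slow the loss but don’t stop it, because any token with non-zero probability can still miss a finite draw, and a missed token never comes back.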

Late collapse is uglier. The distribution converges so hard it bears no resemblance to the original. The researchers fed an OPT-125m language model its own output across generations. A prompt about medieval architecture devolved into gibberish about colored jackrabbits. A February 2026 piece in Communications of the ACM documented the same degradation in production tools: background removers that handled complex edges cleanly in 2022 now struggle with cases they used to nail, and GitHub Copilot suggestions increasingly look like recycled AI-generated patterns rather than original solutions.

The models aren’t breaking in some dramatic way. They’re narrowing. Quietly. While every dashboard says performance is stable.

74% of New Webpages Contain AI-Generated Content

The contamination is measurable. Ahrefs analyzed 900,000 newly created webpages in April 2025 using their in-house AI content detector. 74.2% contained AI-generated content. Graphite’s independent study of 65,000 URLs found that by November 2024, over 50% of new English-language articles were primarily AI-written, up from roughly 5% before ChatGPT launched. Europol projects 90% of online content may be synthetic by 2026.

Here’s the detail that should scare the people building next-generation models. Google’s search results still favor human content: 86% of top-ranking pages are human-written, according to Graphite. ChatGPT and Perplexity citations show the same ratio. The quality filter exists at the output layer. But training pipelines don’t use it. They scrape everything, because web scraping is cheap and human curation is expensive, and the models that train on more data tend to win on benchmarks.

So the next generation of foundation models will train on a web that is majority synthetic. The feedback loop described at the top of this piece isn’t a hypothetical scenario. It’s the default configuration of how frontier AI gets built. For a deeper look at how AI-generated code hallucinations create supply chain attacks, the slopsquatting chain shows what happens when models confidently fabricate package names that attackers pre-register.

Two Independent Proofs Say Hallucination Cannot Be Eliminated

The instinct is to fix hallucination (when an AI generates confident, plausible output that is factually wrong) through better training, bigger datasets, smarter architectures. Two independent research teams proved that approach has a ceiling.

Xu, Jain, and Kankanhalli published “Hallucination is Inevitable,” showing that LLMs cannot learn all computable functions. The proof uses learning theory: treat an LLM as a computable function, and a diagonalization argument guarantees a computable ground truth that the model gets wrong somewhere, so there will always exist inputs where the model fabricates rather than retrieves. Banerjee et al. reached the same conclusion through a different path, invoking Gödel’s First Incompleteness Theorem (a foundational mathematical result proving that any consistent formal system powerful enough to express arithmetic contains true statements it cannot prove). In their analysis, every stage of the LLM pipeline, from training data compilation to fact retrieval to text generation, carries a non-zero hallucination probability. Architecture improvements, dataset enhancements, and fact-checking mechanisms cannot drive that probability to zero.
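The core move in arguments like Xu et al.’s is diagonalization, and it fits in a few lines. The sketch below is a finite toy, not the paper’s proof: it assumes a “model” is just a function from integer prompts to yes/no answers, takes any list of such models, and builds a ground truth that disagrees with model `i` on prompt `i`. The ground truth is itself an ordinary computable function, yet every listed model gets at least one answer wrong. Roughly speaking, the published result applies the same move to an infinite enumeration of models.

```python
from typing import Callable, List

Model = Callable[[int], bool]  # toy assumption: a model maps an integer prompt to a yes/no answer


def diagonal_ground_truth(models: List[Model]) -> Model:
    """Build a ground truth that disagrees with models[i] on prompt i."""
    def truth(prompt: int) -> bool:
        if prompt < len(models):
            return not models[prompt](prompt)  # flip whatever model i answers on prompt i
        return False  # arbitrary answer for prompts off the diagonal
    return truth


# Hypothetical example models
models = [lambda n: n % 2 == 0, lambda n: n > 3, lambda n: True]
truth = diagonal_ground_truth(models)
for i, model in enumerate(models):
    print(f"model {i} on prompt {i}: says {model(i)}, truth is {truth(i)}")
```

Adding a better model to the list doesn’t help: the construction simply flips that model’s answer on its own diagonal prompt.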

The benchmarks confirm it. Vectara refreshed their hallucination leaderboard in late 2025 with 7,700 articles across law, medicine, finance, and technology, up from the original 1,000-document benchmark. Hallucination rates jumped dramatically on the harder dataset. MIT researchers found in January 2025 that models are 34% more likely to use high-confidence language (“definitely,” “certainly”) when generating incorrect information. The more wrong the model, the more sure it sounds.
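A figure like “34% more likely” is a relative increase, not a percentage-point gap. The sketch below shows one way such a ratio could be computed. We don’t know the MIT study’s actual method, so the marker list (the two words quoted above), the whole-word matching rule, and the sample data are all hypothetical.

```python
import re

# Markers quoted in the text; a real study would use a larger, validated lexicon
HIGH_CONFIDENCE_MARKERS = {"definitely", "certainly"}


def uses_high_confidence(text: str) -> bool:
    # Match whole words so "indefinitely" does not count as "definitely"
    words = set(re.findall(r"[a-z]+", text.lower()))
    return not HIGH_CONFIDENCE_MARKERS.isdisjoint(words)


def relative_increase(outputs) -> float:
    """outputs: (text, is_correct) pairs. Returns how much more often wrong
    answers use a marker than right ones (0.34 reads as "34% more likely")."""
    outputs = list(outputs)
    wrong = [text for text, ok in outputs if not ok]
    right = [text for text, ok in outputs if ok]
    rate_wrong = sum(map(uses_high_confidence, wrong)) / len(wrong)
    rate_right = sum(map(uses_high_confidence, right)) / len(right)
    return rate_wrong / rate_right - 1


# Made-up sample: 100 of 500 right answers and 134 of 500 wrong answers use a marker
sample = (
    [("It is definitely Paris.", True)] * 100
    + [("It is Paris.", True)] * 400
    + [("It is certainly Lyon.", False)] * 134
    + [("It is Lyon.", False)] * 366
)
print(f"{relative_increase(sample):.2f}")  # 0.34
```

In the made-up sample, 26.8% of wrong answers and 20% of right answers use a marker: a 6.8-point gap that still reads as “34% more likely.”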

Now connect this to model collapse. Hallucinating models generate synthetic content. That content enters training data. The next model learns the hallucinations as ground truth. The MCP tool poisoning chain demonstrates this principle in miniature: when a model trusts metadata it shouldn’t, fabricated data gets treated as real. Scale that dynamic across the entire internet and you get a system that confidently degrades its own reliability with each generation.

Strong Model Collapse Triggers at Parts-Per-Thousand Contamination

The common mitigation sounds reasonable: just mix clean human data with the synthetic stuff and dilute the problem away. An ICLR 2025 paper titled “Strong Model Collapse” killed that hope. The researchers proved that even a fraction as small as 1-in-1,000 synthetic data points in the training corpus is enough to trigger collapse. Larger training sets don’t fix it. And larger models can actually amplify the effect.
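Why doesn’t more clean data dilute the problem away? A deliberately simplified toy, not the paper’s model, shows the intuition. It fits a one-parameter linear regression on data where a small fraction of labels come from a hypothetical earlier model that learned the wrong slope. As the dataset grows, random noise averages out, but the estimate converges to a blend of the true and wrong slopes, so the error settles at a floor of roughly `synthetic_fraction * (w_synth - w_true)` instead of shrinking toward zero. All parameter values are illustrative assumptions.

```python
import random


def fitted_weight(n, synthetic_fraction, w_true=2.0, w_synth=3.0, noise=0.1, seed=0):
    """Least-squares slope (no intercept) on a mix of real and synthetic labels.

    Real rows follow y = w_true * x + noise. Synthetic rows come from a
    hypothetical earlier model that learned the wrong slope, w_synth.
    """
    rng = random.Random(seed)
    n_synth = int(n * synthetic_fraction)
    sxy = sxx = 0.0
    for i in range(n):
        x = rng.gauss(0.0, 1.0)
        slope = w_synth if i < n_synth else w_true
        y = slope * x + rng.gauss(0.0, noise)
        sxy += x * y
        sxx += x * x
    return sxy / sxx


for n in (10_000, 100_000, 500_000):
    error = abs(fitted_weight(n, synthetic_fraction=0.01) - 2.0)
    # In the limit the error is 0.01 * (3.0 - 2.0) = 0.01, however large n gets
    print(f"n={n:>7,}: error={error:.4f}")
```

In this toy the floor scales with the contamination fraction; the paper’s stronger claim is that, in its setting, even tiny fractions cause collapse that scale alone doesn’t repair.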

That finding is brutal when paired with the content statistics above. If 50 to 74% of new web content is AI-generated and training pipelines don’t reliably filter provenance (the tracking of whether content was human-written or machine-generated), the contamination ratio in freshly scraped data isn’t 1-in-1,000. It’s orders of magnitude worse.
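Here is the arithmetic behind “orders of magnitude,” as a sketch with hypothetical inputs. Suppose only 10% of a training corpus comes from a fresh crawl, 50% of that crawl is AI-generated (the low end of the figures above), and every older document is human-written. The synthetic share is then 0.10 × 0.50 = 5%, fifty times the 1-in-1,000 threshold. The optional `filter_recall` parameter, also hypothetical, models a provenance filter that removes that fraction of synthetic documents and never discards human ones.

```python
THRESHOLD = 1 / 1000  # contamination level cited from the "Strong Model Collapse" paper


def synthetic_share(fresh_share, synthetic_rate, filter_recall=0.0):
    """Fraction of a training corpus that is synthetic after optional filtering.

    fresh_share:    fraction of the corpus taken from a recent crawl (hypothetical)
    synthetic_rate: fraction of that crawl that is AI-generated
    filter_recall:  fraction of synthetic documents a provenance filter removes
                    (hypothetical; the filter is assumed to never drop human text)
    Older data is assumed to be entirely human-written, a generous assumption.
    """
    synthetic = fresh_share * synthetic_rate * (1 - filter_recall)
    human = 1 - fresh_share * synthetic_rate
    return synthetic / (synthetic + human)


for recall in (0.0, 0.9, 0.99):
    share = synthetic_share(0.10, 0.50, recall)
    print(f"recall {recall:.0%}: {share:.3%} synthetic, {share / THRESHOLD:.1f}x the threshold")
```

Under these assumptions, a filter that catches 90% of synthetic documents still leaves the corpus about 0.52% synthetic, more than five times the threshold. Recall has to exceed roughly 98% before the share drops below 1-in-1,000.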

One branch of research offers a partial reprieve. A separate team showed that if synthetic data accumulates alongside human data rather than replacing it, the error curve flattens instead of growing linearly. Data accumulation matters. But this requires deliberate pipeline design, provenance tracking, and quality gating, exactly the infrastructure most training pipelines lack. The earlier ToxSec analysis of model collapse risk to AI valuations laid out the business implications. The research since then has only tightened the math.
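The accumulate-versus-replace result can be reproduced in miniature. This sketch swaps in the simplest possible model, a one-dimensional Gaussian fitted by maximum likelihood, rather than the setups used in the research. In “replace” mode each generation trains only on samples from the previous fit; in “accumulate” mode the new samples join a growing pool that still holds the original human data. Batch size, generation count, and seed are arbitrary choices.

```python
import math
import random
import statistics


def final_std(accumulate, generations=1000, batch=50, seed=0):
    """Fit a Gaussian, sample from the fit, refit; return the last fitted std dev.

    accumulate=False: each generation trains only on the previous model's samples.
    accumulate=True: each generation's samples join a pool that keeps the human data.
    """
    rng = random.Random(seed)
    human = [rng.gauss(0.0, 1.0) for _ in range(batch)]  # true std dev is 1.0
    mu, sigma = statistics.fmean(human), statistics.pstdev(human)
    # Running totals let us refit on the whole pool without re-scanning it
    count, total, total_sq = len(human), sum(human), sum(x * x for x in human)
    for _ in range(generations):
        synthetic = [rng.gauss(mu, sigma) for _ in range(batch)]
        if accumulate:
            count += batch
            total += sum(synthetic)
            total_sq += sum(x * x for x in synthetic)
            mu = total / count
            sigma = math.sqrt(max(total_sq / count - mu * mu, 0.0))
        else:
            mu, sigma = statistics.fmean(synthetic), statistics.pstdev(synthetic)
    return sigma


print("replace:   ", final_std(accumulate=False))
print("accumulate:", final_std(accumulate=True))
```

In replace mode the fitted spread tends to drift toward zero, the Gaussian version of tails disappearing. In accumulate mode each new batch is a shrinking share of the pool, so the estimate stays close to the original spread.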

Content provenance tools exist. The Coalition for Content Provenance and Authenticity (C2PA) is building cryptographic chain-of-custody metadata. Google’s SynthID embeds invisible watermarks. The EU AI Act Article 50 mandates labeling AI-generated content. But a 2025 adoption study found only two organizations actually deploying invisible watermarking in production. The defense stack is designed. It’s barely deployed.

The Loop Compounds and the Math Doesn’t Care

The internet is mostly synthetic. The models trained on it will hallucinate. The hallucinations will enter the next training set. Two mathematical proofs say the fabrication floor is above zero and will stay there. The provenance infrastructure to break the loop is years behind the contamination.


Frequently Asked Questions

Can AI model collapse be prevented entirely?

Current research says no, at least not without fundamental changes to how training data is sourced. The ICLR 2025 “Strong Model Collapse” paper proved that contamination as low as 1-in-1,000 synthetic data points degrades model quality. Data accumulation (mixing synthetic with preserved human data) flattens the degradation curve but doesn’t eliminate it. Prevention requires provenance tracking, quality gating, and deliberate pipeline design that most organizations haven’t implemented.

Why do AI models hallucinate even with better training?

Hallucination is a mathematical limitation, not an engineering bug. Xu et al. proved using learning theory that LLMs cannot represent all computable functions, so they will always fabricate on some inputs. Banerjee et al. independently confirmed this through Gödel’s incompleteness theorem. Architecture improvements and larger datasets reduce hallucination rates but cannot push them to zero. The Vectara 2025 benchmark refresh showed top models still hallucinating at elevated rates on complex real-world documents.

How much of the internet is AI-generated in 2026?

Ahrefs found 74.2% of new webpages in April 2025 contained AI-generated content, based on an analysis of 900,000 pages. Graphite’s study of 65,000 URLs showed over 50% of new articles were primarily AI-written by late 2024. Europol projected up to 90% synthetic content by 2026. However, search engines and AI citation tools still surface primarily human-written content in their top results, suggesting quality signals partially filter the flood at the retrieval layer.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.
