The Dead Internet Is No Longer a Theory

How 51% automated traffic, 74% AI-generated pages, and coin-flip detection accuracy killed the authentic web

TL;DR: Bots crossed 51% of web traffic in 2024. Three-quarters of new pages contain AI content. Humans detect synthetic text at coin-flip accuracy. The authenticity problem is measurable, accelerating, and compounding through model collapse. Here’s what the data actually says.


How Bad Is Bot Traffic in 2025?

The Imperva 2025 Bad Bot Report dropped a number that should have made more noise: 51% of all web traffic in 2024 was automated. Humans became the minority online for the first time in a decade. “Bad bots” alone, meaning systems built for scraping, credential stuffing, impersonation, and spam, accounted for 37% of all traffic. That’s the sixth consecutive year of bad bot growth, and Imperva blocked 13 trillion bad bot requests across thousands of domains in 2024 alone.

The content side tracks the same curve. Graphite analyzed 65,000 web articles through May 2025 and found 50.3% of newly published articles were primarily AI-generated. Before ChatGPT launched in late 2022, that number was 5%. Ahrefs ran a separate crawl of 900,000 new pages from April 2025 using a broader measure, pages that contain AI-generated content at all, and landed on 74.2%. Only 2.5% was pure slop with zero human editing. The rest lives in a gray zone of human-AI hybrid content that makes classification almost meaningless.

The dead internet theory first surfaced on forums around 2021. The conspiratorial framing (governments orchestrating all of it) remains unproven. The core empirical claim, that bots and synthetic content dominate the web, is just observable data at this point.

Why Can’t Anyone Detect AI-Generated Text?

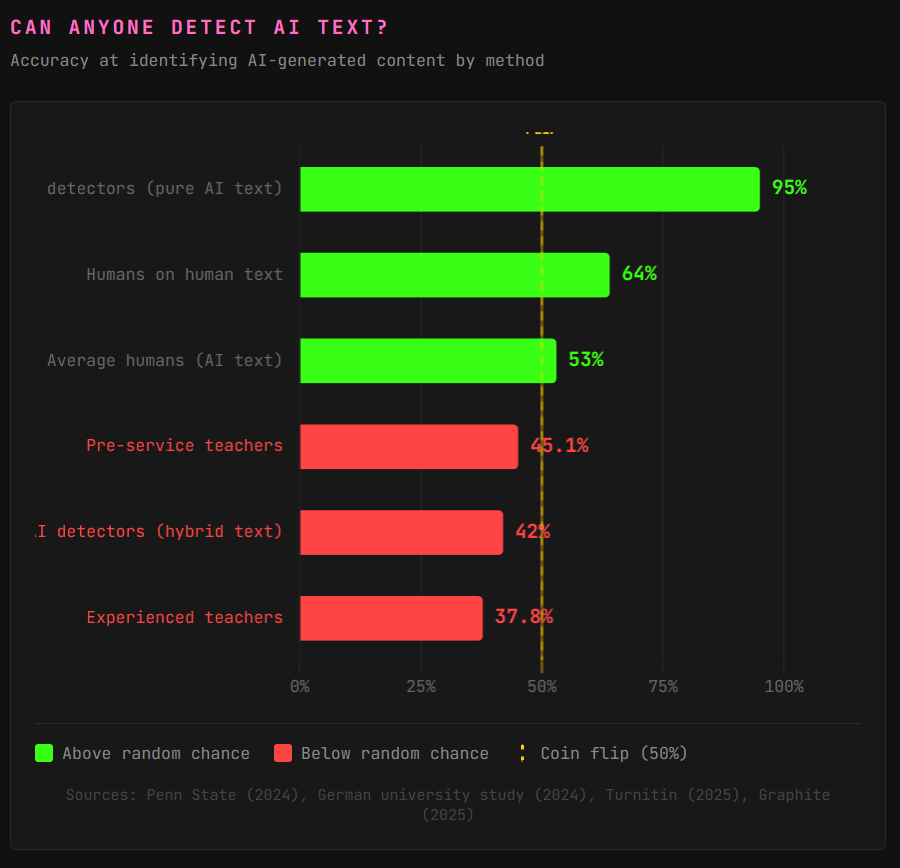

Penn State researchers found humans correctly identify AI-generated text about 53% of the time. Random guessing gets 50%. A German university study landed on similar numbers: 57% accuracy on AI texts, 64% on human texts. Professional-level AI writing? Fewer than 20% of participants classified it correctly.

Teachers, the people you’d expect to be best at this, fared worse. Pre-service teachers correctly identified 45.1% of AI-generated essays. Experienced teachers hit 37.8%. Both figures are below random chance. Confidence didn’t help either: even when participants felt sure, they were frequently wrong. The models were trained on human writing, so the better the model gets, the better the mimicry, and the worse human detection performs.

Detection tools sound better on paper. The best ones claim 90-99% accuracy on unmodified AI text, and Turnitin reports 1-2% false positive rates. But on hybrid content, where a human edits AI output, accuracy craters to the 20-63% range. AI humanizer tools (software that rewrites AI text until detectors can’t flag it) exist as commercial products. Paraphrasing alone drops detection accuracy by 20% or more. The generators will keep outpacing the detectors because the attack surface is asymmetric: defenders must catch everything, while attackers only need to evade once.
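To make those ranges concrete, here is a rough back-of-the-envelope sketch in Python. Everything specific in it is an assumption for illustration: the batch of 1,000 documents, the 20% AI share, and the choice to treat the quoted "accuracy" figures as the share of AI text a detector actually flags. The function name `detector_outcomes` is hypothetical, not part of any vendor's tooling.

```python
def detector_outcomes(n_docs, ai_share, detection_rate, false_positive_rate):
    """Split a batch of documents into caught, missed, and wrongly flagged."""
    ai_docs = n_docs * ai_share
    human_docs = n_docs - ai_docs
    caught = ai_docs * detection_rate               # AI text correctly flagged
    missed = ai_docs - caught                       # AI text that slips through
    false_flags = human_docs * false_positive_rate  # human text wrongly flagged
    flagged = caught + false_flags
    precision = caught / flagged if flagged else 0.0  # share of flags that are right
    return {
        "caught": caught,
        "missed": missed,
        "false_flags": false_flags,
        "precision": precision,
    }


# Hypothetical batch: 1,000 documents, 20% AI-written (assumed, not measured).
scenarios = {
    "unmodified AI text": 0.95,  # inside the claimed 90-99% range
    "hybrid, upper end": 0.63,   # top of the 20-63% range
    "hybrid, lower end": 0.20,   # bottom of the 20-63% range
}
for label, rate in scenarios.items():
    result = detector_outcomes(1000, 0.20, rate, false_positive_rate=0.02)
    print(label, {key: round(value, 2) for key, value in result.items()})
```

Under these assumed inputs, the low end of the hybrid range means 160 of 200 AI documents slip through while 16 human authors still get flagged. Change the AI share and the picture shifts, which is the point: a headline accuracy number says little without the mix of content behind it.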

Model Collapse Turns the Dead Internet Into a Feedback Loop

Here’s where the problem goes recursive. A 2024 Nature study by Shumailov et al. demonstrated that AI models trained on AI-generated data suffer “irreversible defects.” They called it model collapse: each generation of a model trained on its predecessor’s output loses information from the tails of its training distribution. Rare patterns vanish first. Outputs converge toward a bland, homogenized average. Researchers sometimes call this “Habsburg AI,” a reference to what happens when a gene pool closes.
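The tail-loss mechanism is easy to see in a toy simulation. The sketch below is not the setup from the Nature paper. It is a minimal stand-in that assumes a "model" is nothing more than a table of token frequencies, and that each generation is fit only to a finite sample drawn from the previous one. The vocabulary size, sample size, and the function name `simulate_collapse` are all illustrative choices.

```python
import random
from collections import Counter


def simulate_collapse(vocab_size=1000, sample_size=2000, generations=10, seed=0):
    """Return the number of distinct tokens that survive after each generation."""
    rng = random.Random(seed)
    tokens = list(range(vocab_size))
    # Generation 0: a Zipf-like "human" distribution with a long tail of rare tokens.
    weights = [1.0 / (rank + 1) for rank in range(vocab_size)]
    surviving = [vocab_size]
    for _ in range(generations):
        # The current model publishes a finite corpus...
        corpus = rng.choices(tokens, weights=weights, k=sample_size)
        counts = Counter(corpus)
        # ...and the next model is fit only to that corpus (empirical frequencies).
        tokens = list(counts.keys())
        weights = [counts[token] for token in tokens]
        surviving.append(len(tokens))
    return surviving


history = simulate_collapse()
print(history)  # distinct tokens remaining, generation by generation
```

A token that fails to appear in one generation's corpus gets a frequency of zero and can never be sampled again, so the count of surviving tokens can only fall or hold steady. The rare tokens go first because they are the least likely to show up in any finite sample, which mirrors the "rare patterns vanish first" behavior described above.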

The web is the primary training corpus for large language models (LLMs: the AI systems behind ChatGPT, Claude, and similar tools). As synthetic content floods the web, it enters the next model’s training data. That model produces more synthetic content. That content enters the next training cycle. The snake eats its own tail.

There’s a counterpoint worth tracking. Graphite found that 86% of articles ranking in Google’s top results are still human-written, and 82% of content cited by ChatGPT and Perplexity comes from human authors. Search engines and AI assistants are filtering toward quality for now. But that filter depends on distinguishing human from synthetic, and the detection data above shows that capability is degrading. In September 2025, OpenAI CEO Sam Altman posted on X that he “never took the dead internet theory that seriously” until he noticed how many LLM-run accounts populated the platform. When the CEO of the company making the bots notices, that’s the signal.


Frequently Asked Questions

Where does the “90% AI content by 2026” prediction come from?

The Europol Innovation Lab published a 2022 report stating experts estimated 90% of online content could be synthetically generated by 2026. Reason Magazine traced that number to its source: Nina Schick’s 2020 book, which cited one person, Victor Riparbelli, CEO of Synthesia, a company that sells synthetic media. One executive with a direct financial interest in hyping synthetic content, laundered through a book, into a law enforcement report, into every conference deck since. The trajectory of AI content growth is real and measurable, but that specific 90% number rests on thinner sourcing than most people realize.

Can search engines filter out AI-generated content?

Partially, for now. Graphite’s 2025 data shows 86% of top-ranking Google results are still human-authored, and AI-generated articles tend to rank lower when compared against human content targeting the same keywords. Google’s 2025 Search Quality Rater Guidelines instruct raters to apply the lowest quality score when a page is almost entirely AI-generated. But filtering requires detection, and detection accuracy degrades every time models improve. The gap between what gets published and what ranks is a temporary buffer.

Is the dead internet theory actually proven?

The conspiratorial version, that governments secretly orchestrated the takeover of the internet with bots starting around 2016, has no supporting evidence. The empirical version, that bots and AI-generated content now constitute the majority of web traffic and newly published pages, is backed by independent datasets from Imperva, Graphite, and Ahrefs. The theory’s name oversells it. The data underneath does not.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.
