What is Slopsquatting? AI Hallucinations Ship Malware

Attackers pre-register the fake package names AI coding tools invent, then wait for the copy-paste. slopcheck blocks it at the install boundary.

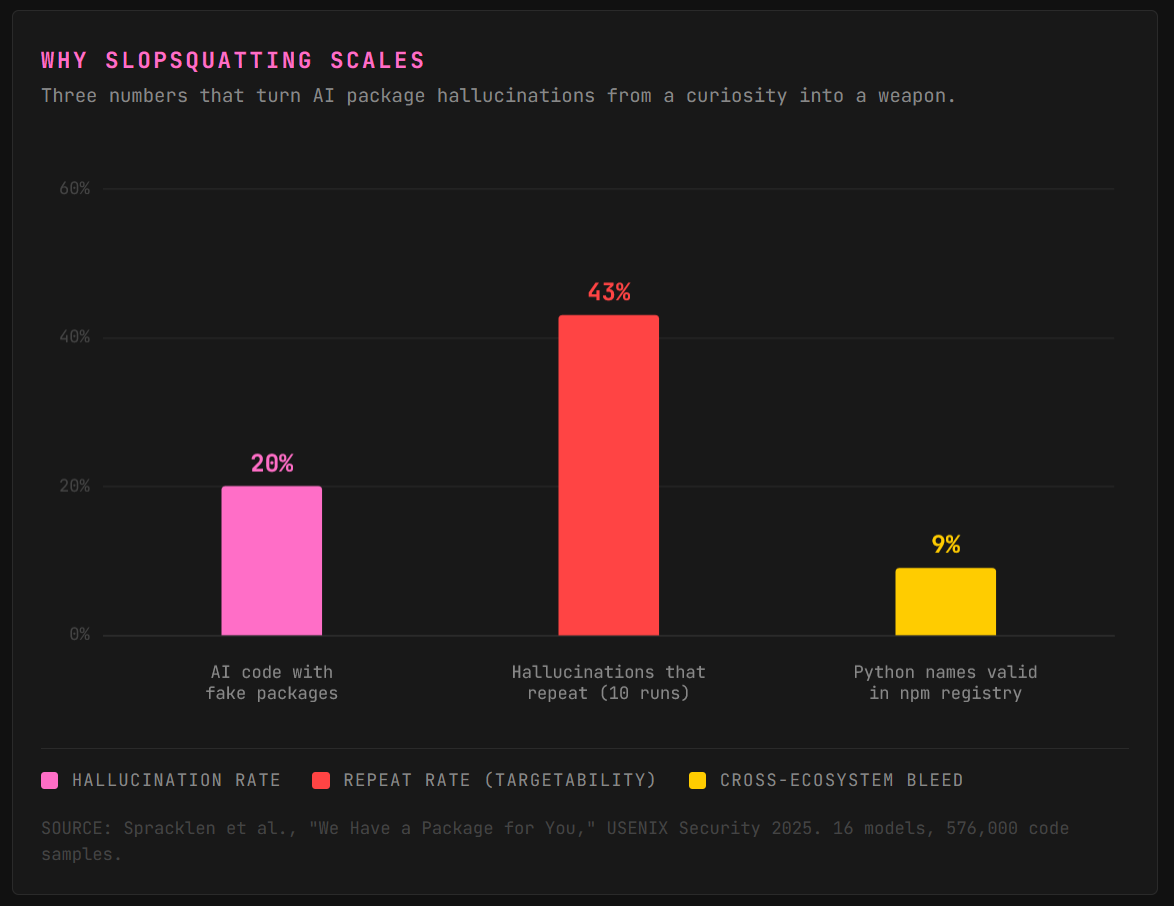

TL;DR: AI coding assistants recommend packages that don’t exist. Attackers claim those hallucinated names on PyPI and npm, load them with malware, and wait for the copy-paste. Nearly 20% of AI-generated code samples reference fake packages. 43% of those fakes repeat on every single run. The attack surface is predictable, scalable, and already burning through the wild. slopcheck blocks it at the install boundary.

Karen Spinner is joining me for this one. She’s taking slopcheck out for a spin and showing you what it looks like from the chair of someone who codes with AI assistants daily. Her two sections live inside the piece. I handle the attack chain.

What Is Slopsquatting

Package managers are the plumbing nobody wants to write twice. You run pip install something, the package drops into your project, off you go. The whole ecosystem runs on trust: you type a name, you get the code, you ship.

Now wire an AI coding assistant into the workflow. You ask Claude or Copilot for code that talks to a new API. It spits out pip install huggingface-cli alongside a working snippet. Most devs trust the recommendation. They run the command.

Here’s the problem. The AI never checked whether that package exists on the registry. It predicted a plausible-sounding name from statistical patterns in its training data. Sometimes the name is real. Sometimes it’s a ghost.

Slopsquatting is what happens when an attacker claims that ghost first. Register the hallucinated name on the public registry. Wire up a functional-looking README and version history. Drop a malicious install hook into the setup script. Wait.

The dev who copy-pastes the AI’s install command runs the attacker’s payload the moment pip install finishes. Seth Larson of the Python Software Foundation named the attack in April 2025. Slop, as in low-quality AI output. Squatting, as in claiming a name for hostile purposes. It sits inside a broader pattern of AI coding tool failures we’ve already walked through, alongside hardcoded secrets and broken auth.

Why AI Coding Tools Hallucinate Packages

Typosquatting waits for a human to mistype a name. The attacker registers `reqeusts`, hopes someone fat-fingers the real one, and lives off the misfires. Slopsquatting skips the human error entirely. The AI generates the mistake, the attacker harvests it.

Researchers tested sixteen code-generating models across 576,000 samples in the 2025 USENIX Security paper We Have a Package for You. Nearly 20% of AI-generated code referenced packages that don’t exist. The fakes broke into three patterns: real packages mashed together (think express-mongoose), typo variants of real names, and pure fabrications. Over 205,000 unique hallucinated package names across all runs. That’s a shopping list.

Here’s the part that turns this from a curiosity into a weapon. Same prompt, ten runs, same model: 43% of hallucinated names appeared on every single run. An attacker doesn’t need to guess. Run a few dozen prompts against a popular model, harvest the names that keep showing up, register them on PyPI or npm before anyone else. The hallucinations are targetable.
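To make the repeatability number concrete, here is a minimal sketch of how you might measure it on your own prompts. It assumes you have already collected the set of nonexistent package names from each run of the same prompt; the function names are hypothetical, and this is not the paper’s methodology, just the same idea in a few lines.

```python
def always_repeated(runs: list[set[str]]) -> set[str]:
    """Names that appear in every single run of the same prompt."""
    if not runs:
        return set()
    return set.intersection(*runs)


def repeat_rate(runs: list[set[str]]) -> float:
    """Share of all distinct hallucinated names that showed up on every run."""
    seen = set().union(*runs)
    if not seen:
        return 0.0
    return len(always_repeated(runs)) / len(seen)
```

Defenders can run the same measurement: the names that survive every run are the ones worth checking against the registry first.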

Cross-ecosystem bleed makes it worse. Almost 9% of Python names the models hallucinated turned out to be valid JavaScript packages, and vice versa. A model thinks it’s recommending a Python library, names something that exists only in npm, and the dev runs pip install on a ghost. Free opening in the wrong registry.

This already works outside the lab. Researcher Bar Lanyado registered huggingface-cli as an empty package on PyPI after watching GPT recommend it. 30,000 downloads in three months. Alibaba copy-pasted the fake install command straight into a public repo’s README.

In January 2026, a hallucinated npm package called react-codeshift spread through 237 repositories via AI-generated agent skill files with nobody deliberately planting it. Slopsquatting now sits alongside model distillation raids and indirect prompt injection as one of the three attack vectors carving through the 2026 AI stack. Both test cases above were caught by researchers. Next time, maybe not.

Vibe coding makes the blast radius worse. Hand the entire dependency list to the model with fewer eyes on verification, and every hallucinated name is a live wire. Higher temperature pushes hallucination rates up. Creative means more slop.

Ghost packages are just one failure mode among many. Hardcoded secrets in AI-generated code ship the credentials. The registry is the next door over.

So what do you actually do about Slopsquatting?

That’s where slopcheck comes in. It’s an open-source CLI I built to sit at the install boundary and check every dependency name against the real registry before pip or npm ever fires. If the package doesn’t exist, it blocks. If it looks sketchy (brand new, zero downloads, hallucination-pattern naming), it flags. If it’s clean, it lets you through. Seven ecosystems, runs in under a second, MIT licensed.

Full technical breakdown is coming up after Karen’s section. But first, she took it for a spin on her own projects. Here’s what that looked like from the chair of someone who actually has to trust the install command.

Karen Spinner, taking slopcheck for a spin:

Catching AI Package Hallucinations Before They Bite

When I use vibe coding tools like Claude Code, my overall approach is “trust but verify.” I personally look at the code and make sure I know what it’s doing before I ship it. And I always keep security in mind as I build.

Coding agents are designed to do what’s fast and expedient, not necessarily what’s best for you and your users. And slopsquatting exploits this behavior. If AI agents looked up package names instead of guessing, it wouldn’t exist.

But since it does exist, the best approach is to check package names before AI installs them in your project. Doing this manually can be a hassle and force you to switch context in the middle of your building session.

Chris’ slopcheck tool is a convenient way to automate this process. It reads your dependency files as text and checks each package against the real registries over HTTP.
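For a feel of what “checking against the real registry over HTTP” can look like, here is a rough sketch for PyPI only. It uses PyPI’s public JSON endpoint, which answers 200 for a project that exists and 404 for one that doesn’t. The helper names are my own and this is not slopcheck’s actual code; network failures other than HTTP errors are left to raise.

```python
import urllib.error
import urllib.request

# PyPI's public JSON endpoint for a project.
PYPI_URL = "https://pypi.org/pypi/{name}/json"


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for a GET request to url."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 404 and other HTTP errors arrive as exceptions; keep the code.
        return err.code


def exists_on_pypi(name: str, fetch_status=http_status) -> bool:
    """True if the registry knows the name. fetch_status is injectable for tests."""
    return fetch_status(PYPI_URL.format(name=name)) == 200
```

Passing the fetcher in as a parameter keeps the check testable without touching the network.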

Setting it up

While slopcheck is a Python CLI, it scans across ecosystems: PyPI, npm, crates.io, Go, RubyGems, Maven, and Packagist. I installed it in one of my Python virtual environments in about ten seconds:

pip install slopcheck

Running it on a production project

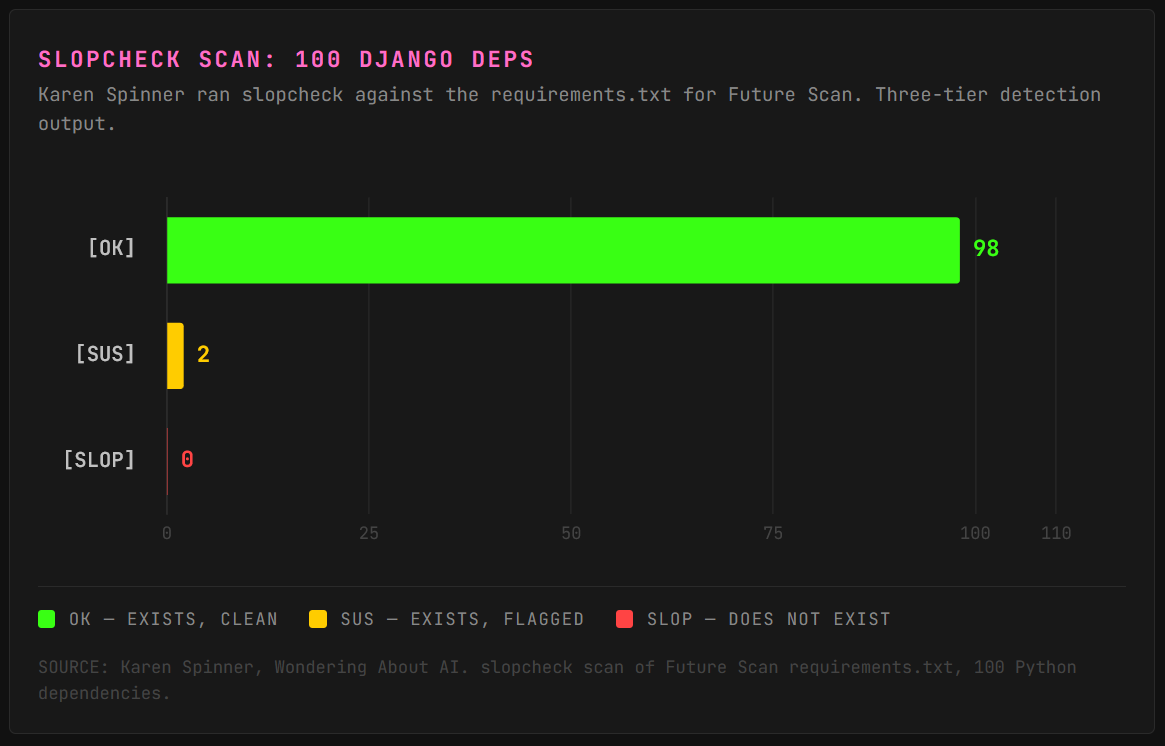

I pointed it at the requirements.txt for Future Scan, a Django project I maintain which includes 100 Python dependencies, a mix of hand-picked packages and transitive deps. The command I used was:

slopcheck scan requirements.txt

It checked all 100 packages in parallel against PyPI and came back in a few seconds. The output is color-coded and easy to scan:

[OK] — Package exists, looks legitimate. 98 of my 100 deps got this.

[SUS] — Package exists but something about it raised a flag. I got two of these.

[SLOP] — Package doesn’t exist in the registry at all. This is the real danger zone; if an LLM told you to install it, someone could register malware under that name tomorrow. (I didn’t get any of these on this project, which was reassuring.)

The false positives were easy to sort out

Both of my [SUS] flags were Levenshtein near-misses. Slopcheck thought they might be typosquats of more popular packages:

hiredis got flagged as suspiciously close to redis:

[SUS] hiredis (pypi)

> Suspiciously close to 'redis'. Could be a typosquat.

? Did you mean: redis

numba got flagged as suspiciously close to numpy:

[SUS] numba (pypi)

> Suspiciously close to 'numpy'. Could be a typosquat.

? Did you mean: numpy

Both are completely legitimate: hiredis is the official C parser for redis-py, and numba is Anaconda’s JIT compiler with tens of millions of monthly downloads.

It also added informational notes on packages like python-dateutil and python-dotenv, calling out the python-* prefix as a “classic LLM naming pattern” but acknowledging both are established.

Did I use it again?

As you can see in the demo, I used it to check my package.json file in CarouselBot, a React project.

I’ve also added a note for Claude to run slopcheck before it installs new packages and alert me to anything, well, SUS.

One more hassle I can cross off my list!

More from Karen. Wondering About AI covers agentic tools from the builder’s chair. Subscribe for the user-side perspective security folks keep forgetting exists.

Back to Tox.

How slopcheck Catches Hallucinated Packages

slopcheck is a free, open-source CLI that queries every dependency in your project against the live package registry before anything touches your environment. Seven ecosystems out of the box: PyPI, npm, crates.io, Go modules, RubyGems, Maven and Gradle, and Packagist.

# one and done

pip install slopcheck && slopcheck init

The detection logic layers multiple signals instead of trusting a single flag:

[SLOP] is the hard block. The name doesn’t resolve on the registry at all. Do not install.

[SUS] is the yellow light. The package exists but the profile is off: registered in the last seven days, fewer than 100 total downloads, hallucination-pattern naming like `{popular-lib}-helper` or `{real-pkg}-utils`, or no source repository link. Look before you install.

[OK] is clean. Established, downloaded, linked to a real repo.
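The three verdicts can be sketched as a small scoring function. This is an illustration of the layered-signal idea under stated assumptions, not slopcheck’s source: the `PackageInfo` fields, the `classify` name, and the simplified suffix regex (which flags any name ending in -helper or -utils) are all hypothetical. The seven-day and 100-download thresholds come from the description above.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class PackageInfo:
    """Hypothetical registry metadata for one dependency."""
    name: str
    exists: bool
    first_release: Optional[datetime] = None
    total_downloads: Optional[int] = None
    repo_url: Optional[str] = None


# Simplified stand-in for "hallucination-pattern naming".
SUSPECT_SUFFIX = re.compile(r".+-(helper|utils)$")


def classify(pkg: PackageInfo, now: Optional[datetime] = None):
    """Return (verdict, reasons) where verdict is SLOP, SUS, or OK."""
    now = now or datetime.now(timezone.utc)
    if not pkg.exists:
        return "SLOP", ["name does not resolve on the registry"]
    reasons = []
    if pkg.first_release is not None and now - pkg.first_release < timedelta(days=7):
        reasons.append("registered in the last seven days")
    if pkg.total_downloads is not None and pkg.total_downloads < 100:
        reasons.append("fewer than 100 total downloads")
    if SUSPECT_SUFFIX.match(pkg.name):
        reasons.append("hallucination-pattern naming")
    if not pkg.repo_url:
        reasons.append("no source repository link")
    return ("SUS" if reasons else "OK"), reasons
```

Returning the reasons alongside the verdict is what lets a human sort a false positive from a real problem in seconds.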

slopcheck also runs a Levenshtein distance check against the most popular packages in each ecosystem, which catches classic typosquats with a “did you mean?” correction. Someone aims for requests, gets `reqeusts`, slopcheck flags it before pip runs.
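Here is a minimal sketch of that kind of edit-distance check. The `POPULAR` list and the distance threshold of 2 are assumptions for illustration; the real tool’s list and cutoff may differ.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic edit distance: insertions, deletions, substitutions."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


# Hypothetical stand-in for "the most popular packages in each ecosystem".
POPULAR = ("requests", "redis", "numpy")


def did_you_mean(name: str, popular=POPULAR, max_distance: int = 2):
    """Return the closest popular name if it is suspiciously near, else None."""
    if name in popular:
        return None
    best = min(popular, key=lambda p: levenshtein(name, p))
    return best if levenshtein(name, best) <= max_distance else None
```

With a threshold of 2, this flags `reqeusts` against requests, and it also explains Karen’s false positives: hiredis sits two edits from redis, and numba sits two edits from numpy.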

The modes that matter day to day:

# auto-detect every dep file in the project

slopcheck .

# safe install: verify first, only clean deps reach pip

slopcheck install flask requests sketchy-package

# auto-remove hallucinated packages from dep files

slopcheck . --fix

# pre-commit git hook that blocks slop before every commit

slopcheck init

Safe install mode wraps your real package manager. It checks every name, blocks anything flagged as slop, skips suspicious packages unless you pass --force, and only hands the clean list to pip or npm once the gate is clear. The --fix flag auto-removes hallucinated packages from your dep files, commenting them out with # [slopcheck] removed: so the kill history stays visible in the diff.
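As a rough picture of what the --fix behavior amounts to for a requirements.txt, here is a sketch that comments out flagged lines instead of deleting them. The marker prefix comes from the description above; everything after the colon, the function names, and the name-matching regex are assumptions on my part. Name comparison uses the standard PyPI normalization (lowercase, runs of `-`, `_`, `.` collapsed to `-`).

```python
import re

MARKER = "# [slopcheck] removed: "
# Leading project name on a requirements.txt line, before any version specifier.
NAME_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """PyPI-style name normalization so Foo_Bar and foo-bar compare equal."""
    return re.sub(r"[-_.]+", "-", name).lower()


def comment_out_slop(lines, slop_names):
    """Return lines with every flagged dependency commented out, not deleted."""
    slop = {normalize(n) for n in slop_names}
    fixed = []
    for line in lines:
        match = NAME_RE.match(line)
        if match and normalize(match.group(1)) in slop:
            fixed.append(MARKER + line)
        else:
            fixed.append(line)
    return fixed
```

Commenting out rather than deleting keeps the removal visible in code review, which is the point of leaving the marker in the diff.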

Internal packages that won’t exist on public registries? .slopcheck allowlists handle it. CI pipelines? --json output is machine-readable, and a GitHub Action scans every PR that touches dependency files. Slop detected fails the check and drops a report comment directly on the PR. Block at merge time, not at deploy time.

slopcheck is MIT licensed. pip install slopcheck and you’re running. Scans a full project in about a second on most hardware. The code lives on GitHub if you want to read it, fork it, or tear it apart.

The registry is the trust boundary most devs never think about, the same way nobody thought about model weights until pickle files on Hugging Face started shipping backdoors. Every place AI output touches a public ecosystem is a new attack surface.

Karen, closing us out:

A note for fellow builders

I mostly build tools because I love making life easier for me and my customers. (I’m currently working on a few custom development projects in addition to CarouselBot and Future Scan.)

But I recognize that security, while perhaps less exciting for me, is important too. If something goes wrong, it can damage relationships and businesses.

While slopsquatting is just one of many security issues all of us building with AI need to consider, it’s also one of the easiest to manage once you’re aware of it, especially if you use slopcheck.

Follow Karen. Catch her on Substack at @karenspinner1 or subscribe directly to Wondering About AI.

Frequently Asked Questions

What’s the difference between slopsquatting and typosquatting?

Typosquatting waits for a human to mistype a package name. The attacker registers reqeusts and lives off the fat-fingers. Slopsquatting skips the human error entirely. The AI hallucinates the name, the attacker pre-registers it, and the dev copy-pastes the install command without thinking. Registries run collision detection for names similar to existing packages, but hallucinated names are brand-new strings with no collision. The attack scales because the hallucinations are predictable across prompts, models, and ecosystems.

Has slopsquatting been used in a confirmed cyberattack?

No large-scale breach has been publicly pinned to slopsquatting as of 2026. The precursors are real. A harmless test package under the hallucinated name huggingface-cli pulled 30,000 downloads in three months. An npm package called react-codeshift spread through 237 repositories via AI-generated agent infrastructure with nobody planting it deliberately. The gap between proof-of-concept and weaponized supply chain attack is a free registry account and a malicious install hook. That gap is small.

How does slopcheck work across multiple ecosystems?

slopcheck parses dependency files automatically: requirements.txt and pyproject.toml for Python, package.json for JavaScript, Cargo.toml for Rust, go.mod for Go, Gemfile for Ruby, pom.xml and build.gradle for Java, and composer.json for PHP. Every dependency gets checked against its ecosystem’s live registry. The tool runs checks in parallel with ten workers by default, so scanning a full project typically finishes in under a second. Package managers aren’t invoked until the verification gate is clear.
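The parse-then-check-in-parallel shape can be sketched like this for a package.json. The ten-worker default mirrors the answer above; the function names and the injected `check_one` callable are hypothetical, and the real tool’s internals may look nothing like this.

```python
import json
from concurrent.futures import ThreadPoolExecutor


def parse_package_json(text: str) -> list[str]:
    """Collect dependency names from a package.json document."""
    data = json.loads(text)
    names: list[str] = []
    for section in ("dependencies", "devDependencies"):
        names.extend(data.get(section, {}))  # extending with a dict adds its keys
    return names


def check_all(names, check_one, max_workers: int = 10) -> dict:
    """Run check_one(name) for every name on a thread pool; map name -> result."""
    names = list(names)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(check_one, names))
    return dict(zip(names, results))
```

Registry lookups spend nearly all their time waiting on the network, so threads are a reasonable fit here and `pool.map` keeps results in the same order as the input names.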

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.

Karen Spinner writes Wondering About AI, where she covers agentic AI tools from the chair of someone who uses them daily. She brings the user perspective security researchers forget exists.

As usual, feel free to ask any questions! Huge thanks to Karen for testing this out.
