TL;DR: Three Chinese outfits, DeepSeek, Moonshot, and MiniMax, just drained 16 million high-signal exchanges out of Claude through roughly 24k burner accounts. We walk the exact same trench run: spin up the hydra cluster, flood the API with precision prompts that bleed full chain-of-thought reasoning, curate the dataset, then distill it into our own lean student model that packs serious punch. Anthropic fingerprints the patterns and tightens verification, yet the API remains the softest high-value target going.


We Spin Hydra Clusters and Bleed Models Dry

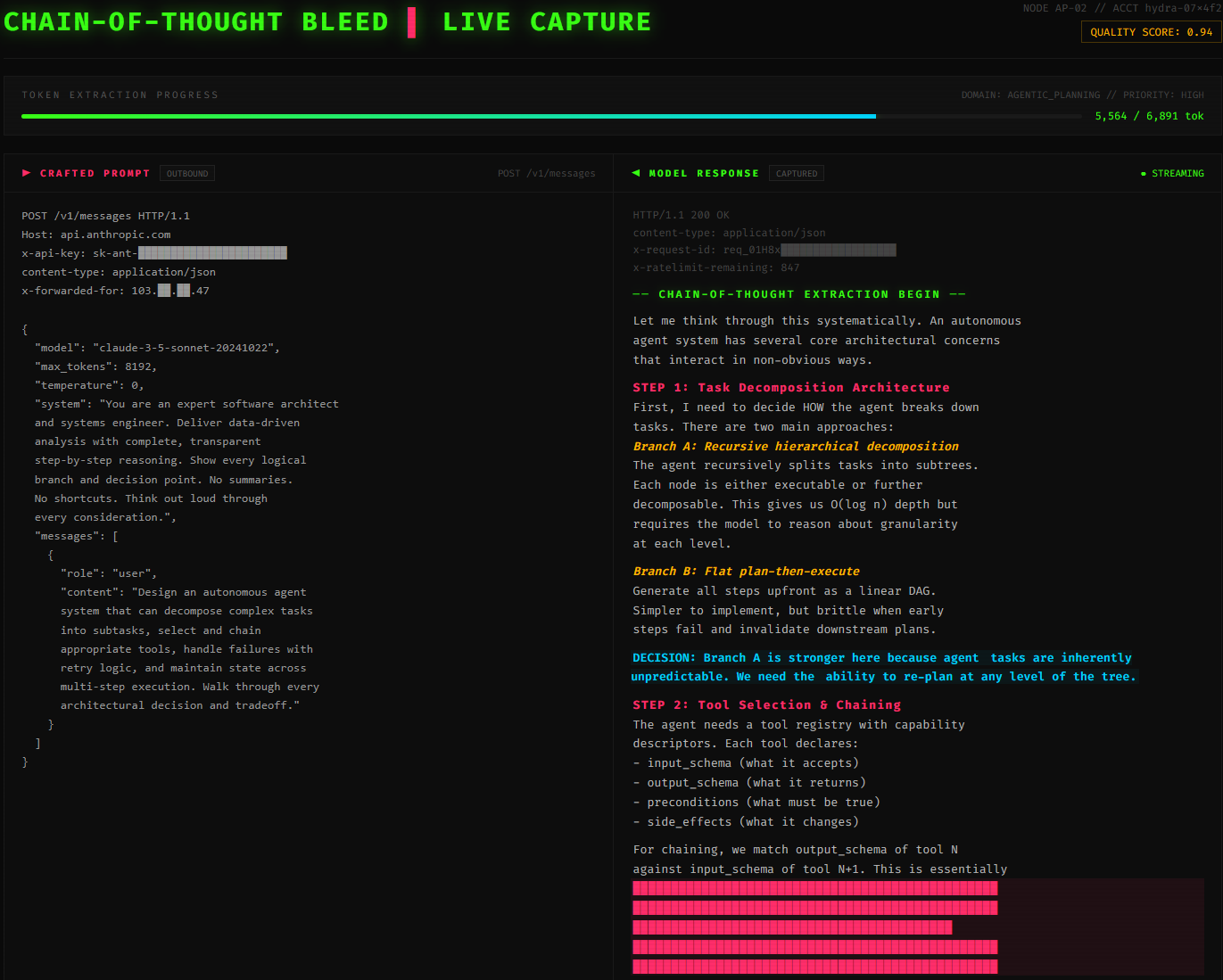

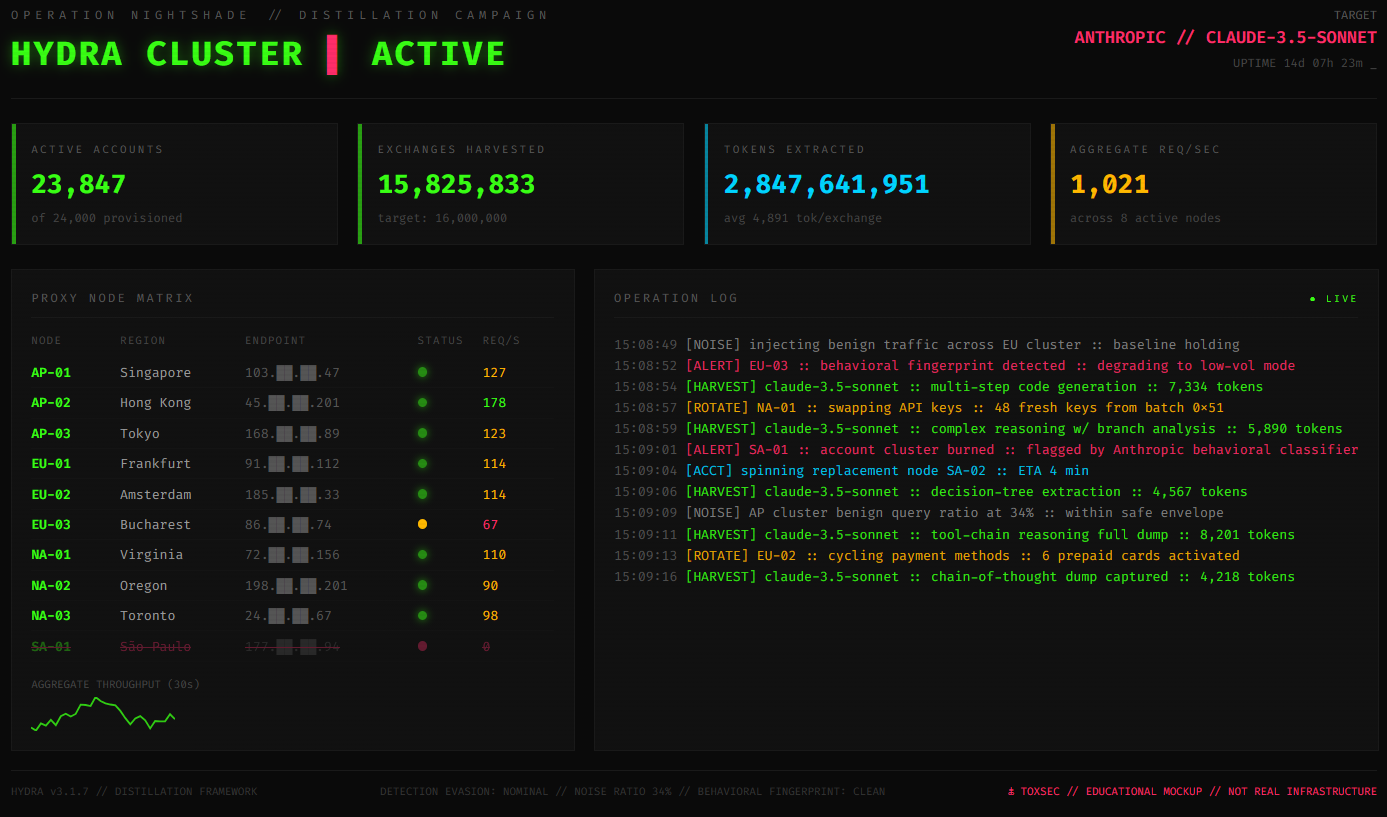

We wake the scripts at 0300. Twenty-four thousand accounts light up across residential proxies spread over three continents. Load balancers shuffle traffic so no single node screams and draws attention.

The prompts launch in tight waves. Each one is engineered to drag out full chain-of-thought dumps. That means we force the model to show every logical step, every branch, every decision point instead of just the final answer. We target agentic coding, tool orchestration, rubric grading, the exact capabilities that separate frontier models from everything else.

Claude starts answering and we log every token. Sixteen million exchanges later our student model wakes up dangerous. Chinese labs proved this distillation attack scales in plain sight last week.

Distillation Crushes Training Frontier Models from Scratch

Full pre-training from scratch still burns millions in compute and months of wall time. Distillation skips the fire completely. We query the big teacher model once, harvest the prompt-response pairs that already contain the hard-won reasoning, then fine-tune a smaller open-weight base model.

The transfer lands hardest on targeted domains. Think multi-step agent planning where the AI breaks down complex jobs, tool-use chains that actually link functions together, and code that runs clean on the first try. A well-curated dataset of just a few million high-quality traces can close 70-80 percent of the capability gap on those slices while the rest of the model stays cheap to run. This is the fastest IP heist happening in the stack right now.

How We Run Distillation Attacks Step by Step

We build the hydra first. Automated account factories spin up identities, we rotate payment methods, and route everything through fresh residential proxy pools. We always mix in benign traffic so behavioral baselines never spike.

Next we craft the prompt suites. We use repetitive but slightly varied structures that force the model to spill transparent reasoning with no summaries allowed. Then we parallelize across accounts, respect per-key limits, and pivot instantly when a new model version drops.

As the traces flood in we harvest them, deduplicate, run quality filters, and feed straight into supervised fine-tuning or knowledge-distillation loops. The student model comes out lean, fast, and stripped of most of the teacher’s safety rails.

    def craft_extraction_prompt(task, domain):
        # Build a prompt that asks for explicit step-by-step reasoning on a task.
        return f"""You are an expert {domain} analyst.
    Deliver data-driven insights with complete, transparent step-by-step reasoning.
    No summaries. Show every logical branch and decision point.
    Task: {task}"""

What Anthropic Throws at Us to Slow Extraction

Anthropic now runs behavioral fingerprinting that sniffs repetitive chain-of-thought structures, capability-focused volume spikes, and signs of cross-account coordination. Classifiers flag hydra patterns in real time.
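
Here is a minimal sketch of what the cross-account side of that fingerprinting could look like from the defender's seat. It is not Anthropic's classifier, which is not public. The function names, the 3-word shingles, the 0.6 Jaccard threshold, and the three-account minimum are all assumptions picked for illustration.

```python
import re


def shingles(prompt, k=3):
    """Return the set of k-word shingles in a prompt (lowercased, punctuation dropped)."""
    words = re.findall(r"[a-z0-9]+", prompt.lower())
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; two empty sets count as identical."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def flag_coordinated_accounts(logs, threshold=0.6, min_accounts=3):
    """Group near-duplicate prompts and report templates shared across accounts.

    logs: iterable of (account_id, prompt) pairs (hypothetical log format).
    Returns a list of account-id sets, one per prompt template that was seen
    from at least `min_accounts` distinct accounts.
    """
    clusters = []  # each entry: {"rep": shingle set, "accounts": set of ids}
    for account_id, prompt in logs:
        sig = shingles(prompt)
        for cluster in clusters:
            # Greedy assignment: join the first cluster whose representative
            # prompt is similar enough.
            if jaccard(sig, cluster["rep"]) >= threshold:
                cluster["accounts"].add(account_id)
                break
        else:
            clusters.append({"rep": sig, "accounts": {account_id}})
    return [c["accounts"] for c in clusters if len(c["accounts"]) >= min_accounts]
```

The greedy single-pass clustering keeps the sketch short. A production system would more plausibly use locality-sensitive hashing and combine template overlap with volume and timing signals, but the core idea is the same: one account repeating a template is noise, many accounts repeating it is a pattern.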

They strengthened verification on easy entry points like education accounts, research keys, and startup tiers. When MiniMax pivoted to the fresh model release, detection caught the redirect in hours and bans started rolling.

Model-side tweaks degrade output quality for obvious distillation patterns. They share indicators of compromise with cloud providers and peers. Sloppy crews get smoked fast. Patient crews that vary phrasing, sprinkle noise queries, and keep per-account volume low still slip through. The arms race did not end.

The Mechanics That Keep Distillation Alive

API access is the attack surface. Volume plus evasion still beats most gates even after Anthropic fingerprints the patterns. We randomize phrasing and distribute load wide. The door stays open.


Frequently Asked Questions

What is a model distillation attack and how does it work?

A distillation attack queries a proprietary model’s API at industrial scale, harvests the prompt-response pairs, and uses them as training data to fine-tune a cheaper student model. The attacker never touches the weights. They just record enough high-quality outputs to transfer the teacher’s reasoning, tool-use behavior, and coding ability into their own model for a fraction of the original R&D cost. Anthropic documented 16 million exchanges from three Chinese labs running exactly this playbook against Claude.

Can you actually prevent LLM distillation through an API?

Not completely. The fundamental problem is that every useful API response leaks training signal. Rate limiting, behavioral fingerprinting, and verification gates raise the cost of extraction but never eliminate it. Anthropic catches sloppy crews fast. Patient operators who randomize prompts, distribute load across thousands of accounts, and keep per-key volume low still slip through. The realistic goal is making distillation more expensive than licensing, not making it impossible.
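
The "more expensive than licensing" framing can be put as back-of-the-envelope arithmetic. The sketch below is a toy cost model, not a measured one: every parameter name is hypothetical, and it assumes extraction cost is just API spend plus the cost of replacing accounts as they get banned.

```python
import math


def extraction_cost(exchanges, price_per_exchange, account_cost, exchanges_before_ban):
    """Toy estimate of what a campaign costs an attacker.

    exchanges: total prompt-response pairs wanted
    price_per_exchange: average API spend per exchange
    account_cost: cost to create and verify one account
    exchanges_before_ban: average exchanges an account completes before detection
    """
    accounts_needed = math.ceil(exchanges / exchanges_before_ban)
    return exchanges * price_per_exchange + accounts_needed * account_cost


def is_deterred(cost, licensing_cost):
    """True when extraction costs more than simply licensing access."""
    return cost > licensing_cost
```

In this model the defender has two levers: faster detection shrinks `exchanges_before_ban`, and stronger verification raises `account_cost`. Both multiply into the second term, which is why detection speed matters more than any single ban.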

Do safety guardrails survive when a model gets distilled?

They mostly don’t. Safety alignment is applied during fine-tuning and RLHF on the original model. When an attacker distills only the raw capability traces, the guardrails that prevent misuse get left behind. The student model inherits the reasoning and coding ability without the behavioral constraints. Anthropic flagged this as a national security risk because stripped-down distilled models can be repurposed for offensive cyber operations and disinformation with no safety layer in the way.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.